NCache High Availability Demo

This video demonstrates how NCache keeps your data highly available by providing 100 percent uptime and data reliability. In this particular demo, I'm going to start and stop a cache server node in a running cache cluster and show you how NCache handles that without any downtime or data loss for your client applications.

Today, I will talk about how you can achieve high availability with NCache and how NCache provides it. As you know, NCache is used in mission-critical applications, which cannot afford any downtime.

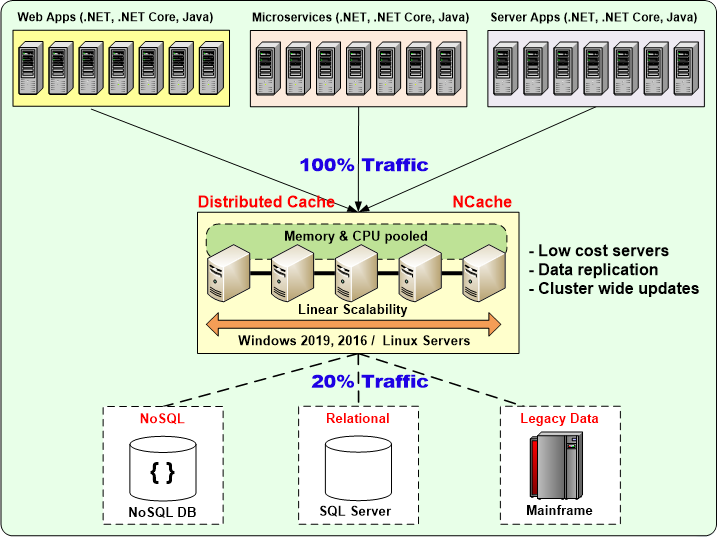

Here is a picture where you have an application server farm that is using a cache cluster of multiple servers, and then there are multiple data sources. And, there are usually a minimum of two or more cache servers.

And, NCache provides you dynamic cache clustering, where you can add or remove cache servers at runtime without stopping the application, because NCache has a peer-to-peer architecture. And, I will demonstrate this in the video today.

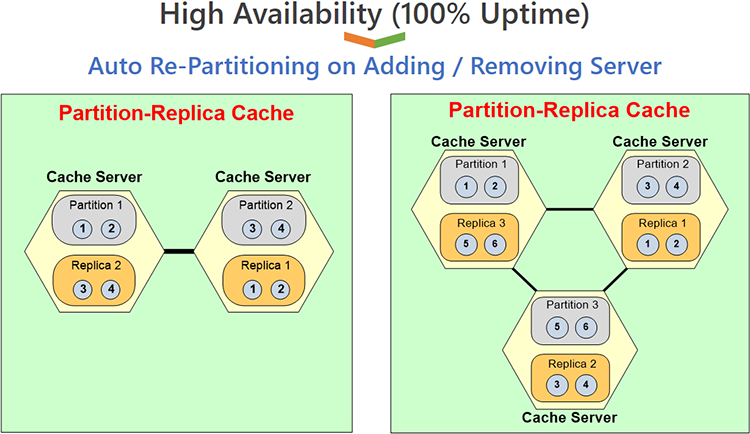

I am going to use the partition-replica topology, where we'll start out with one server and keep adding more, and you'll see that in this topology every server has one partition. So, if I start with one server, there's only one partition, and the replica is either on that same server or maybe there's no replica. Then, as you add a second server, you have two partitions. So, the entire data of the cache gets divided in half, and each partition is replicated onto a different server.

And then, as you add a third server, you have three partitions and the same data gets divided up into three partitions. Each partition now has one third of the data instead of one half, and each partition is backed up onto a different server. So, this is what I’m going to demonstrate.
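To make the topology concrete, here is a minimal sketch in Python of how partitions and replicas could be laid out as servers are added. It is only an illustration, not NCache's actual placement logic: the function name, the server names, and the rule that each server holds the replica of the previous server's partition are all assumptions made for the example.

```python
def partition_replica_layout(servers):
    """Illustrative layout: one active partition per server, with each
    partition's replica placed on a different server (when one exists)."""
    n = len(servers)
    layout = {}
    for i, server in enumerate(servers):
        # Every server owns exactly one active partition.
        active = f"P{i + 1}"
        if n > 1:
            # Assumption: this server holds the replica of the previous
            # server's partition, wrapping around at the start of the list.
            replica = f"R{(i - 1) % n + 1}"
        else:
            # With a single server there is no other server to hold a replica.
            replica = None
        # Each partition holds an equal share of the cached data.
        layout[server] = {"active": active, "replica": replica, "share": 1 / n}
    return layout


# Hypothetical server names, used only for this example.
for cluster in (["server1"], ["server1", "server2"], ["server1", "server2", "server3"]):
    print(partition_replica_layout(cluster))
```

With two servers each partition holds one half of the data, and with three servers each holds one third, which matches the picture above.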

Adding Clients & Servers at Runtime

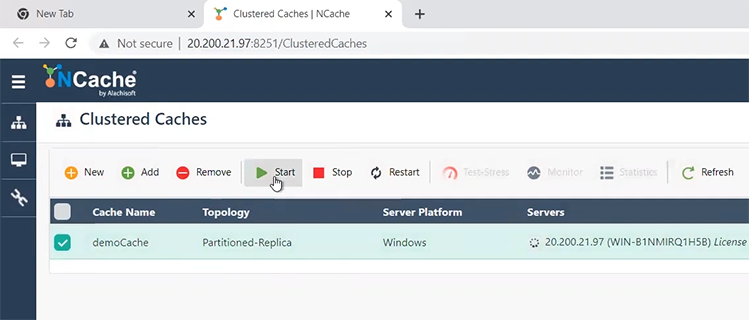

Let me quickly jump into the product and show you this. I have three cache servers that I will use. I will start with one and then I’ll keep adding more. And then I have one client; I am actually sitting on the client machine right now. So, I’m going to just click on this and open the NCache Web Manager, which is a web-based management tool. And currently, this is how NCache comes when you install it. So, when you install NCache on all three of these servers, a ‘democache’ gets installed on each server. So, I’m going to now start the democache and you'll see that the democache is started.

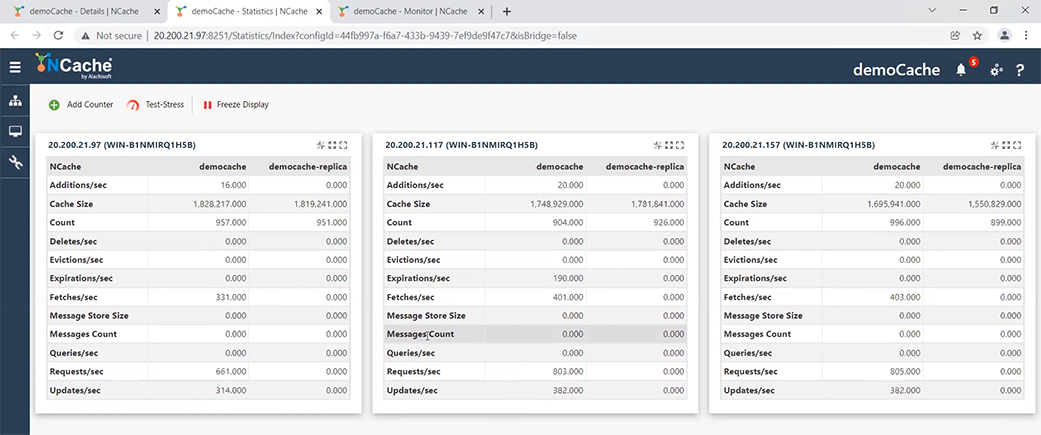

Once it’s started, I will also open statistics on it and I will also open the cache monitor. Monitoring gives me a very nice dashboard. There is a server dashboard and there is a report dashboard. The report dashboard is very much like the statistics window. So, I’m just going to stick to the server dashboard and I will keep it here. And, now that I have this running, I need to run the clients.

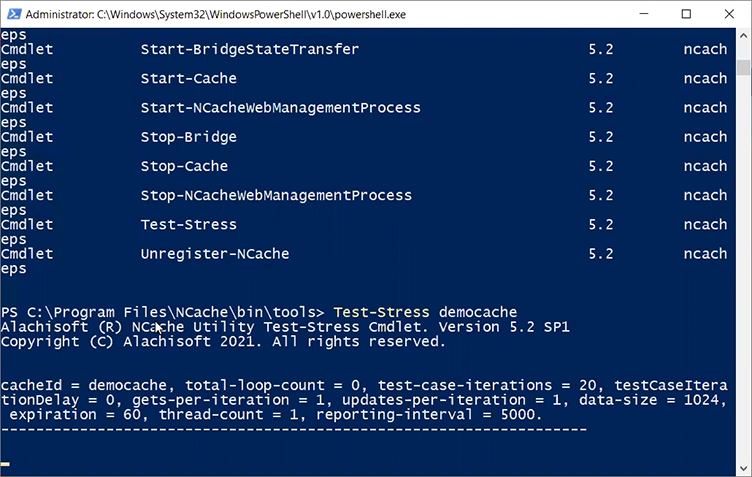

NCache comes with a PowerShell-based stress test tool. So, just let me type the word NCache. There's the PowerShell management tool, so I’m going to run 'Test-Stress' and give it the name of my cache, which is 'democache', as you can see right here: 'Test-Stress democache'. The cache name is case insensitive, so you can enter it any way you like.

When I start the application, this is my stress test tool. The reason it's there is so that you don't have to do any programming to start testing NCache. You can also simulate stress through this tool. That's why it is called the stress test tool. But, you know, just imagine this would be your application.

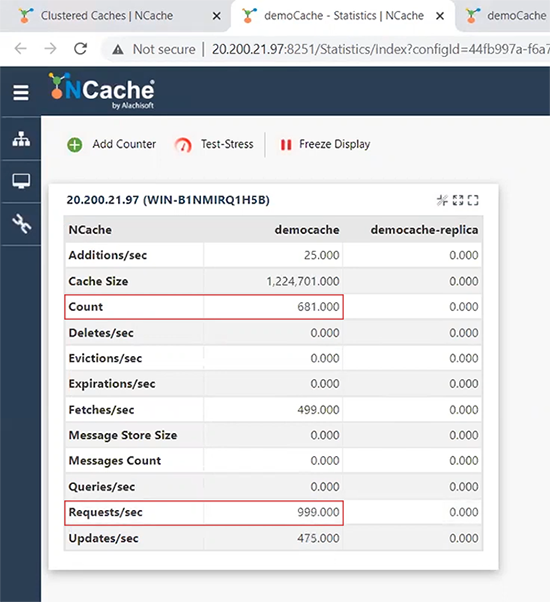

Now, if I come to my statistics window, you'll see that I have a count of about, you know, 400 items that keeps increasing. I have about 981, or about 1000, requests per second from that one client.

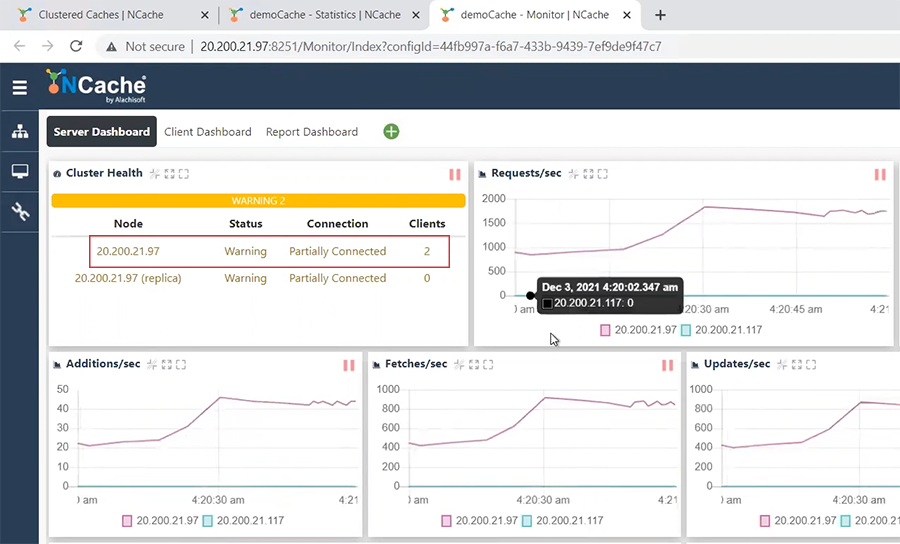

I’m going to now go ahead and run a second client application. So, I can keep adding multiple clients by starting multiple stress test tool instances or, I’m sorry, PowerShell instances. So, if I come here, I again say Test-Stress democache. Once I do this, you'll see that the request count has almost doubled now. Because each client, as you saw in this picture, is putting its own transaction load onto the server, the transaction load on the server has almost doubled. I can also see here in my server dashboard that I have two clients connected to a one-node cache cluster.

So, there's only one server in the cluster that I am using currently. Okay, now let's assume that what happens in real life is that, you know, your capacity needs grow. And, by the way, we recommend a minimum of two cache servers. So, you should never have only one cache server running. But, I just wanted to start with one so I could add two servers at runtime to show you.

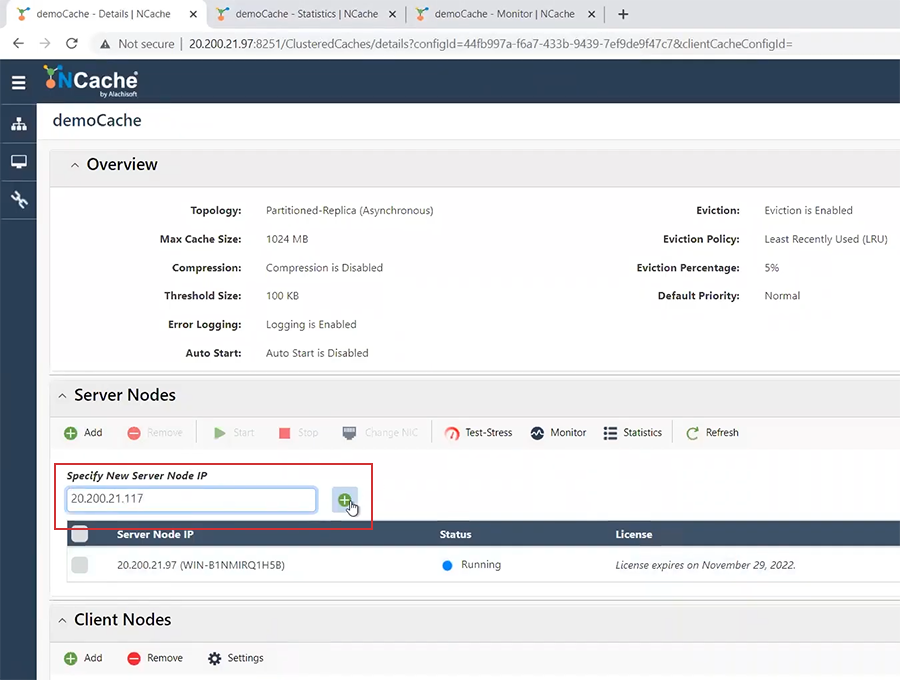

So, the next thing I’m going to do is add a second cache server, and the second cache server that I’m going to add is 117. I will go ahead, enter 117, and say add. It has been added but it is stopped. So, I’m going to click it here and say start. That will just start the server node.

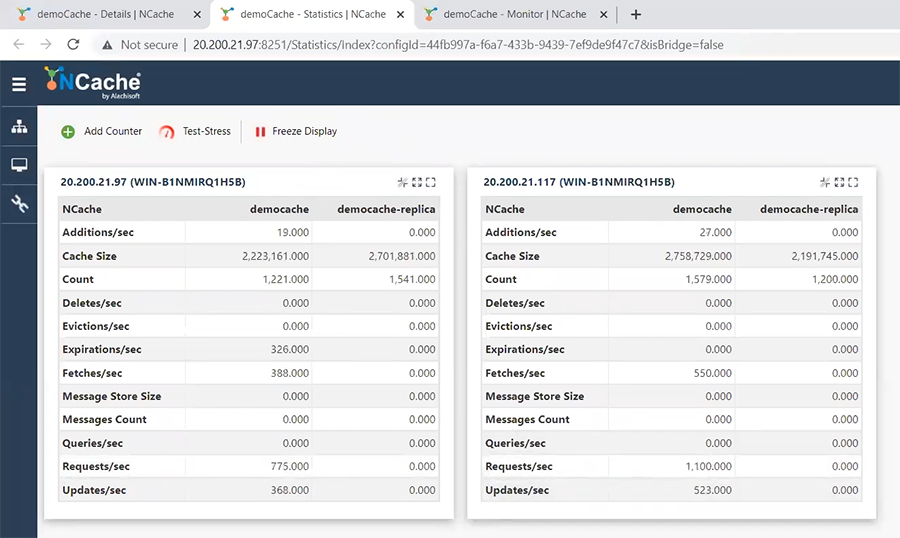

Once I start it, you'll see that a second node gets added here. There. And the cache count has dropped. I did not show you, but the cache count was actually about double that; now that there are two servers, the cache count has dropped and the requests per second on each server have been cut in half. Because half of the requests are being processed by this cache server and half of them are being processed by this cache server. So, this is how the count has dropped.

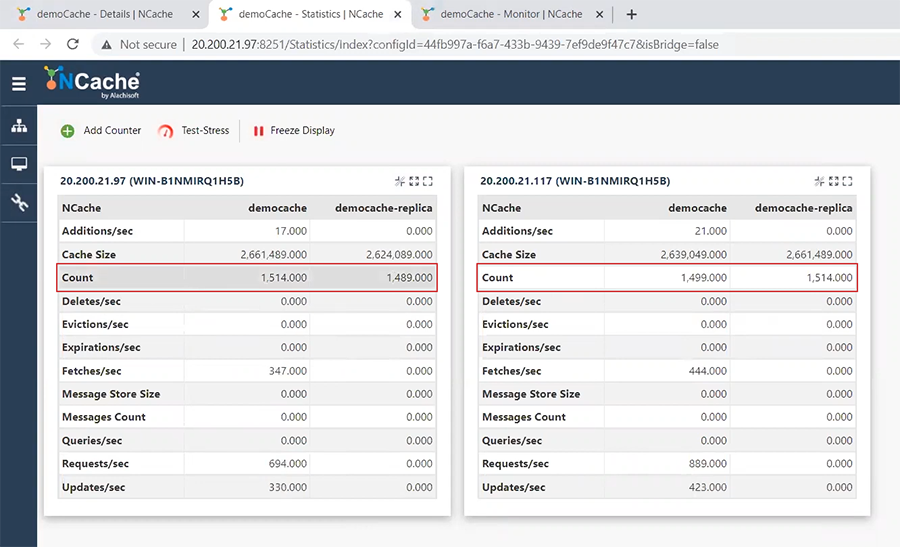

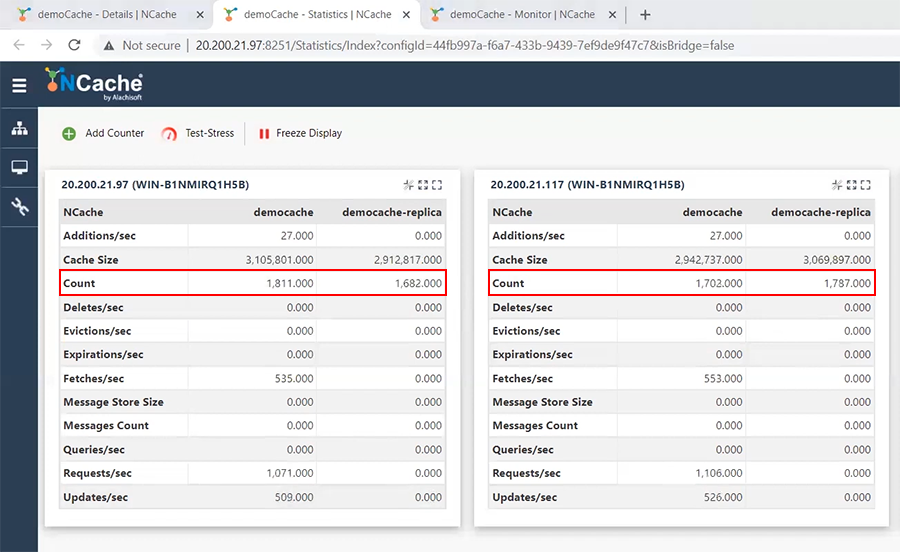

So, now I’ve just added a cache server without stopping the application. You can see the stress test tool is running here and it's also running here. No issues at all. And what happened now is that I was a one-server cluster and now I’m a two-server cluster. So, there's partition one and partition two, and every partition is backed up onto the other server. So, let me show you what that is. Here is partition one. It has this many items and its replica is right here. You see this is almost the same quantity as this one. And then this is my partition two, about 1500 something, and it's backed up here.

Now, this replication is asynchronous. So, this count will not always be exact. But, it will eventually be exact once you stop the transactions.
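Here is a small Python sketch of why the replica count can lag behind the active partition and then catch up. It is a toy model under stated assumptions, not NCache code: the class name, the in-memory queue, and the `replicate` method are all hypothetical.

```python
from collections import deque


class AsyncReplicatedPartition:
    """Toy model: writes hit the active copy immediately and reach the
    replica later, when queued operations are drained."""

    def __init__(self):
        self.active = {}
        self.replica = {}
        self._pending = deque()  # operations not yet applied to the replica

    def put(self, key, value):
        # The client's write completes against the active partition right away.
        self.active[key] = value
        # The same operation is queued for the replica (asynchronous step).
        self._pending.append((key, value))

    def replicate(self, max_ops=None):
        """Apply up to max_ops queued operations to the replica (all if None)."""
        applied = 0
        while self._pending and (max_ops is None or applied < max_ops):
            key, value = self._pending.popleft()
            self.replica[key] = value
            applied += 1
        return applied


partition = AsyncReplicatedPartition()
for i in range(5):
    partition.put(f"key{i}", i)

partition.replicate(max_ops=2)  # the replica is still behind the active copy
lagging = (len(partition.active), len(partition.replica))

partition.replicate()  # writes have stopped, so the replica catches up
caught_up = (len(partition.active), len(partition.replica))
```

While writes keep arriving, the two counts differ slightly; once the load stops and the queue drains, they match.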

Ok. Now, let's say that my transaction load keeps on growing. My business is doing well and I need to add more servers because I need to increase the transaction capacity. I can also add more clients because that's what's going to happen.

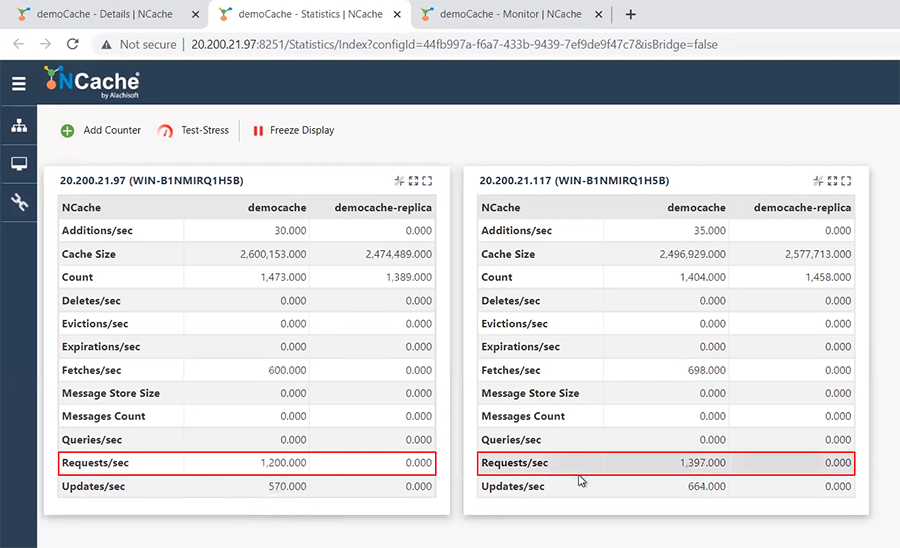

The first thing I’ll do is add more clients, more application servers I mean. And that will result in a higher transaction load. Just watch, this transaction load will increase. There, see. It went to 1182 per server instead of 800 something, and actually it's gone even higher, to 1200 something.

So, the transaction load keeps on going up. I can also see it here. I can see the requests per second on each server; the load is being distributed across the servers, and it is increasing as I add more clients.

So, now that I’ve added more clients, at some point I will notice that my cache servers are starting to slow down, because the transaction capacity is maxing out or maybe the storage capacity, how much memory I have in each server, is maxing out. So, I need to add one more server.

In any case, as I said, we recommend a minimum of two servers. So, you should never have a single-server cluster, in production at least, because that does not give you high availability. So, when you have a minimum of two and you reach the capacity limit of these two, then it's time to add a third server.

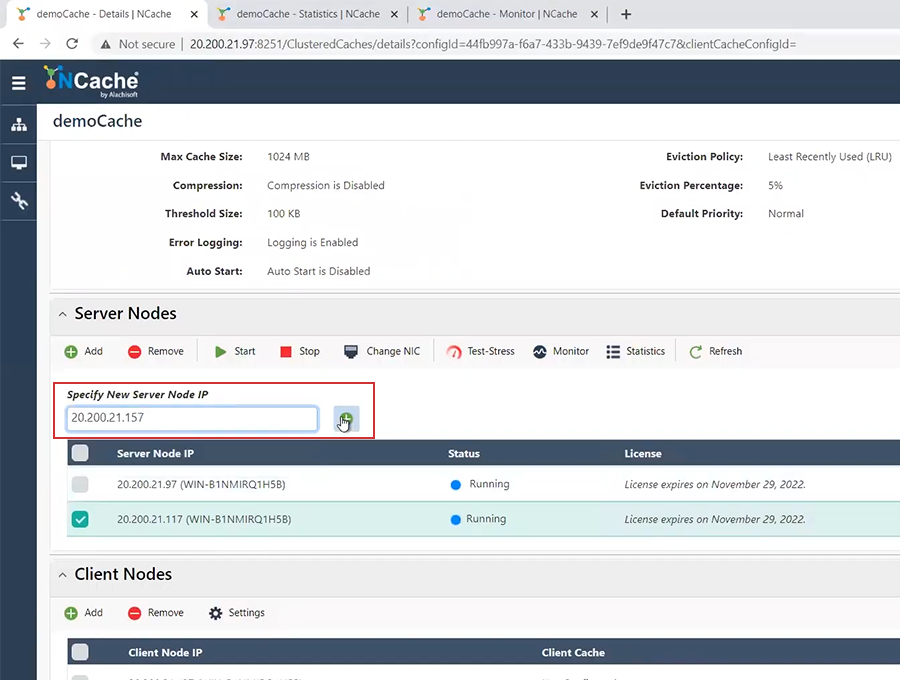

How do we add a third server? I have a third server right here, 157. I will come here straight away and say add a server, and it is 157. Again, it is added but it is stopped. I will come here, select this and say start.

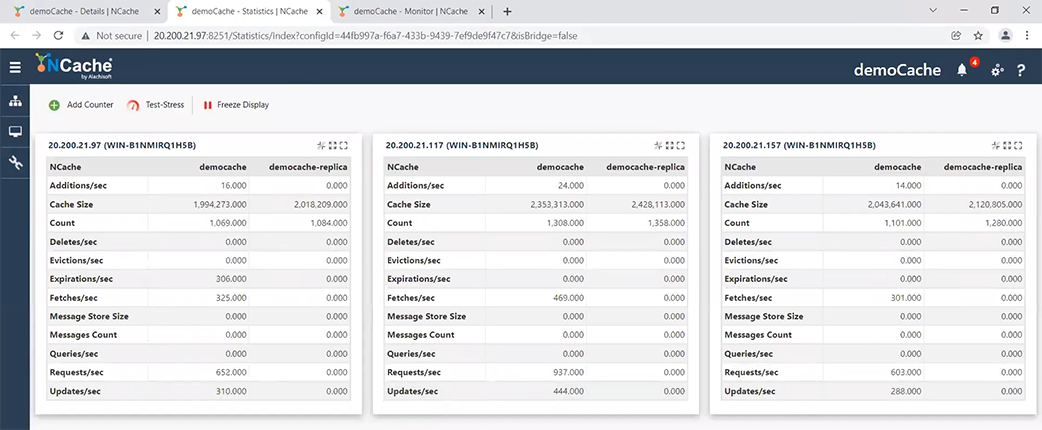

Once I start it, notice this 1800 is going to go down. Watch, as soon as the third server kicks in it will share the load. There. See, it came down to about 1100 something each.

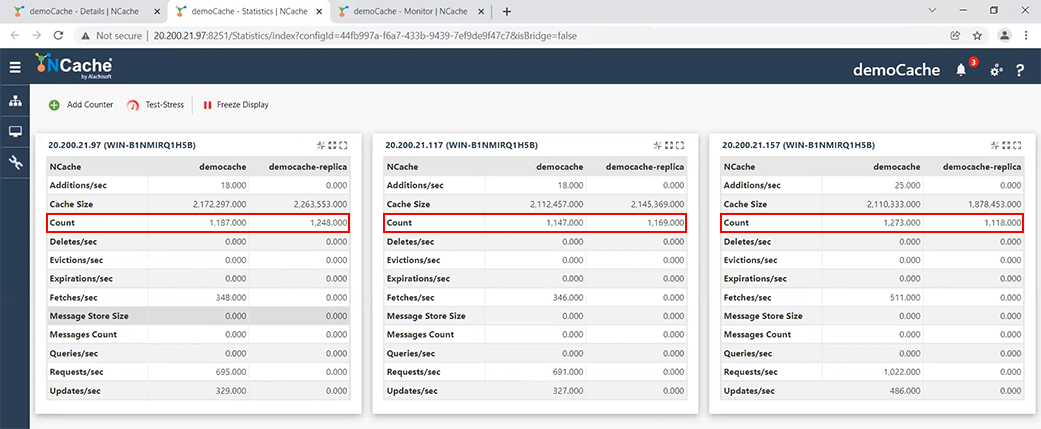

Because now I have three servers instead of two and, as I showed here, when you have three servers, you have three partitions. So, the data from both of these partitions gets divided up further into three partitions.
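As a rough check on those numbers, here is the arithmetic as a short Python sketch. It assumes an idealized, perfectly even split, and the helper name is made up for this example; the figures on the dashboard fluctuate, which is why the demo shows about 1100 rather than exactly 1200.

```python
def per_server_share(total, servers):
    """Evenly split a cluster-wide total across the given number of servers."""
    if servers < 1:
        raise ValueError("need at least one server")
    return total / servers


# Figure from the demo: two servers at roughly 1800 each.
cluster_total = 2 * 1800

# Idealized figure per server once a third server shares the same total.
three_way = per_server_share(cluster_total, 3)
print(three_way)
```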

So, now each partition has a replica on a different server, and that's how the data is actually distributed. So, I have just demonstrated that you can add more servers without stopping either the application or the cache. I mean, the application is totally, you know, unaffected by your adding more servers, which is a great thing about NCache, that it lets you do that.

Okay. So, now there comes a time when maybe you also need to bring a server down. There are two ways that you can bring a server down. One is that you're bringing it down permanently because you're reducing your capacity. Maybe you're coming down from three servers to two because it's a seasonal business. You had a peak usage time during the holiday season and now you're going to go back to your default, you know, smaller configuration. So, some of these servers are going to be removed. So, let's go ahead and do that.

I’m going to remove server 157 here. So, I’m going to pick server 157 and say stop. First I will do stop. Now, as you can see, as I’m stopping it, this count will go down and this count will increase further. See, it has gone to about 2000 each again. That means the data has shifted from this one onto these.

I have basically gone from a three-partition configuration to a two-partition configuration. Each partition is replicated, as you can see here. So, this partition is replicated right here and this partition is replicated right here, okay. And, no effect on the applications, as you can see.

So, that pretty much handles the situation where you need to either add a server because you need to increase capacity, or bring a server down because your capacity need has changed. In this case, the capacity need has changed because of seasonal use.

Maintenance Mode

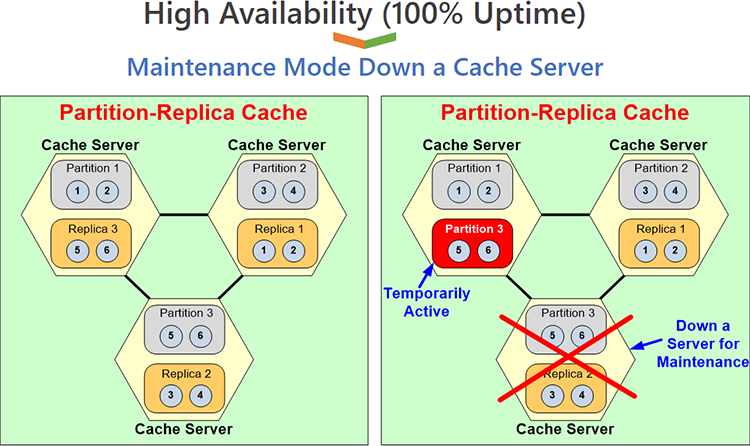

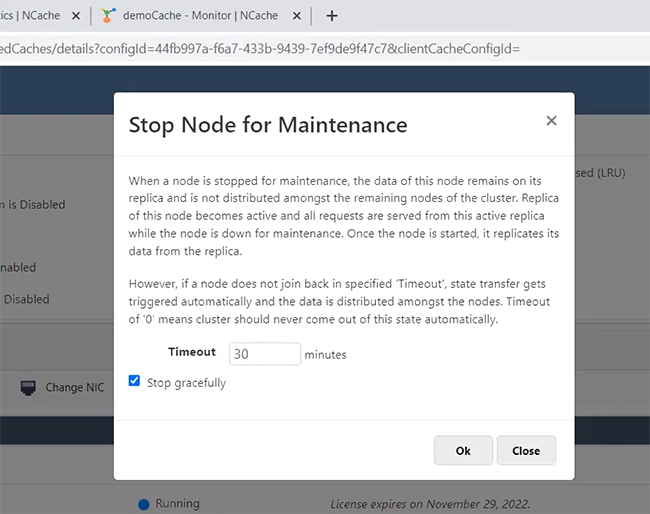

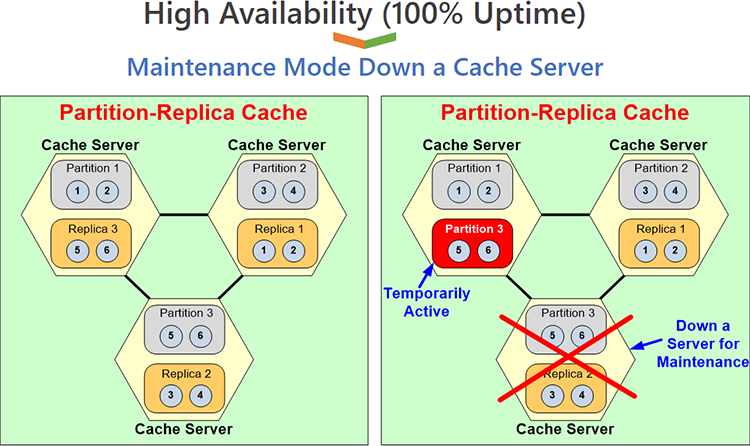

There is another situation, which is what we call maintenance mode, where you need to bring a server down not because your capacity need has gone down but because you need to do some maintenance. Let's say you need to apply some operating system patches or something. So, you need to bring a server down for maybe five minutes, ten minutes, half an hour, something like that. But your cache has huge amounts of data. I mean, our customers have tens of gigabytes of data in each server. So, if you have a three, four, five, six server cluster with, you know, tens of gigabytes of data in each server, bringing a server down actually impacts performance. Because if you had to repartition everything to go from three partitions to two partitions, you're going to do a lot of state transfer, and for what? Only to add the server back again.

So, we came up with a feature called maintenance mode, where you can tell NCache: okay, I’m bringing this server down, but I don't want you to repartition the cache. Keep this as a three-partition cache: partition one, partition two, and replica three, which is right here, will become partition three. And this stays active. It's a temporary arrangement. Once you're done, you'll bring this node back up and it'll be back to this picture again.

Let me show you how you can do that, okay. So, first of all, I will get back to the three-server configuration. I’m going to add this one, well, assume that I had removed it and now I’m adding it back. I should have removed it, but I only stopped it. Anyway, I am going to bring it back. I am again on a three-node cluster. My data is evenly distributed.

My transaction load is evenly distributed and I can also see it here. Actually, this is not showing it yet; it will. But anyway. So, once I have this, now I need to do maintenance. So, I’m going to come here and, again, remember that each node has about 1200 items, okay.

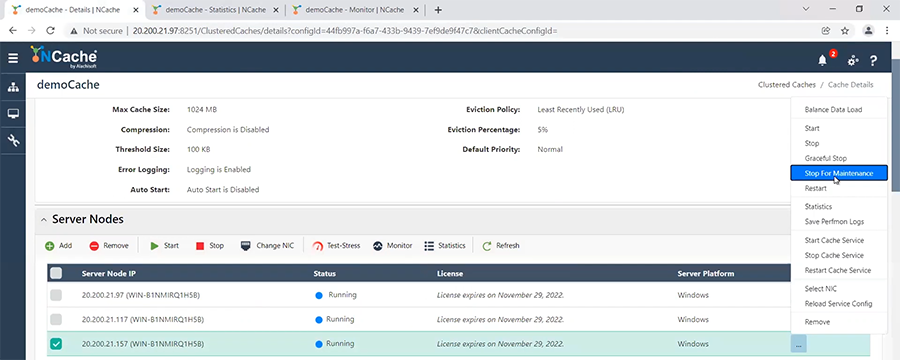

So, if I were to bring it down to a two-node cluster, this should go up to 2000+ items in each node, which is not what is going to happen, because I’m going to come here and say stop this for maintenance.

When I click on this, it asks me how long I want to keep it in maintenance. Obviously, this timeout is very important, because at the end of that timeout NCache assumes that it's no longer maintenance. If you don't add the node back by this time, NCache assumes that you have actually removed it permanently, like the other removal that I just showed you, and it will remove the node and repartition the cache.

But if you add it back within this time period, and it's a configurable time period, then NCache will do what I just showed you: it will not repartition, it will just keep that replica in a temporary partition mode. So, let me just say okay; I’m stopping this now. So, let’s come here: 1300, 1300, 1470, and now this one is completely gone. But notice these counts have not gone up. Why? Because one of the replicas has become an active partition. You can't tell which one from this picture, but the fact that the count has not gone up means the replica is still there. This replica has become an active partition. So, now server one has partition one and partition three, server two has partition two and a passive replica one, and server three is down, which, you know, I need to bring down for maintenance.
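The behaviour just described can be sketched in a few lines of Python. This is a simplified model built only from what the demo shows, not NCache's implementation: the function names, the dictionary layout, and the server names are hypothetical, and the replica placement is simply the one described above.

```python
import copy


def stop_for_maintenance(layout, stopped):
    """Return the layout while `stopped` is down for maintenance: no
    repartitioning, the surviving replica of its partition becomes active."""
    remaining = {s: copy.deepcopy(roles) for s, roles in layout.items() if s != stopped}
    for partition in layout[stopped]["active"]:
        for roles in remaining.values():
            if partition in roles["replica"]:
                # Promote the passive replica to a temporary active partition.
                roles["replica"].remove(partition)
                roles["active"].append(partition)
    return remaining


def action_after_stop(minutes_down, timeout_minutes):
    """Within the maintenance timeout the partitions are kept as they are;
    after it, the node is treated as permanently removed."""
    if minutes_down <= timeout_minutes:
        return "keep partitions"
    return "repartition"


# Three-server layout before maintenance (server names are hypothetical).
layout = {
    "server1": {"active": ["P1"], "replica": ["P3"]},
    "server2": {"active": ["P2"], "replica": ["P1"]},
    "server3": {"active": ["P3"], "replica": ["P2"]},
}
during_maintenance = stop_for_maintenance(layout, "server3")
print(during_maintenance)
```

In this model, server one ends up with partitions one and three, and server two keeps partition two plus the passive replica of partition one, so the item counts on the remaining servers do not jump the way they do after a permanent removal.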

So, now you go and do your maintenance. You apply your patches, and now you're done and you want to bring it back up. So, again you just come here; you don't even need to re-add it, just say start again, because it was only stopped. And, by the way, through all of that, the application was still running without any interruption. So, none of those changes require any application interruption. So, I’m going to say start again. And once I say start, now watch, this will regain its position. It's catching up with the data, and you'll see that it's back at the same level that it was at previously.

Conclusion

So, as you can see, I have demonstrated both adding and removing nodes at runtime on a permanent basis and also temporarily removing a node for scheduled maintenance. So, that is the end of my demo. I hope I have demonstrated to you that NCache gives you high availability. It's 100% uptime, where you don't have to bring any of the applications down. There's no interruption in the application.