Developing Azure Microservices & App Services with NCache

Recorded webinar

By Ron Hussain and Adam J. Keller

Optimize the performance and scalability of your Azure cloud apps so they run well under peak load. Use a fast, scalable, shared data repository to improve the experience of your Azure service applications without any data or session loss.

This webinar demonstrates how to integrate Azure Microservices and App Services with a .NET distributed cache in the cloud.

We'll cover:

- Intro to Azure Microservices and App Services

- Using NuGet packages for NCache client application resources

- Making NCache API calls from the Azure Services

- Creating and deploying a distributed cache in Azure

- Using the cache for Azure Services

- Monitoring the cache used by Azure Microservices and App Services

The topic we've selected today is using NCache as a distributed caching system inside Azure services. My main focus today will be Azure microservices. I'll talk about some architectural details of microservices, why you need a distributed cache in microservices, and what the limitations are. Then I'll also talk about App Services, that is, Microsoft Azure web application service projects, where your web applications may also need a distributed caching system for data, sessions, and output caching. Those are the features I'm going to highlight. The hands-on portion of this webinar will focus on the deployment architecture: how exactly a distributed cache gets deployed and how exactly your applications connect to it.

Azure Microservices & App Services

So, first I'll talk about some introductory details about Azure microservices and app services.

What is a Microservice?

What is a microservice? This is a very common term that we hear a lot these days. Microservice platforms are offered by Microsoft Azure, by AWS, and by some third-party providers as well. For example, Akka.NET is a popular one: Akka started on the Java platform and was then ported to .NET. A microservice encapsulates a customer's business scenario and takes care of a specific problem within the application. It can be developed by a small engineering team, and it can be written in any programming language.

It can be stateful or stateless, but it is independent within the application. It can be independently versioned, implemented, and deployed, and the nice thing about the microservice architecture is that each service can be scaled out independently. You don't have to scale out the entire application. If a portion of the application fits these characteristics, you can implement it as a microservice, your application can use it as a microservice resource, and you can scale out that particular portion without worrying about the scalability of the entire application. So, it gives you a more granular approach to the different segments of the application.

I've highlighted two common points here: your microservices communicate through well-defined interfaces and protocols, and they interact with other microservices as well. They also have unique names, which is something you get in Microsoft Azure, and they need to remain consistent and available in case of failures. That's another important characteristic of a microservice. This is something I've taken from the Microsoft MSDN website, and I'm pretty sure everybody knows what a microservice is, so this should cover the basic details, alright?

Microsoft Azure Service Fabric

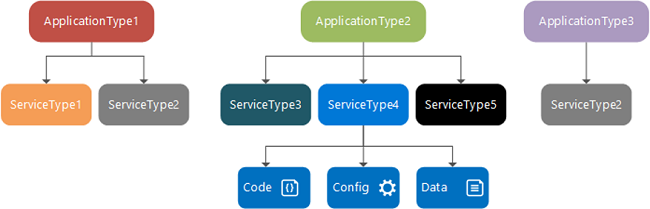

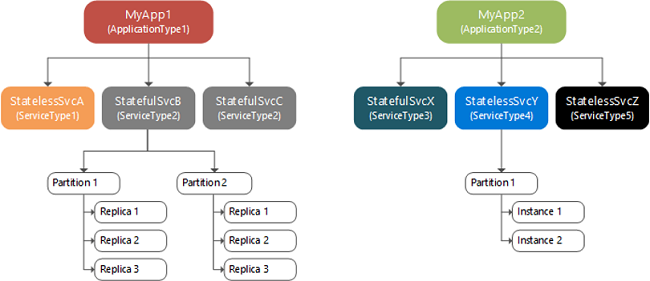

Here's the diagram: your application type has a service type, which can be stateful or stateless. I've already covered that.

There's another application type that can also have its own microservices, and it may be talking to back-end sources for code, for configs, or for data it needs access to, or it may be calling a web service. This is just an idea of how microservices are architected. There can be multiple applications, and each application can have multiple microservices, which in turn run on a cluster of servers (instance clusters). You can have partitions within a microservice; that's primarily the stateful case. If you have multiple microservices, you can choose to make each one stateful or stateless.

If they are stateful microservices, your data is partitioned and each partition has replicas. So, if any partition goes down, a backup is made available automatically. This is part of Microsoft Azure, and it's a general part of the microservice architecture as well: Amazon gives you that, and Akka.NET follows a similar model. So, a microservice can keep state within its own architecture by default. I'll highlight where exactly you need a distributed cache like NCache, why you need it, and which bottlenecks and issues a distributed cache helps address as part of this webinar.
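To make the partition-and-replica idea concrete, here is a minimal Python sketch. It is not the Service Fabric API: the Partition class, the hash-based key routing, and the "promote the first replica" rule are hypothetical simplifications that only show why losing a primary copy does not lose the data.

```python
import hashlib


class Partition:
    """One partition of a stateful service: a primary copy plus replicas."""

    def __init__(self, name, replica_count=2):
        self.name = name
        # Index 0 is the primary; the remaining dicts are secondary replicas.
        self.copies = [dict() for _ in range(replica_count + 1)]

    def write(self, key, value):
        # Apply the write to the primary and to every replica.
        for copy in self.copies:
            copy[key] = value

    def read(self, key):
        return self.copies[0].get(key)

    def fail_primary(self):
        # Drop the failed primary; the first replica takes its place.
        if len(self.copies) < 2:
            raise RuntimeError(f"{self.name}: no replica left to promote")
        self.copies.pop(0)


def partition_for(key, partitions):
    # Stable hash routing (Python's built-in hash() is salted per process).
    digest = hashlib.sha256(key.encode()).digest()
    return partitions[int.from_bytes(digest[:4], "big") % len(partitions)]


partitions = [Partition(f"p{i}") for i in range(3)]
partition_for("order:42", partitions).write("order:42", {"qty": 5})
partition_for("order:42", partitions).fail_primary()
print(partition_for("order:42", partitions).read("order:42"))  # {'qty': 5}
```

Real platforms also re-create a new replica after a promotion; the sketch stops at the failover itself.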

Introduction to Azure Web App Services

Then I will also talk about Azure web application services, which make it easier to deploy web applications in Microsoft Azure.

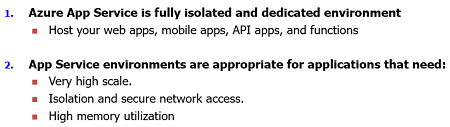

You don't need an entire VM. It cuts down on your DevOps time, the expertise needed, and the time it takes, because it's managed for you. So, you just get an instance-based application deployed in Microsoft Azure. It's a fully isolated, dedicated environment for your applications. You can scale it out and host multiple applications: mobile apps, web apps, or function apps, whichever you need, using the Microsoft Azure Web App Service model.

It's highly scalable, and, as I've already mentioned, isolation is guaranteed. You get secure network access, and you can also use high-memory instances and other resources as needed. This is a transition most deployments are making: instead of having an entire VM host your application in a web farm or web garden scenario, where you have to manage the whole VM, you take an instance-based approach where a service-based application is deployed. It could be a typical MVC application that uses sessions and makes database calls, so all the regular MVC web application concepts apply; it's just that the deployment is slightly different.

Distributed Caching

Now we've defined Azure microservices and Azure web application services. In Microsoft Azure, a microservice is a Service Fabric project, whereas a web app service is an App Service project. I'll highlight details around both, and I'll talk about the networking piece you need in order to use a distributed cache.

What is an In-Memory Distributed Cache?

First, I'll talk about distributed caching concepts in general.

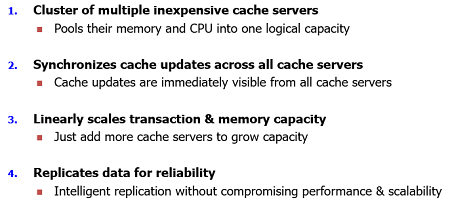

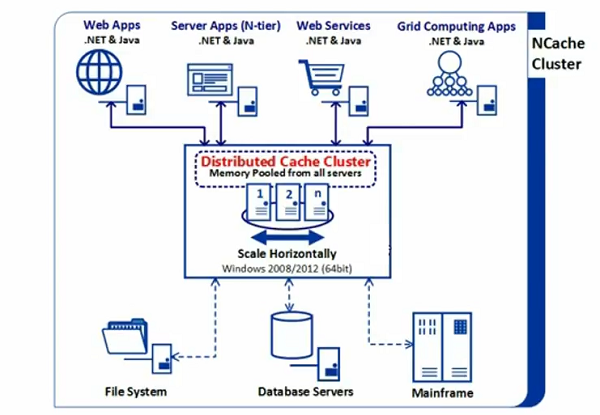

What is a distributed cache? A distributed cache is a cluster of inexpensive cache servers that are pooled together for their memory and their computational power. In a distributed cache, memory is the main storage, so you pool all the memory resources into one logical capacity.

Then your cache updates are synchronized across all cache servers: any update applied through any given server is reflected for all caching servers. So, a distributed cache is a cluster of multiple inexpensive cache servers joined together into one logical capacity. In Microsoft Azure, these servers are going to be VMs. NCache is deployed on virtual machines; that's the current deployment option. NCache can also be deployed in Docker, so that's another option. Either way, each cache server runs on a VM that is treated as a separate resource within Microsoft Azure, and that's the typical deployment as far as NCache server-side configuration is concerned.

The next characteristic of a distributed cache is that all cache updates are synchronized across all caching servers. You could have two, three, or four caching servers, and they should have a consistent view of the data: the data should be visible in the same state to all the applications connected to it.

It should also scale out linearly for memory and transactions: as you need more storage and more resources, you simply add more caching servers and capacity grows linearly. Replication is another part of it. Any data that gets added should be replicated, operation by operation, so that if a server goes down, the data is still available.
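The characteristics above (pooled memory, one logical store, replication so a server failure loses nothing) can be sketched as a toy partition-replica cluster. This is a hypothetical illustration, not NCache's implementation: the CacheServer and CacheCluster classes, the hash-based ownership, and the "back up to the next server" rule are all assumptions made for the example.

```python
import hashlib


class CacheServer:
    def __init__(self, name, capacity_mb):
        self.name = name
        self.capacity_mb = capacity_mb
        self.store = {}    # items this server owns
        self.backup = {}   # replicas of items owned by the previous server
        self.alive = True


class CacheCluster:
    """Hypothetical cluster: each key lives on one owner server and is
    copied to the next server in the list as a backup."""

    def __init__(self, servers):
        self.servers = servers

    @property
    def capacity_mb(self):
        # Memory is pooled: logical capacity is the sum over all servers.
        return sum(s.capacity_mb for s in self.servers)

    def _owner_index(self, key):
        digest = hashlib.sha256(key.encode()).digest()
        return int.from_bytes(digest[:4], "big") % len(self.servers)

    def put(self, key, value):
        i = self._owner_index(key)
        self.servers[i].store[key] = value
        # Replicate to the next server so a single failure loses nothing.
        self.servers[(i + 1) % len(self.servers)].backup[key] = value

    def get(self, key):
        i = self._owner_index(key)
        owner = self.servers[i]
        if owner.alive:
            return owner.store.get(key)
        # Owner is down: serve the read from its replica.
        return self.servers[(i + 1) % len(self.servers)].backup.get(key)


cluster = CacheCluster([CacheServer("vm1", 4096), CacheServer("vm2", 4096)])
cluster.put("customer:1", "Alice")
cluster.servers[cluster._owner_index("customer:1")].alive = False
print(cluster.get("customer:1"))  # Alice
```

A real cluster also rebalances data when servers join or leave; this sketch keeps the server list fixed to focus on pooling and replication.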

So, these are some common characteristics of distributed cache.

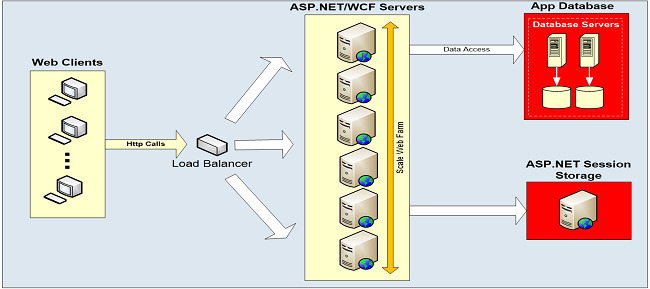

Data Storage Is a Scalability Bottleneck

Typically, your applications, be it app services or microservices, talk to some back-end data source. In Microsoft Azure, you could have a SQL Server instance on the same VNet or on a different VNet, but either way you are still talking to a database server.

That can be slow, and it may not be very scalable. So, it makes sense to introduce a distributed caching layer in Microsoft Azure as well, and I'll talk about the use cases for microservices and app services.

NCache Deployment

This is a typical deployment of NCache.

In Microsoft Azure, you would have VMs that scale horizontally: you create a virtual network, create VMs, and then install NCache on those VMs or use our Azure or AWS images. Then your applications can connect: microservices, API apps, typical IIS-hosted apps on other VMs, or app services can all connect to the cache, and it saves you trips to the back-end data sources. That's the general idea of a typical NCache deployment. I'll revisit this once we move on to the deployment for microservices and Azure app services, because in the cloud this changes slightly.

Three Common Uses of NCache

OKAY! This is the most important slide in this webinar. I want to highlight where exactly you would use a distributed cache in Microsoft Azure services. Typically, we talk about web applications, web services, back-end applications, and Windows services that need to access data from a centralized source and need a very fast repository. That's the typical use case. In Microsoft Azure, you already have a very scalable platform: your applications can scale out linearly, and that's the typical case with web farms and web gardens as well.

So, I've listed a few important use cases for Azure services.

App Data Caching

First of all, you can use it for data caching, and this applies to both Microsoft Azure microservices and app services, especially stateless services. Stateful services have their own partitioning and replicas, but stateless services do not have replicas. So, one important aspect is that you have a stateless application but still want data reliability. In that case, you can put the data in a distributed cache. A distributed cache by design covers replication and high availability, so your data is available even to stateless microservices.

The next benefit applies within a microservice or an app service as well: a web application may talk to a back-end data source such as SQL Server. SQL Server is relatively slow and cannot handle a huge transaction load on its own. A distributed cache, in contrast, can have multiple servers that are scaled out linearly and joined into one logical capacity, and you can add more servers on the fly. In Microsoft Azure, that's very simple to manage: you just spawn a new VM, and as you add more caching servers your capacity keeps growing. So, for the data caching use case, first of all, the cache is in memory, so it's super-fast compared to the database, and second, it's a very scalable platform in Microsoft Azure in comparison to Microsoft SQL Server. Those are the two benefits you get for Microsoft Azure app services and microservices. You just need to introduce NCache API calls and keep adding data as key-value pairs.
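The usual way to apply this is the cache-aside pattern: check the cache first and go to the database only on a miss. Below is a minimal Python sketch; the plain dict stands in for a distributed cache client and load_product_from_db stands in for a SQL Server query, so both are hypothetical and not NCache calls.

```python
import time

cache = {}     # stand-in for a distributed cache client (hypothetical)
db_calls = 0   # counts how often we actually hit the "database"


def load_product_from_db(product_id):
    """Hypothetical slow database query."""
    global db_calls
    db_calls += 1
    time.sleep(0.01)  # simulate the round trip to SQL Server
    return {"id": product_id, "name": f"Product {product_id}"}


def get_product(product_id, ttl_seconds=300):
    key = f"product:{product_id}"
    entry = cache.get(key)
    if entry is not None and entry["expires"] > time.monotonic():
        return entry["value"]                      # cache hit
    value = load_product_from_db(product_id)       # cache miss: query the DB
    cache[key] = {"value": value, "expires": time.monotonic() + ttl_seconds}
    return value


for _ in range(3):
    get_product(7)
print(db_calls)  # 1
```

Only the first request reaches the database; the expiration keeps cached data from going stale indefinitely.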

ASP.NET Specific Caching

The second use case is specific to Microsoft web app services: ASP.NET-specific caching. If you have an app service, it can talk to NCache for sessions as well as for output caching data. It can also use the object caching API, by the way, because the first use case applies to ASP.NET app services too. Typically, your session state is kept either in the same app service instance, which requires sticky-session load balancing, or in a database. Sticky sessions are limiting: you have to use sticky load balancing, which is the default option, so every request from a user goes back to the same instance. The database, on the other hand, is slow, and it's a single point of failure as well.

Using NCache as the session provider for app services is, first of all, very scalable. It's extremely fast, and there is no single point of failure, because sessions are replicated across servers. That's where you get an edge, and why you should consider a distributed cache for your ASP.NET applications. This can also be a multi-site deployment, which I plan to cover today: an application deployed in one site talks to caching servers in the same site, and it can also talk to caching servers across sites. I'll highlight which configurations are needed inside Azure, but that's possible with NCache distributed caching in Microsoft Azure.
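The reason a shared session store removes the need for sticky sessions is that no instance keeps session data of its own. The Python sketch below shows two app instances serving the same user; the dict standing in for the distributed cache and the WebInstance class are hypothetical. In a real ASP.NET app you would configure NCache as the session state provider rather than write this code by hand.

```python
import uuid

# Stand-in for the distributed cache acting as the session store (hypothetical).
shared_session_store = {}


class WebInstance:
    """One app service instance. It keeps no session data locally."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def add_to_cart(self, session_id, item):
        session = self.store.setdefault(session_id, {"cart": []})
        session["cart"].append(item)
        self.store[session_id] = session  # write back, as a remote store requires

    def view_cart(self, session_id):
        return self.store.get(session_id, {"cart": []})["cart"]


instance_a = WebInstance("instance-a", shared_session_store)
instance_b = WebInstance("instance-b", shared_session_store)

sid = str(uuid.uuid4())
instance_a.add_to_cart(sid, "book")   # first request lands on instance A
instance_b.add_to_cart(sid, "pen")    # next request lands on instance B
print(instance_a.view_cart(sid))      # ['book', 'pen']
```

Because either instance can serve any request, the load balancer is free to spread traffic evenly, and a replicated cache keeps the sessions alive if one cache server fails.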

The second part of this use case is output caching. If a web application deployed as an app service has static pages, you can cache the contents of those pages: the entire page output is cached, and you reuse it the next time the same page is requested. That's another benefit. I cover this in our typical ASP.NET performance and scalability webinar, where I talk about four different ways to optimize ASP.NET performance, and the same concept applies to Microsoft Azure app services where a web application is deployed as an app service.

Pub/Sub and Runtime Data Sharing

Now the third important use case, and it's the most important one when it comes to microservices, although it applies to app services as well. Your app services may need data sharing: one app service talking to another, or data shared between two different applications. There are web applications that are different in nature but depend on the same data. For example, one application may produce product catalogs while another depends on that data to generate reports. That use case demands that your applications share data with one another. You could use a database to share data, but that's slow and, in some cases, a single point of failure as well. A distributed cache makes more sense because your applications connect to it in a client-server model: multiple applications can connect to the same caching tier, use the same cache resources, and access the same data.

In Azure microservices, we need to take this to another level. As I've already mentioned, Azure microservices are clustered and can be stateful or stateless. By design, the microservices architecture requires that service instances interact with one another and share data between one another. That's a core requirement of any microservices platform: multiple application instances need to share data, so that data sent by one microservice is available to other microservice instances. Multiple applications can interact along the same lines, but between microservices this is a must-have, and that's why many third-party providers offer data sharing and a pub/sub model as part of their offerings.

So, in Microsoft Azure, if one application writes data to a store and another application or another microservice instance needs that data, you need a platform to handle it. NCache offers that platform, where data is available in a centralized manner. It's an extremely fast repository, and it's extremely scalable. On top of that, multiple microservice instances, whether different services or instances of the same service, can connect to this cache and use its data sharing features, so the data is centrally available and fast to access.
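When several instances of the same service update shared data, they must not overwrite each other's changes. One common approach is optimistic concurrency with item versions, sketched below in Python. SharedStore and its put_if_version method are hypothetical stand-ins for a shared cache, and the threads play the role of microservice instances; this is not NCache's locking or versioning API.

```python
import threading


class SharedStore:
    """Hypothetical stand-in for a cache shared by several service
    instances. Each item carries a version number so concurrent writers
    cannot silently overwrite each other (optimistic concurrency)."""

    def __init__(self):
        self._items = {}
        self._lock = threading.Lock()

    def get(self, key):
        with self._lock:
            return self._items.get(key, (None, 0))  # (value, version)

    def put_if_version(self, key, value, expected_version):
        with self._lock:
            _, current = self._items.get(key, (None, 0))
            if current != expected_version:
                return False  # another instance updated the item first
            self._items[key] = (value, current + 1)
            return True


def increment_stock(store, key, amount):
    # Retry the read-modify-write until it lands on an unchanged version.
    while True:
        value, version = store.get(key)
        if store.put_if_version(key, (value or 0) + amount, version):
            return


store = SharedStore()
workers = [threading.Thread(target=increment_stock, args=(store, "stock:sku1", 1))
           for _ in range(50)]
for w in workers:
    w.start()
for w in workers:
    w.join()
print(store.get("stock:sku1"))  # (50, 50)
```

Without the version check, two instances reading the same value at the same time would each write back value + 1 and one increment would be lost.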

Then we have a topic-based pub/sub model, a messaging platform built on the cache. A message can be data-driven, application-driven, or topic-based: a producer application, say an instance of a microservice, sends a message to the cache, and that message is transmitted to all the recipients on the subscriber end. Subscribers can also be producers in some cases. So, you can use pub/sub messaging between multiple Azure microservice instances, and you can also have event-driven data sharing between instances. On top of that, we have event notifications and a continuous query system: you define a data set with an SQL-like query, and you get data-driven notifications whenever that data set changes. That's the use case where you would consider using a distributed cache inside Microsoft Azure microservices.
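The two messaging ideas above, topic-based pub/sub and continuous-query notifications, can be sketched in-process. MessageBus and QueryWatcher are hypothetical classes, not NCache types, and the Python predicate stands in for the SQL-like criteria a real continuous query would use.

```python
from collections import defaultdict


class MessageBus:
    """Hypothetical in-process stand-in for topic-based pub/sub."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Deliver the message to every subscriber of the topic.
        for callback in self._subscribers[topic]:
            callback(message)


class QueryWatcher:
    """Continuous-query idea: notify when an added or updated item matches
    a predicate (standing in for SQL-like criteria)."""

    def __init__(self, predicate, on_match):
        self.predicate = predicate
        self.on_match = on_match

    def item_changed(self, key, value):
        if self.predicate(value):
            self.on_match(key, value)


bus = MessageBus()
inventory_log, billing_log = [], []
bus.subscribe("orders", inventory_log.append)  # e.g. an inventory microservice
bus.subscribe("orders", billing_log.append)    # e.g. a billing microservice
bus.publish("orders", {"order_id": 1, "total": 250})

alerts = []
watcher = QueryWatcher(lambda order: order["total"] > 100,
                       lambda key, order: alerts.append(key))
watcher.item_changed("order:1", {"total": 250})
watcher.item_changed("order:2", {"total": 40})
print(len(inventory_log), len(billing_log), alerts)  # 1 1 ['order:1']
```

In a distributed setup the bus lives in the cache cluster, so publishers and subscribers can be separate microservice instances on different machines.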

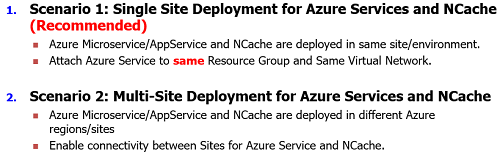

NCache Deployment Scenarios in MS Azure


All right. Now we have two kinds of scenarios. In the first, everything is deployed in a single region, in a single site, within the same resource group, and preferably within the same virtual network. I've already mentioned that NCache is deployed on VMs in Microsoft Azure, whereas your applications can either be VMs accessing the NCache VMs or Azure services, and we'll focus on Azure services today. The VM case is very typical: you spawn a VM, host your application in IIS, and make your application connect to NCache using the NCache APIs or the session store provider. But with services, you need some networking configuration so that your service can talk to NCache.

So, we have a single-site deployment, where your Azure services and NCache are deployed in the same region and the same site. When you deploy your Azure service, you attach it to the same resource group and to the same virtual network. That's the recommended approach: you don't need any intermediary, and there are no extra hops to slow things down. So, that's the recommended scenario for a single site.

The second scenario, which is also very valid, is that you may have multiple sites. We still recommend that your cache and your applications, whether microservices or app services, are always in the same region and able to connect to each other directly. NCache uses TCP/IP, so all communication goes over IP addresses and TCP ports. It needs to be deployed so that your applications are nearby, without extra network hops in between. So, we recommend that your cache is deployed on the same site as your applications, but there could be a scenario where you're using multi-site features: your application may need to talk to a cache on another site, or your caches may need to talk to one another. In that case, we need site-to-site communication for NCache as well.

Those are the two scenarios I'll cover. Scenario 1 is recommended: everything is in a single site, close to your application. In scenario 2, you have multiple sites, and your application on site one talks to the caching servers on site two, or the caching servers talk to each other. For example, with the WAN replication feature, you could have a bridge between your site one and site two caches.
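A bridge between sites is typically asynchronous: a write completes locally at once and is queued for the remote site, which catches up in order. The Python sketch below illustrates only that idea; the SiteCache and Bridge classes and the second region name are hypothetical, and this is not how NCache's WAN replication is implemented internally.

```python
from collections import deque


class SiteCache:
    def __init__(self, site):
        self.site = site
        self.items = {}


class Bridge:
    """Hypothetical asynchronous site-to-site replication: writes apply
    locally right away and are queued for the remote site."""

    def __init__(self, source, target):
        self.source = source
        self.target = target
        self.pending = deque()

    def put(self, key, value):
        self.source.items[key] = value   # the local write completes immediately
        self.pending.append(("put", key, value))

    def remove(self, key):
        self.source.items.pop(key, None)
        self.pending.append(("remove", key, None))

    def flush(self):
        # A real bridge does this in the background; ordering is preserved.
        while self.pending:
            op, key, value = self.pending.popleft()
            if op == "put":
                self.target.items[key] = value
            else:
                self.target.items.pop(key, None)


site1, site2 = SiteCache("West Europe"), SiteCache("North Europe")  # site2 region is hypothetical
bridge = Bridge(site1, site2)
bridge.put("session:abc", {"user": "ron"})
bridge.put("session:xyz", {"user": "adam"})
bridge.remove("session:xyz")
print(site2.items)  # {} until the queue is flushed
bridge.flush()
print(site2.items)  # {'session:abc': {'user': 'ron'}}
```

The trade-off is that the remote site lags briefly behind, which is why applications should still talk to the cache on their own site whenever possible.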

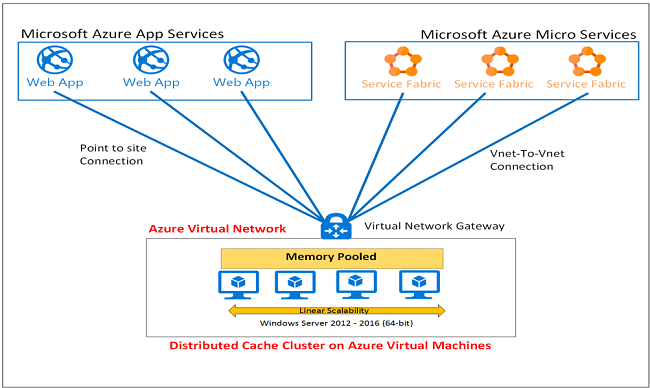

Scenario 1: Single Site Deployment for Azure Services and NCache (Recommended)

So, single site deployment for Azure Services, I'll cover some detailed steps around this. I'll talk about application development, how you introduce NCache calls inside your application and we will use a service project for this as a reference example. Alright. So, this is the diagram that should cover all the details that I plan on covering within this webinar.

I've already mentioned that NCache servers are going to be VMs in Microsoft Azure, so you need to take care of the virtual network that hosts these VMs, and NCache needs to be installed on them. Your web applications, deployed as app services, API apps, or microservice apps, are all service instances. There is no underlying VM you can access, which also means you cannot install NCache on these resources; you need to include it as part of the application resources. There are NuGet packages for that, which we'll highlight. All right! So, we have web apps, API apps, and there could be microservices and other apps as well.

So, this is a typical single-site deployment, where the same Azure virtual network hosts your applications as well as the NCache VMs. What we're really doing is setting up a point-to-site VPN between your web applications and service apps and the NCache servers. That's what the overall networking looks like. Everything is part of the same virtual network. We'll take a service project, add the NCache resources to it, then create the server-side resources for NCache so that our VMs are up and running, and finally come back to the application and attach it to the same virtual network where our NCache VMs exist. That makes it a single-site deployment using the same virtual network as our caching servers.

So, let's go through the steps.

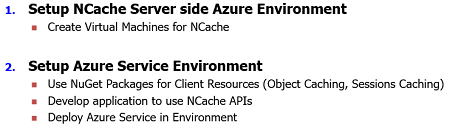

All right! The first step is to have the NCache server-side environment set up, which means setting up Azure virtual machines for NCache. This step is common to all sorts of deployments, whether single-site or multi-site. For a single-site deployment, you just need your NCache servers set up as VMs with NCache installed on them. Let me quickly take you to our Azure portal. If I go to the resource groups and sort them, my first resource group is demo resource group one. Here we have all the VMs: demo VM one and demo VM two. As a matter of fact, I've used the same virtual network for both of these demo machines.

Let me bring it up right here. All right! We have demo VM one, deployed in West Europe, then demo VM two, and then there is a virtual network, demo VM v-net one. If I click on it, I can see that demo VM 1 and demo VM 2 are part of it, with IP addresses 10.4.0.4 and 10.4.0.5. Those are our two NCache VMs. Let me quickly take you to this environment right here.

What I've done is create a VM (a blank Windows Server 2012 or 2016 image would do) and then go to the Alachisoft website. There isn't any special installer for NCache; you just use the existing installer. Go to the download page and download the 30-day trial of NCache. Once I had done that, I installed NCache on demo VM 1 and demo VM 2, and then I created a demo VNet cache as well. The cache creation steps are very simple: go to the demo cache, choose the caching topology, select async, and specify demo VM 1, and demo VM 2 should pick up the IP as is. Yes, it does. So, these servers are able to talk to one another.

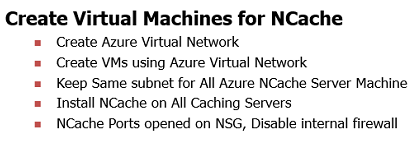

One additional step: if I come back to the presentation, these are the steps needed to set up NCache servers in Microsoft Azure. You create an Azure virtual network, which you may already have in your environment. Then you create VMs on that virtual network, so you could have a dedicated set of VMs for NCache: server one, server two, server three. Make sure you keep them on the same subnet; that's something we recommend. Then install NCache on all those VMs. One other thing: open the NCache ports for communication. There are two network considerations. First, the internal firewall on the VMs should be disabled, and second, the network where these VMs reside should have a network security group that allows the traffic.

For example, the demo VM one network security group already has these ports open. All we need are ports 9800 and 48250, for both inbound and outbound communication. This makes sure that any application using NCache, which connects on port 9800, can reach these caching servers, and that any application trying to manage the cache can reach them as well. For example, there could be another VM managing the cache from a different virtual network within the same resource group; the network security group allows this communication on these ports. That's the consideration you need to have.

I've turned off the firewall on the caching servers themselves, these ports are open, and I've created a cache, so I can see the statistics. I can run a stress testing tool directly on these machines to simulate some load; there's a bit of lag, so please bear with me. So, we have the VNet one cache, and we run the tool and simulate activity on these two caching servers. Yeah! There you go: we have activity coming in, so we're good. That's how easy the server-side setup is. I'm just going to close this down, and now we need to come back to our application and have a look at that.
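Before deploying the application, it can help to confirm from a machine on the same virtual network that the NCache ports are actually reachable through the firewall and the network security group. This is a minimal Python sketch using only the standard library; the server IPs and ports come from the demo, while the function names are hypothetical. Call check_cache_servers() from a VM on the VNet and look for any False entries.

```python
import socket

# Internal IPs of the two demo cache VMs and the ports named in the webinar.
CACHE_SERVERS = ["10.4.0.4", "10.4.0.5"]
NCACHE_PORTS = [9800, 48250]


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


def check_cache_servers(servers=CACHE_SERVERS, ports=NCACHE_PORTS, timeout=2.0):
    """Map each (host, port) pair to whether it accepted a connection."""
    return {(host, port): port_open(host, port, timeout)
            for host in servers
            for port in ports}
```

A plain TCP connect only proves the path is open; it does not check that the cache itself is running, which the NCache management tools show.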

Step 2 for Single-Site Deployment: Deploy Azure Service in Environment

Now that we have the virtual machines configured and a cache cluster created, the next thing I need is a web application that can talk to this cache cluster. Let me quickly come back to my presentation.

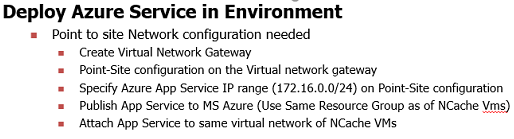

From your application's standpoint, what you need is a point-to-site network configuration between your app service and NCache. I've already done that, but let me highlight it. If you come back to the resource group, demo resource group 1, we have a demo virtual network gateway. We created that gateway, and it has a point-to-site configuration. This virtual network gateway uses demo v-net, the main virtual network for our caching servers. Looking back at this diagram, there's a virtual network gateway that is part of this virtual network, and the application I'll deploy shortly will communicate through it point-to-site, so that it's attached to the same virtual network. That's what Microsoft Azure requires in this setup: for your app service to talk to resources on a virtual network, such as VMs, you need a point-to-site setup.

So, coming back to the demo resource group, if I show you the point-to-site configuration, we have this address range, which is used for our app services. Once an app service is deployed, you click on it and attach it to the corresponding virtual network.
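Azure requires the point-to-site client address pool not to overlap the virtual network's address space, and the cache servers must sit inside that address space. A quick check with Python's standard ipaddress module can catch a bad plan early. The VNet range 10.4.0.0/16 and the pool 172.16.0.0/24 below are assumptions for illustration (only the two server IPs come from the demo); substitute your own values.

```python
import ipaddress

# Hypothetical ranges: replace with your VNet address space and the
# point-to-site client address pool configured on the gateway.
VNET_ADDRESS_SPACE = ipaddress.ip_network("10.4.0.0/16")
P2S_ADDRESS_POOL = ipaddress.ip_network("172.16.0.0/24")
CACHE_SERVER_IPS = [ipaddress.ip_address("10.4.0.4"),
                    ipaddress.ip_address("10.4.0.5")]


def validate_network_plan(vnet, p2s_pool, cache_ips):
    """Return a list of problems; an empty list means the plan looks sane."""
    problems = []
    if vnet.overlaps(p2s_pool):
        problems.append(f"P2S pool {p2s_pool} overlaps VNet {vnet}")
    for ip in cache_ips:
        if ip not in vnet:
            problems.append(f"cache server {ip} is outside VNet {vnet}")
    return problems


print(validate_network_plan(VNET_ADDRESS_SPACE, P2S_ADDRESS_POOL, CACHE_SERVER_IPS))  # []
```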

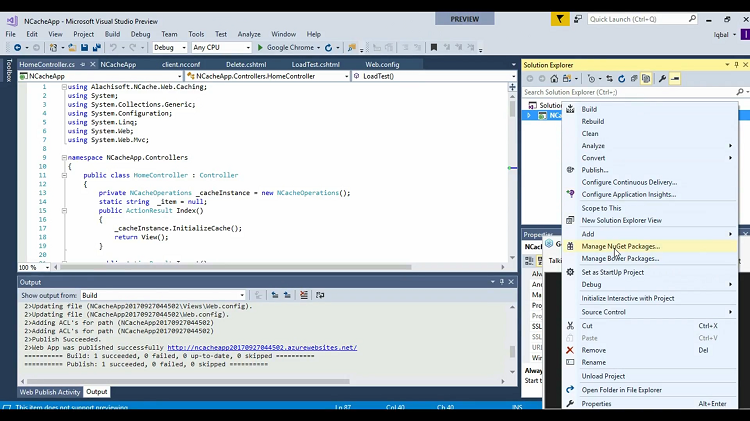

Now I'll come back to my project, which I didn't show earlier. This is a sample application, a service app, and I'm going to show you what's needed. If you right-click on it, you have NuGet packages available for Microsoft Azure.

For example, we have Alachisoft.NCache.SDK. These are available privately from us. They equip your application with all the NCache client-side resources, because there isn't any NCache installation on the client side. In a typical NCache deployment, you install the client and you install the server, and that covers it. But with services, it's an instance, so you don't have access to the underlying VM. In that case you need the NuGet packages, so that everything you need is made part of the application. This is the NuGet package I've already installed. It has added all the NCache client-side libraries and some configs as well. So, we have client.ncconf, which is the main file used to connect to any cache.

For example, I already have settings to connect to the VNet one cache, that's the name, along with the internal IP addresses of the servers. Next, I publish this application, and it should be able to access 10.4.0.4 for VM one and 10.4.0.5 for VM two. So, I need to make sure there's point-to-site communication through which these IP addresses are reachable from my application; only then will it be able to connect to the cache.

Coming back to the application, let me show some cache calls. Within this MVC project, we have our main controller, which holds the cache instance, and it calls InitializeCache. Let me go to this method. This is the initialize method, which calls NCache.InitializeCache, and then we also call cache.Insert to add an item, cache.Delete to delete that item, and cache.Get to get the item back. Then there's another method, load test, which loads a thousand items into the cache and then retrieves them as well. That covers the main logic of our application.
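The controller's flow (initialize, insert, get, delete, then a load test of a thousand items) is summarized below as a Python sketch. DemoCache is a hypothetical in-memory stand-in that only mirrors the sequence of operations; it is not the NCache client, and the cache name "vnet1cache" is an assumption. In the real MVC app these calls are NCache.InitializeCache, cache.Insert, cache.Get and cache.Delete.

```python
class DemoCache:
    """Hypothetical stand-in mirroring the demo controller's operations.
    NOT the NCache client: it just keeps items in a local dict."""

    def __init__(self, cache_name):
        self.cache_name = cache_name
        self._items = {}

    def insert(self, key, value):
        self._items[key] = value       # add, or overwrite an existing key

    def get(self, key):
        return self._items.get(key)    # None when the key is missing

    def delete(self, key):
        self._items.pop(key, None)

    def count(self):
        return len(self._items)


def load_test(cache, item_count=1000):
    """Load item_count items, then read them all back; returns the hit count."""
    for i in range(item_count):
        cache.insert(f"Item:{i}", f"Value {i}")
    return sum(1 for i in range(item_count)
               if cache.get(f"Item:{i}") == f"Value {i}")


cache = DemoCache("vnet1cache")  # cache name is an assumption
cache.insert("Customer:1", "Ron")
print(cache.get("Customer:1"))   # Ron
cache.delete("Customer:1")
print(cache.get("Customer:1"))   # None
print(load_test(cache))          # 1000
```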

There are some views that I'll show you. We have Delete, Get, Index and Load Test, and these are simply called through our application. These are basic NCache calls. What I'll do next is deploy this to Microsoft Azure. I've already set up all the resource groups and the networking piece, right here. But what you really need, if it's a Microsoft Azure app, is to choose Create New and give your web app a name based on your subscription. Let's wait for a moment so that it picks up the subscription. There you go. It automatically picked Visual Studio Enterprise, and for the resource group, I plan to use demo resource group one, since I'm using the single-site deployment, so the app is part of the same resource group. Then I pick the service plan and choose Create. You could also choose New here to create a new plan and set its location, which is West Europe in this case. Choose OK on this, then choose Create, and it will automatically get started.

Since I have already done this, what I do next is simply go to my web.config and make sure that it's using the V-net one cache, and inside client.ncconf we have the V-net one cache as well. This application is going to be deployed to the same site and the same resource group, and then I'll attach it to the virtual network that my NCache server VMs are part of.
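Because the cache name lives in web.config while the server list lives in client.ncconf, a mismatch between the two is an easy mistake. Here is a minimal Python sketch that checks the name set in web.config has a matching entry in client.ncconf. The appSettings key `CacheName` and the cache id `vnet1cache` are hypothetical, and the client.ncconf layout is a simplified assumption.

```python
import xml.etree.ElementTree as ET

# Hypothetical web.config fragment; the "CacheName" key is an assumption.
WEB_CONFIG = """\
<configuration>
  <appSettings>
    <add key="CacheName" value="vnet1cache"/>
  </appSettings>
</configuration>
"""

# Simplified client.ncconf (assumed layout).
CLIENT_NCCONF = """\
<configuration>
  <cache id="vnet1cache">
    <server name="10.4.0.4"/>
    <server name="10.4.0.5"/>
  </cache>
</configuration>
"""


def app_setting(web_config_xml, key):
    """Return the value of an <add key=... value=...> entry, or None if absent."""
    for add in ET.fromstring(web_config_xml).iter("add"):
        if add.get("key") == key:
            return add.get("value")
    return None


def cache_ids(client_ncconf_xml):
    """Return the set of cache ids declared in client.ncconf."""
    return {c.get("id") for c in ET.fromstring(client_ncconf_xml).findall("cache")}


def cache_name_is_configured(web_config_xml, client_ncconf_xml, key="CacheName"):
    """True when the cache named in web.config has an entry in client.ncconf."""
    return app_setting(web_config_xml, key) in cache_ids(client_ncconf_xml)


if __name__ == "__main__":
    print(cache_name_is_configured(WEB_CONFIG, CLIENT_NCCONF))  # prints True
```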

Coming back to Microsoft Azure, if I go to resource group one, we should have this application somewhere. There you go! Once it's deployed, you click on it and go to the Networking section of this application, and you can see it's already connected to demo v-net one, because I've already done this. In your case, you first make a point-to-site connection on the virtual network gateway, which here is in demo resource group one. Let me bring that up, right here. So, once you create a virtual network and a point-to-site connection, and this app service IP is exposed, then after deploying your app service you need to attach it to the virtual network. This is the extra step that's needed, right here. Now, I hope my application has loaded. I'm waiting for it, yes!

Now, on the server end, I'm just going to clear the contents, and I'll insert an item. It was successfully added to the cache, and you can see one item added. I'll quickly get it. I'm sorry I can't really show you the counters, but it did indeed retrieve it, and then I can delete it. And since it's part of the same virtual network, it's a lot faster in comparison.

Now I'll simulate a load test. I'll add a thousand items, and you should see some activity. There you go: requests per second are showing up as those items are added, you can see about 600 items here and about 400 items here, and now it's retrieving those items. So, that's a typical deployment of your services, be it microservices, app services, API apps, or any application deployed in a service model. You just need to introduce the NuGet packages, create a point-to-site connection to the NCache VMs through your virtual network gateway, and then attach your application instance to the virtual network. These are all Microsoft Azure networking configurations; NCache just needs its private IP addresses to be exposed over TCP/IP. That's all we need.
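In the load test, the thousand items end up split across the two servers rather than copied in full to each. The sketch below is a simplified model of hash-based partitioning that shows how such a split arises: each key is hashed (crc32 here) into one of a fixed number of buckets, and buckets are assigned to servers round-robin. The bucket count, hash function and ownership rule are all assumptions for illustration; this is not NCache's actual distribution map, which the cluster computes itself.

```python
import zlib
from collections import Counter

BUCKET_COUNT = 1000  # assumed number of hash buckets, for illustration only


def owner_of(key, servers, bucket_count=BUCKET_COUNT):
    """Map a key to a bucket with a stable hash, then the bucket to a server."""
    bucket = zlib.crc32(key.encode("utf-8")) % bucket_count
    return servers[bucket % len(servers)]  # simple round-robin bucket ownership


def distribution(keys, servers):
    """Count how many keys land on each server."""
    counts = Counter(owner_of(k, servers) for k in keys)
    return {s: counts.get(s, 0) for s in servers}


if __name__ == "__main__":
    keys = [f"Item:{i}" for i in range(1000)]
    print(distribution(keys, ["10.4.0.4", "10.4.0.5"]))
```

Because placement depends on each key's hash, the per-server counts are close to, but generally not exactly, an even split.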

Scenario 2: Multi-Site Deployment for Azure Services and NCache

So, next, since we have less time available, I'll cover the multi-site scenario in the last 10 minutes. The configurations are very similar.

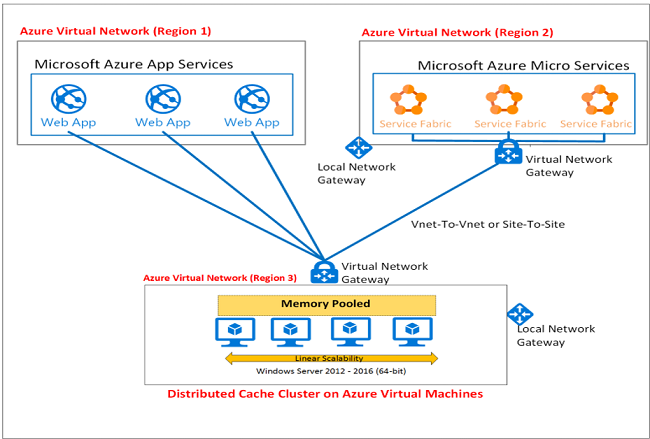

We have our web apps, microservices or app services deployed on one Azure virtual network, or one site, and the NCache VMs on another site. For that, what we really need is a local network gateway here and a virtual network gateway here, and then a site-to-site connection between the two. Again, these are all Microsoft Azure settings that equip two sites to connect with one another using a site-to-site connection; NCache utilizes those, and internal IPs are mapped on each side. So, your NCache server internal IPs are mapped onto your application services' virtual network, and vice versa.
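For the internal IPs to be routable across a site-to-site connection, the two sides' address spaces must not overlap, and the NCache server IPs the client uses must fall inside the remote site's address space. Here is a minimal Python sketch of that pre-flight check using the standard `ipaddress` module; the address spaces and server IPs in the usage example are hypothetical.

```python
import ipaddress


def check_site_to_site(local_space, remote_space, remote_cache_ips):
    """Return a list of problems for a site-to-site setup (empty list means OK)."""
    local_net = ipaddress.ip_network(local_space)
    remote_net = ipaddress.ip_network(remote_space)
    problems = []
    if local_net.overlaps(remote_net):
        problems.append(f"address spaces overlap: {local_net} and {remote_net}")
    for ip in remote_cache_ips:
        if ipaddress.ip_address(ip) not in remote_net:
            problems.append(f"cache server {ip} is outside {remote_net}")
    return problems


if __name__ == "__main__":
    # Hypothetical address spaces: app-service VNet and NCache VNet.
    print(check_site_to_site("10.4.0.0/16", "10.5.0.0/16", ["10.5.0.4", "10.5.0.5"]))  # prints []
```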

And, for that, I'll quickly show you the configurations. If I bring you back to resource groups, we have demo resource group two, which holds another virtual network, demo v-net two, right here, and I have two boxes on this virtual network as well, demo VM 3 and demo VM 4. This environment right here is 10.4.0.4, on another virtual network. I'm just using another virtual network as an analogy for another site, but it could be truly cross-site: another data center in another region in Microsoft Azure.

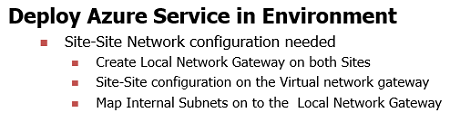

Steps for Multi-Site Deployment: Deploy Azure Service in Environment

So, what you really need is to keep the same settings for this as I already explained.

Keep the VMs on the same virtual network and the same subnet, create a cache, and start that cache. Now your application needs to connect to a cache that is cross-site, and for that you need site-to-site communication. If I come back right here to this demo resource group, we have a demo virtual network and a demo virtual network gateway; there you go, virtual network gateway 2. That's the gateway on the second site. If we go to Connections, we have the demo 2 site-to-site connection, which connects to the demo 2 local network gateway. That's something I've configured, and it enables virtual network one to connect to virtual network two across sites. That's exactly what we've done.

I'll quickly show you the NCache application; nothing changes here. You just need to change the name of the cache, and you need the IP addresses of the caching servers, which I have as part of the setup. So, it's the V-net two cache now, and I'll use the same application to connect to it. I just need to publish it one more time, and notice that I still publish it in demo resource group one, whereas our virtual machines are in demo resource group two. And this is VM 3, right here, one of our two caching servers. With the site-to-site connection already configured, I'll just publish this one more time, and the same application will now go across the network, across sites, from virtual network 1 to virtual network 2, and will be able to connect to this cache. I'll wait for it to be published, and then I'll come back right here and show you the networking piece one more time.
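Since only the cache name and server addresses change between the two scenarios, retargeting the client config can be done mechanically. Below is a minimal Python sketch that rewrites a client.ncconf-style file to point at a different cache and server list. It assumes the simplified layout of a single `<cache>` element with `<server name="..."/>` children; the names `vnet1cache`/`vnet2cache` and the 10.5.0.x addresses are hypothetical.

```python
import xml.etree.ElementTree as ET

# Simplified client.ncconf for the single-site setup (assumed layout).
SITE1_CONFIG = """\
<configuration>
  <cache id="vnet1cache">
    <server name="10.4.0.4"/>
    <server name="10.4.0.5"/>
  </cache>
</configuration>
"""


def retarget_client_config(xml_text, new_cache_id, new_servers):
    """Return config text pointing at a different cache id and server list.

    Other attributes on <cache> are kept; only the id and <server> children change.
    """
    root = ET.fromstring(xml_text)
    cache = root.find("cache")
    cache.set("id", new_cache_id)
    for srv in cache.findall("server"):
        cache.remove(srv)
    for ip in new_servers:
        ET.SubElement(cache, "server", name=ip)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical cache name and server IPs for the second site.
    print(retarget_client_config(SITE1_CONFIG, "vnet2cache", ["10.5.0.4", "10.5.0.5"]))
```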

So, what you really need is the same setup for the virtual machines: keep the same virtual network and the same subnet, spin up the VMs, install NCache on them, disable the firewall, and set up the network security group so that the NCache ports are open. Then you need a site-to-site connection between your application services and the NCache servers. If you already have a virtual network your app services are attached to, you just need to make sure that virtual network 1 is able to talk to virtual network 2 across sites, and this is exactly what I've done in Microsoft Azure as well. Publishing was successful. Let me close this down and insert an item. Let's see if the item has been added; there you go. So, now the item has been added, and it's talking across sites, from one site to the other. If I get this item, it's retrieved, and if I delete it, the item goes away from demo VM 3; no items are there. Similarly, I'll simulate a load test in the same environment, just to generate some activity on it.
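Of the steps listed, opening the NCache ports in the network security group is easy to get wrong. The Python sketch below checks a list of inbound rules for the required ports. The rule dictionaries are a hypothetical, simplified shape loosely modeled on NSG rule properties (direction, access, protocol, destination port range), and it ignores rule priority and Deny rules. The ports 9800 (client) and 8250 (management) are assumptions based on common NCache defaults; confirm them against your installation.

```python
# Assumed NCache ports (client 9800, management 8250); confirm for your install.
REQUIRED_PORTS = {9800, 8250}


def port_in_range(port, port_range):
    """port_range is '*', a single port like '9800', or a range like '7800-7900'."""
    if port_range == "*":
        return True
    if "-" in port_range:
        low, high = (int(p) for p in port_range.split("-"))
        return low <= port <= high
    return port == int(port_range)


def missing_ports(rules, required=REQUIRED_PORTS):
    """Return required ports not opened by any inbound Allow rule for TCP.

    Simplification: rule priority and Deny rules are not evaluated.
    """
    open_ports = set()
    for rule in rules:
        if rule["direction"] != "Inbound" or rule["access"] != "Allow":
            continue
        if rule["protocol"] not in ("Tcp", "*"):
            continue
        open_ports |= {p for p in required if port_in_range(p, rule["port_range"])}
    return sorted(required - open_ports)


if __name__ == "__main__":
    rules = [{"direction": "Inbound", "access": "Allow", "protocol": "Tcp", "port_range": "9800"}]
    print(missing_ports(rules))  # prints [8250]
```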

So, now my site 1 app services are talking to the site 2 cache service; they're going across sites. It's not a recommended scenario, because WAN latency may become part of every call. So, we recommend keeping everything in the same site, but there could be scenarios that need it, for example NCache's multi-site sessions feature or its WAN replication feature. It could be on-premises applications talking to Azure, or Azure applications talking to on-premises. So, these are the requirements. Our load test is now complete as well, and it executed successfully.
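To put rough numbers on the WAN latency point: when cache calls are made one after another, each call pays a full network round trip, so total time grows linearly with the round-trip time. The sketch below does that arithmetic; the 1 ms and 30 ms round-trip times are hypothetical values chosen for illustration, not measurements from this demo.

```python
def sequential_call_time_ms(calls, round_trip_ms, server_time_ms=0.0):
    """Time for calls issued one after another, each paying a full round trip."""
    return calls * (round_trip_ms + server_time_ms)


if __name__ == "__main__":
    # Hypothetical round-trip times, for illustration only.
    same_site_rtt_ms = 1.0
    cross_site_rtt_ms = 30.0
    print(sequential_call_time_ms(1000, same_site_rtt_ms))  # prints 1000.0
    print(sequential_call_time_ms(1000, cross_site_rtt_ms))  # prints 30000.0
```

Under these assumed numbers, the same thousand-item load test takes thirty times longer across sites, which is why keeping the application and the cache in the same site is the recommended setup.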

So, that covers our Azure deployment.

Distributed Caching Options for .NET

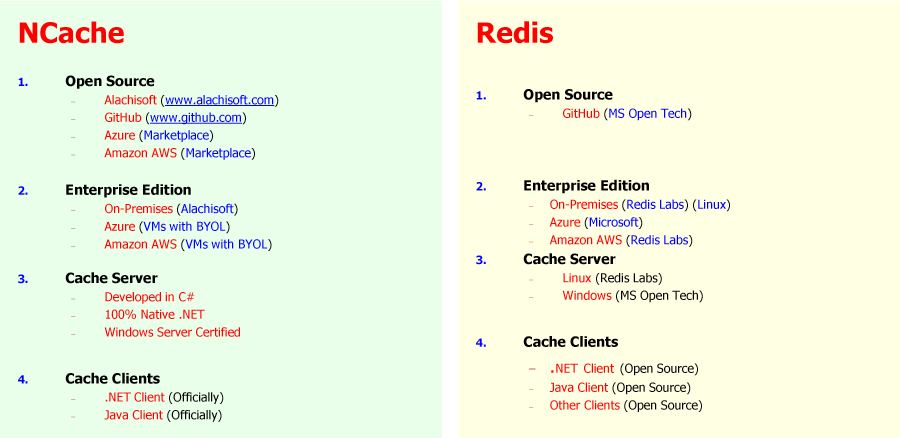

There are a few things I would like to mention at this point, and there is a Redis comparison as well.

Since we're talking about Azure, we'll definitely talk about Redis, and that comparison is available on our website. NCache is 100% .NET; it's developed in C#, and it's fully supported for .NET and Java. In comparison, Redis in Azure runs on Linux behind the scenes, and you don't have control over the VMs, whereas with NCache you have full control over the VMs, and you can run server-side code on NCache as well. The Microsoft Windows version of Redis is not that stable; it's the Linux version that is used behind the scenes and then provided as a service. NCache can also be used with Docker: if you plan to run NCache server-side in Docker containers, you can do that.

I'll also mention some details regarding deployment options. You just install the NCache server portion; you get an Azure release from us. It's a server-only release, and then you need the NuGet packages to equip your applications with NCache, which you can also get from our support or sales team. These are not publicly available. That's intentional, but you just need to request them, and we'll provide you the release for Azure as well as the NuGet packages. After that, it's just the networking piece: a single-site or a multi-site setup. Typically, we recommend single-site, in which case you need point-to-site communication; for multi-site, you need site-to-site communication.

So, that completes our presentation.