Five ASP.NET Performance Boosters

Recorded webinar

By Ron Hussain and Nick Zulfiqar

Learn how to boost ASP.NET performance and scalability with a distributed .NET cache. Watch this webinar to learn about five ASP.NET performance and scalability bottlenecks and how to use an in-memory distributed cache to effectively resolve them.

This webinar is co-delivered by our Senior Solutions Architect and Regional Sales Director. Join us to learn about:

- ASP.NET Application Data Caching

- ASP.NET Session State Caching

- ASP.NET SignalR Backplane using Distributed Cache

- ASP.NET View State Caching

- ASP.NET Output Caching

Today we're going to be talking about five ways to boost your ASP.NET application performance and scalability. It's a very hot topic; I would say it's something that is high in demand, so we're glad to bring this to you. Ron is going to talk about that in a minute. Also, if you have any questions during this presentation, feel free to type them in and I'll bring them to Ron's attention.

So, Ron, why don't you get started?

Thanks, Nick. Hi everybody, my name is Ron and I'll be your presenter for today's webinar. As Nick suggested, the topic we chose today is five ASP.NET performance boosters, so we'll go through five different features. Initially, I will talk about five different problems that you would typically see within an ASP.NET web application. These are day-to-day issues that actually slow down your applications and degrade their performance. Scalability is another aspect where we'll talk about these bottlenecks, and then I'll talk about different solutions with the help of a distributed caching system: how to resolve those issues within your ASP.NET applications. So, that's what we have lined up today. I will cover different features, I have sample applications, and I'll show you everything in action, including how to set up and get started with this. So, it's going to be a pretty interactive, pretty hands-on webinar and, as Nick mentioned, if there are any questions please feel free to jump in and I'll be very happy to answer them. Alright, assuming that everything looks good, I'm going to get started.

ASP.NET is Popular for High Traffic Web Apps

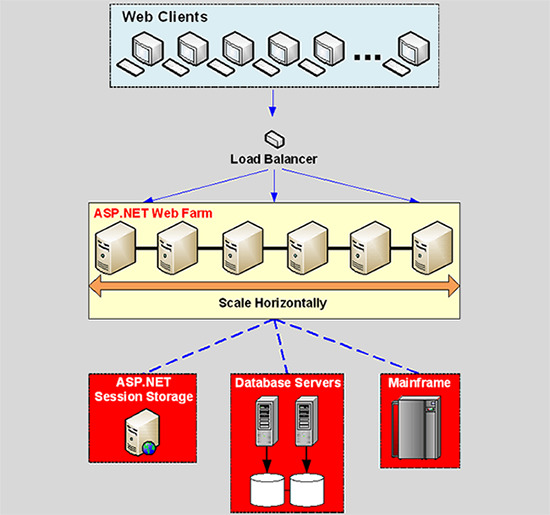

So, first of all, I'll talk about the ASP.NET platform in general. ASP.NET is a very popular platform for web applications. We see a lot of web deployments and it's increasing in popularity. The nice thing about the ASP.NET platform is that, depending on the usage pattern, it scales out pretty nicely: you can handle thousands of concurrent users and their associated requests without having to change anything inside the application architecture. You can create a web farm or a web garden, so it gives you a lot of scalability options. You can put a load balancer in front, route requests between different web servers, and achieve linear, horizontal scalability out of the ASP.NET web farm.

The load balancer could use sticky load balancing or equal load balancing, depending upon your architecture and the stateful nature of your web servers or app servers, and then you can scale out as you need to.

So, this is a typical web farm deployment for ASP.NET applications. You have N number of clients going through the load balancer to a set of web servers within a web farm, and then you have ASP.NET session storage, which is one kind of data that you may see within your ASP.NET web applications. Then there are database servers, relational or NoSQL databases, where you may be dealing with a lot of data back and forth, and then there could be any other back-end data system, such as a mainframe or a file system, that your applications interact with. So, this is pretty popular, pretty scalable, pretty fast, and a lot of active deployments are using this platform.

The Problem: Scalability Bottlenecks

So, let's talk about the scalability bottlenecks within ASP.NET. Where exactly is the problem if the platform scales out so nicely? First, let's quickly define what scalability is. Scalability is an ability within the application architecture, or within the environment where your application is deployed, to increase the number of requests per second, or concurrent requests, without compromising on performance. Say you have a certain latency under five users. Can you maintain that latency under 5,000 users or 500,000 users? It's okay not to improve performance, but you should at least not degrade it. That ability is called scalability: you get maximum throughput out of the system, more and more request load is handled, and your latency does not grow as user load grows. So, it's essentially performance under extreme load.

Now, although the ASP.NET web farm is very scalable and would not give you scalability problems by itself, applications can still slow down under peak loads, primarily because of back-end data sources. So, if your application slows down or chokes under a huge amount of load, then that application is a candidate for scalability; it needs a scalable architecture within itself. Why would you see situations where applications slow down under peak load? There could be different data storage related bottlenecks or other bottlenecks within the application. So, from this point onwards, we'll talk about five different bottlenecks within an ASP.NET application that limit your ability to scale out.

Two Data Storage Bottlenecks

Alright. So, first of all, we have two data storage bottlenecks.

Database cannot Scale

We have the application database, which typically cannot scale. You would have a relational database in the form of SQL Server, Oracle or any other popular relational data source. It's very good for storage, but it's not very good at handling a huge transaction load. It tends to choke and, in some cases, it actually gives you time-out errors, or at least it slows down your performance. You cannot add more database servers on the fly, so it's going to be a single source. It's also a single point of failure in some cases if you don't have replication. And the most pressing issue here is that it's not very fast: it's slow under normal scenarios, and under peak load the situation worsens because it's not able to accommodate the increased load and slows things down further. So, that's our first bottleneck.

ASP.NET Session State Storage

The second bottleneck is around ASP.NET session state storage. Session state is a very important kind of data. It holds user information. For example, in an e-commerce application you may be maintaining user information or the shopping cart. It could be a booking system, a ticketing system, or a financial system. So, it could be any sort of front-end system where users log in and keep their very important data within the session object.

Now, these are the three modes that the ASP.NET platform offers. We have InProc, where everything gets stored inside the worker process. Everything sits inside the application process, so your worker processes are not stateless. The HTTP protocol itself is stateless, but in this case your worker processes host all the user data themselves. Then we have StateServer as the second option, and then we have SQL Server. So, let's talk about these conventional session state storage options and their bottlenecks.

First of all, InProc. It's fast because it's in memory and there's no serialization or deserialization. On the downside, first of all, you cannot handle a web garden scenario. On one web server you can only have a single worker process for a given application, because that's where your session data exists. If the next request goes to another worker process, you don't have that session data anymore; it has to be the same worker process. So technically it's not possible to run a web garden with InProc session management, and that's one limiting factor here.

Secondly, you need the sticky session bit enabled on the load balancer. So, first of all, you have limited your performance, scalability and resources on the web server by having only a single process for an application. Secondly, subsequent requests always have to go to the same web server where the initial request got served. That is how sticky session load balancing works. It gets the job done, but the main issue is that one web server could have a lot of active users while other web servers sit idle because their users have already logged out. Since users are sticky in nature, they never go to a free server; they always go to the server where their data exists. So, that's another problem: you have to have sticky session load balancing with InProc. Most importantly, if your worker process gets recycled, and based on our experience ASP.NET worker processes do get recycled quite a lot, you lose all the data. The same is true for maintenance-related tasks: if you have to bring one web server down and bring another one up, you lose all the data as a result. So, that sums up all the bottlenecks that you would see with InProc session management in ASP.NET. It's clearly not an option, and it's also not very scalable: once you hit the capacity of one server, sticky session load balancing doesn't really help.
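
To make the InProc limitation concrete, here is a minimal Python sketch, not ASP.NET code. The `WebServer` class, the session IDs and the `sticky` routing function are all hypothetical names made up for illustration. It shows why equal (round-robin) load balancing loses in-process session data, and why sticky routing is needed to get it back.

```python
from itertools import cycle


class WebServer:
    """Hypothetical web server that keeps sessions in its own process memory."""

    def __init__(self, name):
        self.name = name
        self.sessions = {}  # in-process session store, lost if the process recycles

    def handle(self, session_id, item=None):
        # Create the session on first use, optionally add an item to the cart.
        cart = self.sessions.setdefault(session_id, [])
        if item is not None:
            cart.append(item)
        return list(cart)


servers = [WebServer("web1"), WebServer("web2")]

# Equal load balancing: consecutive requests land on different servers.
round_robin = cycle(servers)
next(round_robin).handle("user-42", "book")       # cart is stored on web1
cart_seen = next(round_robin).handle("user-42")   # web2 knows nothing about it


def sticky(session_id):
    """Sticky routing: the same session ID always maps to the same server."""
    return servers[sum(session_id.encode()) % len(servers)]


sticky("user-7").handle("user-7", "pen")
sticky_cart = sticky("user-7").handle("user-7")

print(cart_seen)    # empty cart: the session did not follow the request
print(sticky_cart)  # cart survives only because routing was pinned
```

The sticky variant works, but it is exactly the trade-off described above: the load balancer can no longer move that user to an idle server.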

The second option is StateServer. It's slightly better than InProc because it takes data out of your application process and puts it inside a state service, which could be on a remote box or on one of your web servers; it's entirely up to you. But the problem is that it's not scalable. It's a single source: you can scale up on that server, but scaling out is not an option. It's always going to be a single server, one service hosting all your session data and managing all the request load as well. If your request load grows, there are no options to add more resources, so eventually your state service can choke. For example, if you have hundreds and thousands of users, their associated requests can run into the millions, and that's a pretty heavy load. StateServer would not scale out and would not be able to cope with the increasing load. It's also a single point of failure. If StateServer itself goes down, you lose all your session data, and session data is a very important kind of data. You wouldn't want to lose sessions while a user is about to purchase something or make a decision, because that is going to impact the business.

The third option is SQL Server. SQL Server is again out-of-process session management, but again it's slow and it's not scalable. You cannot add more SQL Servers, and it's not meant to handle that kind of transactional load, so you end up with the same problem that we discussed for the application database. That problem remains the same for data caching as well as for session caching. So, session state is not going to be optimized with the default options that the ASP.NET platform presents, and this is primarily because of the data sources it offers: InProc, StateServer and SQL Server.

I hope this has covered the basic details around these bottlenecks. Of course, we'll talk about the solution, which is distributed caching and its features in comparison, and about how to take care of these issues one by one. I want to list all the problems first and then go through the solutions. This diagram illustrates the same.

We have the database as a data storage bottleneck, and then we have ASP.NET session storage, whether InProc or a database acts as the session manager, and that is also clearly a bottleneck. In most cases these are single sources; they're not scalable, they're not very reliable, and they're slow in general.

ASP.NET View State Bottleneck

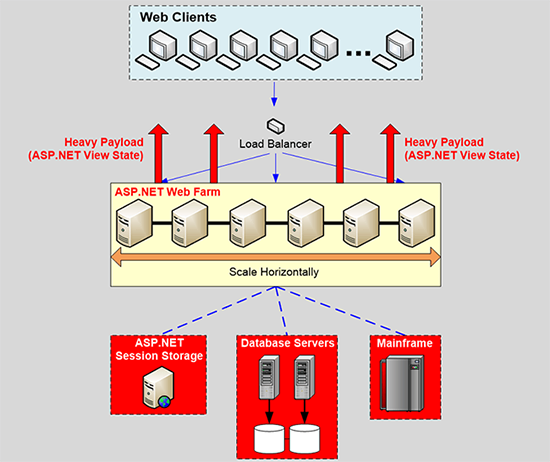

Now, the third important bottleneck is ASP.NET view state, which applies to ASP.NET web forms. I'm pretty sure everybody knows what view state is, but for those who don't: view state is client-side state management. It's a packet that holds the state of the controls and widgets that you have on your web forms. It gets constructed on the server end and becomes part of the response packet that goes back to the browser, which is where it's stored. It's never really used on the browser end, and it's brought back to the server as part of the request packet when you post back on that page. So, it gets bundled with the request and response packets. One pressing issue with view state is that it's generally very heavy; it can be hundreds of kilobytes in size. Think of a scenario where you have a huge transaction load. You have millions of transactions, and each request-response packet for the ASP.NET web application has view state as part of it. If you have hundreds of kilobytes of view state per request and millions of transactions, it is going to consume a lot of bandwidth and, in general, slow down your page responses, because you're moving a lot of heavy data back and forth.

So, that's another bottleneck. It eats up your bandwidth, your cost increases significantly, and it slows down your page response times, because view state is generally heavy and you're dealing with a heavier payload for requests and responses. This bottleneck is part of ASP.NET web forms. If you have an ASP.NET web forms application, there's no getting away from this problem; by default, you have to deal with a lot of view state. This diagram covers it: view state is client-side state management that gets constructed on the server end, goes back to the browser, and is brought back to the server on your postbacks. The request and response packets are heavy, they eat up a lot of bandwidth, and the heavy payload slows things down as well.
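
As a rough back-of-the-envelope illustration of the bandwidth cost, the Python sketch below multiplies view state size by request volume. The figures of 100 KB and one million requests are illustrative assumptions, not measurements, and the function name is made up. It also assumes every request is a postback that carries the view state in both the response and the following request, so it gives an upper bound.

```python
def viewstate_traffic_gb(viewstate_kb, requests, trips_per_request=2):
    """Bandwidth spent on view state alone, in gigabytes (1 GB = 1024 * 1024 KB).

    trips_per_request=2 because the view state travels to the browser in the
    response and comes back to the server in the postback request.
    """
    return viewstate_kb * requests * trips_per_request / (1024 * 1024)


# Assumed workload: 100 KB of view state per page, one million requests.
daily_gb = viewstate_traffic_gb(viewstate_kb=100, requests=1_000_000)
print(f"{daily_gb:.1f} GB of traffic is view state alone")
```

Under these assumptions the view state accounts for roughly 190 GB of traffic per million requests, none of which the browser actually uses.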

Extra Page Execution Bottleneck

Now, the fourth bottleneck, and this is the second to last, is the extra page execution bottleneck. This is true for ASP.NET web forms as well as for ASP.NET MVC web applications. There are scenarios where either the entire page output is the same or portions within a dynamic page are the same, so you're dealing with static content within the application very frequently. The page output does not change very often, but you're still executing those requests. A request may involve some back-end databases: you fetch data from the back-end data sources, read that data, make it meaningful by going through the business logic layer, the data access layer and all the good stuff, and after that you render a response and send it back to the end user in the browser. Even when the content is not changing, you have to go through the same cycle again and again. The page executes regardless of whether it has changed. This increases your infrastructure cost: extra CPU, extra memory, extra database resources. It can hit capacity on the web server, and it can also hit capacity on the database side. In general, it wastes a lot of expensive CPU and other resources on executing something that has already been executed. So, that's another bottleneck, and we'll talk about how to take care of it with the help of page output caching. By the way, this diagram shows page execution on static output, with the databases dealing with a lot of requests as well. So, this covers our fourth bottleneck.
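
The idea behind page output caching can be sketched in a few lines of Python. This is a conceptual sketch, not the ASP.NET output cache API: the `OutputCache` class, the `render_catalog` function and the 60-second lifetime are hypothetical names and values chosen for illustration. The rendered output is stored by URL, and the expensive page execution runs only when no fresh copy exists.

```python
import time


class OutputCache:
    """Hypothetical output cache: stores rendered pages by URL for a fixed time."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = {}  # url -> (expires_at, html)

    def get_or_render(self, url, render):
        now = self.clock()
        entry = self._entries.get(url)
        if entry is not None and entry[0] > now:
            return entry[1]              # cache hit: skip page execution
        html = render(url)               # cache miss: execute the page once
        self._entries[url] = (now + self.ttl, html)
        return html


executions = []  # records every real page execution


def render_catalog(url):
    # Stand-in for the business logic, data access and rendering work.
    executions.append(url)
    return f"<html>catalog for {url}</html>"


cache = OutputCache(ttl_seconds=60)
pages = [cache.get_or_render("/catalog", render_catalog) for _ in range(1000)]
print(len(executions))  # the page body ran once for 1000 requests
```

In a web farm the cached output would need to live in a store shared by all web servers, which is where a distributed cache comes in.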

ASP.NET SignalR Backplane Bottleneck

And the fifth bottleneck is the SignalR backplane. Now, this is a very specific use case; you may or may not have SignalR. For those of you who are familiar with SignalR and have set up a backplane: a backplane is a common message bus to which your web servers send all their messages instead of pushing them directly to the client browsers. There could be a scenario where some clients are connected to other web servers. So, messages are just broadcast to the backplane, the backplane in turn broadcasts the SignalR messages to all the web servers, and they in turn broadcast to all their connected clients. So, with WebSockets, an ASP.NET SignalR backplane is a pretty common setup if you have a web farm.
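
The message flow through a backplane can be sketched as a simple publish/subscribe loop. This Python sketch is conceptual and does not use the SignalR API; `Backplane`, `HubServer` and the client names are hypothetical. It shows a message that originates on one web server reaching clients connected to a different one.

```python
class Backplane:
    """Hypothetical common message bus shared by all web servers."""

    def __init__(self):
        self.servers = []

    def subscribe(self, server):
        self.servers.append(server)

    def publish(self, message):
        # Fan the message out to every web server in the farm.
        for server in self.servers:
            server.deliver(message)


class HubServer:
    """Hypothetical web server holding its own set of connected clients."""

    def __init__(self, name, backplane):
        self.name = name
        self.backplane = backplane
        self.clients = {}  # client id -> list of messages pushed to that client
        backplane.subscribe(self)

    def connect(self, client_id):
        self.clients[client_id] = []

    def send_to_all(self, message):
        # Do not push to local clients directly; go through the backplane.
        self.backplane.publish(message)

    def deliver(self, message):
        for inbox in self.clients.values():
            inbox.append(message)


bus = Backplane()
web1, web2 = HubServer("web1", bus), HubServer("web2", bus)
web1.connect("alice")
web2.connect("bob")
web1.send_to_all("price updated")
print(web2.clients["bob"])  # bob is on web2 but still receives web1's message
```

Every message passes through the bus, which is why the backplane's latency, throughput and reliability matter so much.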

Now, NCache or any other distributed caching product can be plugged in as a SignalR backplane. It can also be a database or a message bus, but the idea here is that the backplane should not have performance or throughput issues. It should perform very well, with low latency and high throughput, and at the same time it should be very reliable. If it goes down and you lose all the SignalR messages, that would impact the business as well.

Databases are slow and not very scalable. Redis is an option, but it's not native .NET. So, you need something which is very scalable, very fast and also very reliable. That's another problem within an ASP.NET application if you are using a SignalR backplane. So, this completes our five bottlenecks. I know it's a lot of information, but to summarize: the database is slow and not very scalable. ASP.NET session state does not have very scalable, fast or reliable options by default. View state for ASP.NET web forms is also a bottleneck; it's a source of contention and over-utilization of bandwidth. Static pages within the application are going to be executed regardless of whether their content is changing. And then we have the SignalR backplane, which is generally slow, not very reliable and not very scalable, so you need something which is very scalable and very reliable in comparison.

On the next few slides I will talk about the solution, and then we'll go through all of these bottlenecks one by one and I will present different solutions. Please let me know if there are any questions and I'll be very happy to answer them right now. I have some sample applications lined up right here, so I'll be using these. I think at this point there are no questions, so I'm quickly going to get started with the solution, because we have a lot to cover in our next segment.

The Solution: In-Memory Distributed Cache

We have in-memory distributed caching as the solution, and I'll be using NCache as an example product. NCache is our main distributed caching product. It's a .NET based distributed cache, written in .NET and primarily for .NET applications. We'll talk about five different features within NCache that take care of these bottlenecks, and you would see a performance boost within your ASP.NET application. It dramatically improves performance as well as scalability, and these are just the ASP.NET specific features. There are other server-side features which you can tune, and there's a separate webinar on how to improve NCache performance that takes things to a whole new level. In this webinar, I'll talk about five different bottlenecks and five different solutions to those bottlenecks.

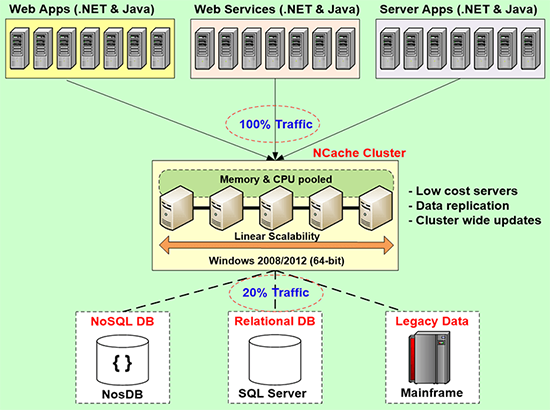

What is an In-Memory Distributed Cache?

So, what is an in-memory distributed cache, and how is it faster, more scalable and, in some cases, more reliable in comparison? A distributed cache is a cluster of multiple inexpensive cache servers which are pooled together into one logical capacity for their memory, CPU and networking resources. So, you have a team of servers joined together into a cluster. It's a logical capacity for applications, but it's a physical set of servers or VMs, so behind the scenes there are multiple resources and you have the ability to add more servers on the fly. Inexpensive cache servers join together and pool their resources, and that's what forms the basis of a distributed cache.

Then we have synchronized cache updates across cache servers. It's very consistent in terms of data. Because there are multiple servers and multiple client applications connected to it, any update made on one cache server has to be visible on the other cache servers and also to the clients that are connected to it. I have used the term visible, which means it has to be consistent. I didn't use replication as a term here; replication is another concept. So, all the updates are applied in a synchronized, consistent manner on all the caching servers, so you have the same view of the data for all your client applications.

Then it should scale out for transactional capacity as well as for memory capacity and other resources. If you add more servers, the capacity should just grow. Say you have two servers handling 10,000 requests per second. Bringing in a third server should take that to 15,000, and with two more servers, doubling the capacity, it should handle 20,000 requests per second. That's our experience: the overall request handling capacity increases as you add servers, and I'll show you some benchmark numbers to support that. This is the deployment architecture of a typical distributed cache.
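
The arithmetic behind that expectation is simple proportional scaling. The Python sketch below just restates the numbers from the talk (two servers at 10,000 requests per second as the baseline); the function name is made up, and real clusters will only approximate this ideal.

```python
def linear_capacity(servers, baseline_servers=2, baseline_rps=10_000):
    """Expected requests per second if capacity grows linearly with server count."""
    per_server = baseline_rps / baseline_servers
    return int(per_server * servers)


capacities = {n: linear_capacity(n) for n in (2, 3, 4)}
print(capacities)  # {2: 10000, 3: 15000, 4: 20000}
```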

You have a team of cache servers. All Windows environments are supported, such as 2008 and 2012, and all your applications connect to it in a client-server model and use this fast, scalable and reliable source in addition to the relational database. The data exists in the relational database, and you can bring some or all of it into the cache. For session state, view state, output cache and the SignalR backplane, this becomes your main store and provider.

NCache Performance Benchmarks

Let me show you some benchmark numbers to support the claim that it's very scalable. These numbers are published on our website. So, these are the test details, and if you look at the performance, this one is growing for reads but not that linearly for writes. But this is the topology that we'll be using in today's webinar, where reads and writes are growing linearly, and this one has reads and writes growing linearly as well as backups. It also comes with backup support, which is why its write capacity is slightly less than that of the partitioned topology, which doesn't have any backup; the backup is an overhead. So, this is our most popular topology for reads and writes, and as you add more servers it scales out the capacity as well.

Hands on Demo

Let me take you to our demo environment, set up a distributed cache and then get started with these features one by one. Please let me know if there are any questions.

I've already downloaded and installed NCache. NCache configuration and setup are outside the scope of this webinar, so I'll skip through some of the details here. Just to let you know, I've downloaded a fully working trial of NCache from our website and installed it on two of my cache server boxes, and one machine on my end is going to be used as a client. You can install NCache on the client as well, or you can use the NuGet packages as needed.

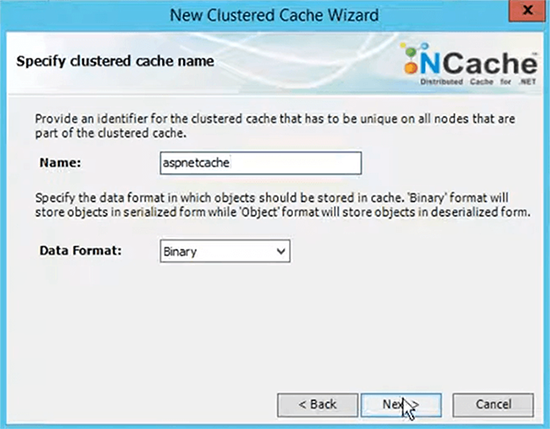

Create a Cache

I'll just create a cache. Let's name it aspnetcache, because that's what we have in focus today.

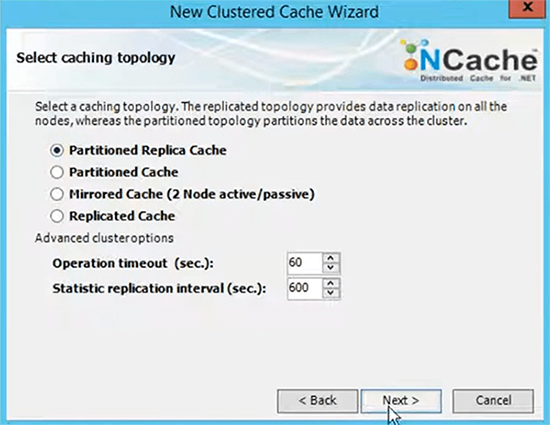

I'll pick the partition-replica cache. All these settings are discussed in great detail in our regular NCache architecture webinars.

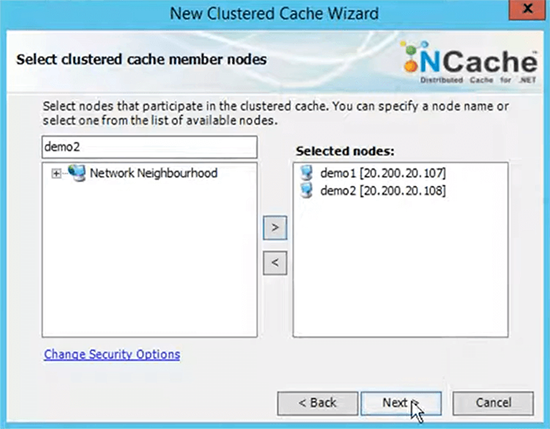

I'll choose the asynchronous replication option, and here I specify the servers which are initially going to be part of my cache cluster.

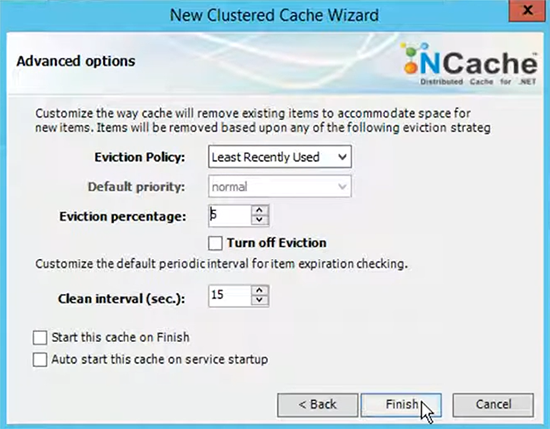

I'll keep everything the same for the TCP/IP port. NCache communication is TCP/IP based; server-to-server as well as client-server communication is managed through TCP/IP. Then there's the size of the cache on each server. I'll keep everything default, keep the evictions default, choose Finish, and that's it.

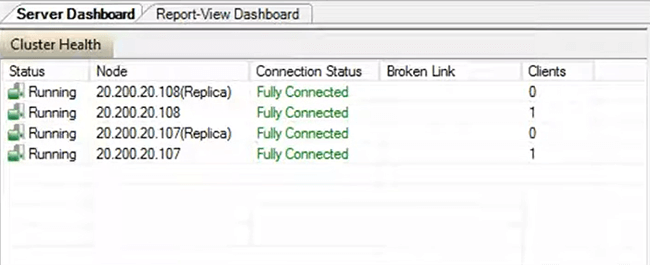

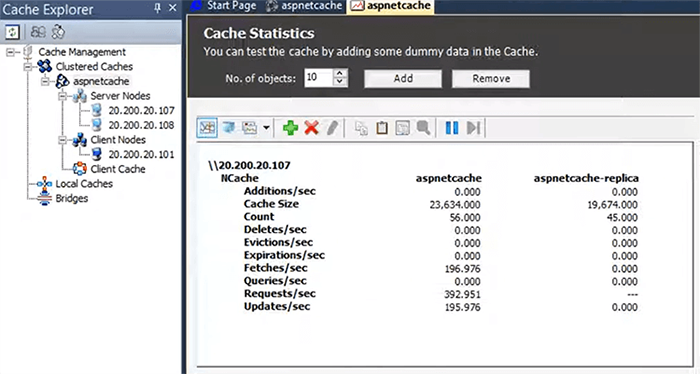

That's how easy it is to set up the cache. On the right pane we have all the settings related to this cache. By the way, you can also use command-line as well as PowerShell tools and manage everything from there. I'll add my box, from where I plan to run the applications, so that the client-side configurations are updated and I am able to connect to this cache. I'll start and test this cache cluster, and that's it. My server-side setup is complete at this point. Please let me know if there are any questions. All right, so, this has started. I'll right-click and choose Statistics.

Ron, I have a question here real quick: does NCache also support Java applications for JSP session caching, or is it only for ASP.NET apps?

Okay, that's a very good question. This webinar is primarily focused on ASP.NET, so I focused more on the ASP.NET side. But yes, to answer this question, NCache fully supports Java applications. We have a Java client, and if you have Java web sessions or JSP sessions, you could very well use NCache. So, the provider approach remains exactly the same: it's a no code change option, and we have a sample application which comes installed with NCache as well. You just need to install NCache in a Windows environment, or you could also use containers, and the application could be on Windows or on a Linux or UNIX environment. It fully supports Java applications.

Monitor Cache Statistics

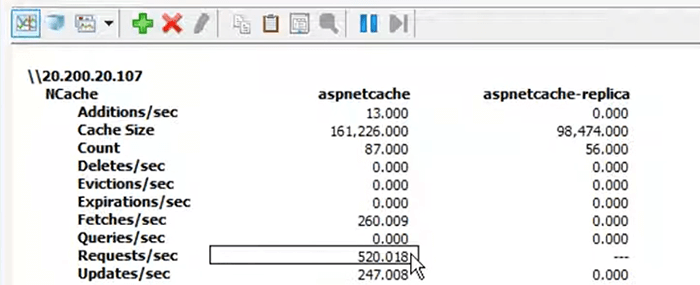

I've opened statistics just to see some monitoring aspects, and I have opened a monitoring tool as well that comes installed with NCache. I'll quickly run a stress testing tool to verify that my cache is configured fine, and next I will get started with the sample applications. One client is connected, so you should see some activity right here. There you go: we have counters showing requests per second from both servers, and we have some graphs being populated already.

So, that ensures that everything is configured properly, and we can get started with our sample applications.

So, before the first sample application, these are the five different optimizations. I'll talk about these one by one and then I'll show you the sample applications. First of all, you can use NCache for data caching; we have a detailed API for that, and it addresses database bottlenecks. You can use a fast in-memory distributed cache. It's faster because it's in memory, and it's more scalable because you can increase the capacity at runtime by adding more servers. You can use it for session state, which is a no code change option. Sessions are very reliable in NCache because they are replicated, sessions are managed in a fast and scalable repository in comparison, and you don't need sticky session load balancing. I'll talk about more benefits once we actually show these sample applications, but just to let you know, these are some of the benefits that you get out of NCache as soon as you plug it in.

For web forms you can cache view state. You store view state on the server end, so you don't need to send it to the browser, and that reduces your view state payload size as well. Then you can use NCache as a SignalR backplane, that's our fourth option, and you can also use NCache for output caching of static content. This is also true for ASP.NET Core: you can use it for session state within ASP.NET Core, and you can also use it for response caching in ASP.NET Core applications.
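
For the first of these optimizations, application data caching, a common way to put a cache in front of a database is the cache-aside pattern. The Python sketch below is a generic illustration and not the NCache API: `ProductRepository` is a hypothetical class, and plain dictionaries stand in for both the database and the distributed cache.

```python
class ProductRepository:
    """Hypothetical repository that reads through a cache before the database."""

    def __init__(self, database, cache):
        self.database = database  # stand-in for the relational database
        self.cache = cache        # stand-in for the distributed cache
        self.db_reads = 0

    def get(self, product_id):
        key = f"product:{product_id}"
        if key in self.cache:
            return self.cache[key]          # served from memory
        self.db_reads += 1                  # only misses reach the database
        value = self.database[product_id]
        self.cache[key] = value
        return value

    def update(self, product_id, value):
        self.database[product_id] = value
        # Drop the stale copy so the next read reloads it from the database.
        self.cache.pop(f"product:{product_id}", None)


repo = ProductRepository(database={1: "laptop"}, cache={})
names = [repo.get(1) for _ in range(5)]
reads_before_update = repo.db_reads
repo.update(1, "ultrabook")
name_after_update = repo.get(1)
print(reads_before_update, name_after_update)
```

Five reads cost one database trip here; the update invalidates the cached entry, so the next read goes back to the database once and then is cached again.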

ASP.NET Session Caching Sample

So, let's get started with ASP.NET session state storage. This is the easiest of all; you can set this up within five minutes and quickly test it. As a matter of fact, I'm going to show you how to set it up and get started with it.

So, that's our first sample application. It comes installed with NCache as well, but I've slightly modified it. For session caching, all you need to do is add an assembly tag. These two assemblies are redundant; they are for another use case, object caching. You only need the Alachisoft.NCache.SessionStoreProvider assembly. Version 4.9 is the latest version, and you need the culture and public key token for this assembly as well. So, this is a typical reference to the session state assembly. After this, you need to replace your existing session state tag with the NCache one. If you already have a session state tag, you replace it; if you don't have one, the mode was InProc by default, and in that case you add this as a new tag. Here the mode is Custom and the provider is NCacheSessionsProvider. The timeout value means that if a session object in NCache stays idle for more than twenty minutes, it automatically expires and is removed from the cache.

Then there are some settings, such as whether exceptions are enabled. This can be used as a sample tag; you can copy it from here and paste it into your application. The most important thing is the cache name. You just need to specify the cache name, aspnetcache. I think I used the same name, aspnetcache, and it resolves the configurations for this sample because you only specify the name. It just reads the configurations for this cache from client.ncconf, and this file can be made part of the project as well, for example if you are using a NuGet package. And that's it. You just need to save this. Let me make this the main page, because we were using two pages here, and I'll run it. This launches the guessing game with the session store provider, and it connects to NCache for session data.
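
The twenty-minute idle timeout mentioned above behaves like a sliding expiration: every access resets the clock, and a session is removed only after it has sat untouched for the full timeout. The Python sketch below illustrates that behavior with a fake clock. `SlidingSessionStore` is a hypothetical class and says nothing about how NCache implements expiration internally.

```python
class SlidingSessionStore:
    """Hypothetical session store where each access resets the idle timer."""

    def __init__(self, timeout_seconds, clock):
        self.timeout = timeout_seconds
        self.clock = clock
        self._items = {}  # session id -> (last_access_time, data)

    def save(self, session_id, data):
        self._items[session_id] = (self.clock(), data)

    def load(self, session_id):
        entry = self._items.get(session_id)
        if entry is None:
            return None
        last_access, data = entry
        now = self.clock()
        if now - last_access > self.timeout:
            del self._items[session_id]     # idle too long: expire and remove
            return None
        self._items[session_id] = (now, data)  # touch: restart the idle timer
        return data


now = [0]  # fake clock in seconds, so the example runs instantly
store = SlidingSessionStore(timeout_seconds=20 * 60, clock=lambda: now[0])
store.save("abc", {"last_guess": 12})

now[0] = 15 * 60
after_15_min = store.load("abc")   # 15 minutes idle: still alive, timer resets
now[0] = 30 * 60
after_30_min = store.load("abc")   # only 15 minutes since last access: alive
now[0] = 51 * 60
after_idle = store.load("abc")     # 21 minutes idle: expired
print(after_15_min, after_30_min, after_idle)
```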

So, I'm expecting a session object to be created in the cache, then I'll read some data back from the session, and I'll show you the session object being created as well. This is a guessing game application. It allows you to guess a number between one and a hundred, and it prints the previous guess from the session object. So, it says the number is between 0 and 23. I'll guess 12; now the number is between 12 and 23, so it read the last number as well. Let's guess again; the number is between 13 and 23. So, let's guess one more time. I'm not able to guess it yet, hopefully I'll get there, but just to let you know, a session object must have been created on the server end.

Let me show you this with the help of a tool, and there it is. NCache test is a keyword that I appended in this sample application. It is there to distinguish between sessions of different applications. There could be a scenario where you have two applications and you use the same cache for both. In that case you can have an app ID appended to the session variable. Typically, though, one application should cache its sessions in a dedicated cache, so it doesn't really matter even if you specify it as an empty string. But this session has been created, and this is your ASP.NET session ID. The session right here is replicated right here, so if this server goes down, you have the session data made available automatically.
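
The purpose of the app ID can be shown with a small Python sketch. The key format below (app ID, a colon, then the session ID) and the app ID values are assumptions made purely for illustration; the actual key layout the provider uses is not shown in the demo.

```python
def session_key(app_id, session_id):
    """Build a cache key; the 'app_id:session_id' layout is a made-up format."""
    return f"{app_id}:{session_id}" if app_id else session_id


shared_cache = {}  # stand-in for one cache shared by two applications

# Two applications happen to see the same ASP.NET session ID.
shared_cache[session_key("NCacheTest", "abc123")] = {"last_guess": 12}
shared_cache[session_key("OtherApp", "abc123")] = {"cart": ["book"]}

print(sorted(shared_cache))          # two separate entries, no collision
print(session_key("", "abc123"))     # empty app ID: the key is just the session ID
```

With a dedicated cache per application there is nothing to collide with, which is why an empty app ID is acceptable in that setup.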

Some more benefits of NCache session state. In comparison to InProc, it's out of process, so your web process is completely stateless, which is what the HTTP protocol prefers. It's more scalable, because you can add more resources. It's fast because it's in-memory, so it's comparable to InProc, and it's very reliable. If a server goes down, you don't have to worry about anything, and most importantly, you don't have to use sticky session load balancing anymore. You can have equal load balancing, and requests can bounce from one web server to another. You don't have to worry about anything because web servers are not storing anything; the actual session objects are stored in NCache, and this is an out-of-process store for your applications. In comparison to StateServer, it's not a single point of failure. If a server goes down or you bring it down for maintenance, you don't have to worry about anything; it would just work without an issue.

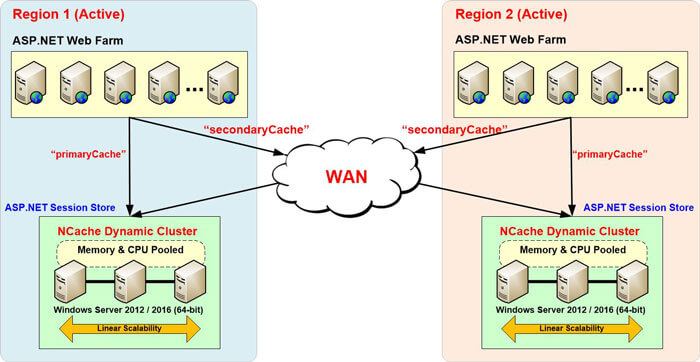

Secondly, it's very scalable; you can add more servers on the fly. It's very reliable, and it's fast in comparison. In comparison to the database, it's not slow; it's fast and very scalable, and in some cases it's even better in terms of reliability, where you don't have replication for databases. So, this covers it. One more important feature within NCache is our multi-site session support, or multi-region sessions, where you can have sessions from two different regions stored in NCache and kept fully synchronized.

If a session request bounces from one server to another or from one region to another, it would automatically fetch the session across the region and bring it here, and vice versa. So, if you need to bring a site down, you could route all your traffic to the other site by keeping the cache running for some period of time. So, that's another feature. One of our major airline customers is actually using this feature for their location affinity. So, this covers our ASP.NET session state. This is a no-code-change provider. Please let me know if there are any questions around it. I've already answered a question around JSP sessions: you can use NCache for Java-based web sessions as well. Assuming there are no more questions, I'll move on.

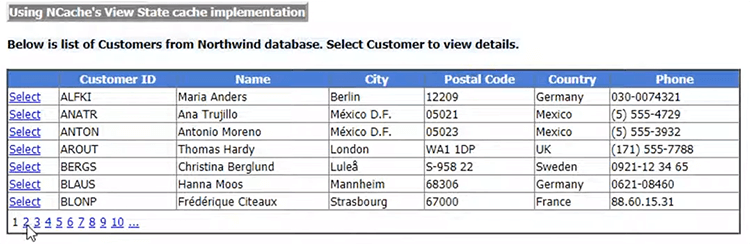

ASP.NET View State Caching Sample

Next, I'll talk about view state. NCache also has a view state provider, and the way it works is that, basically, we keep the view state on the server end. It's again a provider. All you need to do is set up a section group for the content settings; it's ContentOptimization.Configurations.ContentSettings. The name of the section group is nContentOptimization, and it has some settings where you give a cache name; for example, I'm using mycache right now. So, first of all, I'll run without caching. I have the provider plugged in, but I've set view state caching to false. I'll run this and show you the actual view state going back to the browser.

So, I'll show you the default option, then I'll show you the NCache view state, and we'll compare the difference. There are a few web form pages here, and I'll show you the page source.

This is the default view state, the value part. Let me bring it here and create a temporary document. Now, this is the default view state, which is part of my response packet, and when I post back on this page, it's going to be part of my request packet as well. That is for an individual request, and notice how many characters it has appended to the request and response payload. This is the same for all the requests that I make. Let me just show you a few more requests, and let me show you the page source of this one as well.

So, it's almost the same, as you can see on the screen. Now, with NCache, what we have done is intercept your view state with the help of our view state provider. We keep the view state on the server end: for this value part, we create a key and a value for a given web form page and store it on the server end. Since it is stored on the server end, we send a small token back to the browser. That is a fixed-size token. It never really changes in size; it's sent to the browser and then brought back to be used again. When you post back, you actually make a call to NCache, fetch the actual view state from NCache, and then use it on your web form as the view state. That's what we are doing behind the scenes.
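The token exchange described above can be sketched in a few lines of C#. This is only an illustration of the idea, not the NCache view state provider: `ViewStateStore`, its `Save` and `Load` methods, and the `vs:` token prefix are hypothetical names, and a plain in-memory dictionary stands in for the distributed cache.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for the server-side store; a dictionary plays the
// role that the distributed cache plays in the webinar.
public class ViewStateStore
{
    private readonly Dictionary<string, string> _cache = new Dictionary<string, string>();

    // Keeps the full view state on the server and returns a short,
    // fixed-size token that is sent to the browser instead.
    public string Save(string viewState)
    {
        string token = "vs:" + Guid.NewGuid().ToString("N"); // assumed token format
        _cache[token] = viewState;
        return token;
    }

    // On postback, exchanges the token for the original view state.
    // Returns null when the token is unknown.
    public string Load(string token)
    {
        string viewState;
        return _cache.TryGetValue(token, out viewState) ? viewState : null;
    }
}

public static class ViewStateDemo
{
    public static void Main()
    {
        var store = new ViewStateStore();
        string viewState = new string('A', 20000); // pretend this is a large encoded view state
        string token = store.Save(viewState);

        Console.WriteLine("View state length kept on server: " + viewState.Length);
        Console.WriteLine("Token length sent to browser: " + token.Length);
        Console.WriteLine("Round trip intact: " + (store.Load(token) == viewState));
    }
}
```

The token length does not depend on how large the page's view state is, which is where the payload saving comes from.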

The benefit that you get is that your view state packet becomes smaller in size, because it's a token. That has the overall impact of reducing the payload size for request and response, so this improves your performance. Secondly, if you have hundreds of kilobytes of view state traveling back and forth for thousands of requests, it will eat up your bandwidth. So, this is not going to happen with NCache. The NCache view state is a static token, and I'll show you the token real quick.

Ron, let me just jump in with a question real quick: does NCache have any built-in security features, such as encryption, for session and view state caching?

Yes, it does. These are features that you can set up explicitly; these are general NCache features. The view state is already an encoded string, but if you want to further encrypt it, let me see if it's around, yeah, you can enable encryption on the cache itself. You need to stop the cache and set up encryption. We have DES providers, we have AES providers, and we have FIPS-compliant DES and AES providers. So, yes, you can simply set this up, and all the payload between clients and servers would be encrypted and decrypted on the way in and on fetch, respectively. This is something that you can set up on demand. And with this question, I also want to highlight something. This is the view state after NCache has been plugged in as a provider. Notice the difference: it's these many characters versus these many characters. So, this is your default, this is with NCache, and you can see the difference yourself. This reduces the size of the payload, overall performance increases, and bandwidth utilization costs go down. So, you see a lot of improvements in the architecture, and most importantly, your actual view state is never really sent back to the browser anymore. It's going to be stored on the server end, so it's secure in general.

So, based on this question, and thank you for asking it, NCache view state is by default more secure than the default option, because we store it on the server end, and that's where it's actually needed. So, I hope that helps. I'm going to close this down. If there are any other questions around view state, let me know; otherwise, I will move on to our next segment.

Application Data Caching Sample

Let me show you object caching first, because of the time constraint; I think I should cover this first. For database bottlenecks, anything that is stored in the database and slows things down, you can alternatively use a distributed cache like NCache. This is the NCache API here.

Cache Connection

Cache cache = NCache.InitializeCache("myCache");

cache.Dispose();

Employee employee = (Employee) cache.Get("Employee:1000");

Employee employee = (Employee) cache["Employee:1000"];

bool isPresent = cache.Contains("Employee:1000");

cache.Add("Employee:1000", employee);

cache.AddAsync("Employee:1000", employee);

cache.Insert("Employee:1000", employee);

cache.InsertAsync("Employee:1000", employee);

cache["Employee:1000"] = employee;

Employee employee = (Employee) cache.Remove("Employee:1000");

cache.RemoveAsync("Employee:1000");

This is how you connect to the cache, and this is how you dispose the handle towards the end. In between, you make operations. Everything is stored as a key-value pair: the key is a string and the value is a .NET-permitted object. You can map your data tables onto your domain objects and then store and work with those domain objects, either as individual objects or as collections. There are relationships, SQL searches, and the list goes on, but these are the basic create, read, update, and delete operations.

I'll quickly run a sample application and demonstrate how you would actually use NCache for object caching. You need these two assembly references: Alachisoft.NCache.Web and Alachisoft.NCache.Runtime. Once you've done this, let me make this the startup page and show you the code-behind for it. This is an ASP.NET web form, but if you have MVC controllers, you could use the same approach. I have this namespace right here, and within this application I'm initializing mycache. You need to specify the name of the cache, and you could also specify servers here using InitParams, the cache InitParams right here, or you could just specify the name and resolve it through client.ncconf, which I showed you earlier. There is an addObject method, which adds a customer: the name is David Jones, male, a contact number, this is the address, and then I'm calling cache.Add.

Similarly, I'm inserting this after changing the age, and I could also change the name as well, just to ensure that this is updated, and then I'm calling cache.Insert. Then I'm getting the object back and getting the count of objects. I'm removing that object, and I'm also running a load test, where 100 items are going to be updated again and again. So, let's run the sample application. This is how intuitive it is to get started with it. It would just launch the application, and I'll be able to perform all these operations for you. Before I actually do this, I would also like to show you the view states which were cached in NCache.

You could see the keys for the view state for three pages, vs, and this is the actual key of the object, and the actual view state is in the value part. I forgot to mention this. Now, let me just clear the contents, so that we're only dealing with object caching data. Actually, let me just do this from the manager; it's convenient. So, I'll add an object: customer added. I'll fetch this object back, so we have David Jones, age 23. I'll update it and get it back; now it's David Jones 2, and we have 50 as the age. Items in the cache: 1. I'll remove this object; items in the cache: 0. Insert it one more time, since an insert is an add as well, and get the object back, and then I can show you this in the cache. So, I was dealing with one object at this point, and the key was customer with the name of the customer appended to it. Then I'll start the load test now. You would see some activity on the cache: there are requests coming in, and you have client requests dealing with the data.
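The add, get, insert, and remove sequence from the demo can be sketched as follows. This is a minimal stand-in and not the NCache client: `SketchCache` and `Customer` are hypothetical types, a dictionary replaces the cache cluster, and the `Customer:David Jones` key format is assumed from the description above. It also assumes that an add on an existing key fails while an insert adds or overwrites, which is how the walkthrough uses the two calls.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical domain object for the demo.
public class Customer
{
    public string Name { get; set; }
    public int Age { get; set; }
}

// Hypothetical in-memory stand-in for a key-value cache.
public class SketchCache
{
    private readonly Dictionary<string, object> _items = new Dictionary<string, object>();

    public int Count { get { return _items.Count; } }

    // Add: create only. Assumed to fail when the key already exists.
    public void Add(string key, object value)
    {
        if (_items.ContainsKey(key))
            throw new InvalidOperationException("Key already exists: " + key);
        _items.Add(key, value);
    }

    // Insert: add the item if it is missing, overwrite it otherwise.
    public void Insert(string key, object value) { _items[key] = value; }

    // Get: returns null when the key is not present.
    public object Get(string key)
    {
        object value;
        return _items.TryGetValue(key, out value) ? value : null;
    }

    // Remove: deletes the item and returns it, or null if it was not present.
    public object Remove(string key)
    {
        object value;
        if (_items.TryGetValue(key, out value)) _items.Remove(key);
        return value;
    }
}

public static class ObjectCachingDemo
{
    public static void Main()
    {
        var cache = new SketchCache();
        string key = "Customer:David Jones"; // assumed key format

        cache.Add(key, new Customer { Name = "David Jones", Age = 23 });
        cache.Insert(key, new Customer { Name = "David Jones 2", Age = 50 }); // update in place

        var fetched = (Customer)cache.Get(key);
        Console.WriteLine(fetched.Name + ", age " + fetched.Age);
        Console.WriteLine("Items in the cache: " + cache.Count);

        cache.Remove(key);
        Console.WriteLine("Items in the cache: " + cache.Count);
    }
}
```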

And if I simply run this dump cache keys tool again, it would show me the hundred keys dumped together. So, these are the keys that I added. This is how simple it is to get started with object caching. Any data that belongs in the database and slows things down or limits your scalability, you can bring into NCache using our object caching. It could be anything: domain objects, collections, datasets, images. Any sort of application-related data can be cached using the object caching model. I hope this helps. All right, this covers our data caching. Next is our SignalR Backplane.

ASP.NET SignalR Backplane Sample

Let's talk about SignalR. With NCache, we have a powerful pub/sub messaging platform. So, we have a sample application, and I'll also show you the NuGet packages. NCache libraries come installed with the installation, or you could use our NuGet packages. If you go to the online NuGet repository and browse for NCache, you will see all the NuGet packages. Alachisoft.NCache.SDK is for object caching, Linq is for LINQ queries, we have the session state provider, and then we have the open source and community packages as well.

This is the NuGet package that I have included in this application. Let me just build it and see if it works fine, because it should have the NuGet package added to it. All right, for the SignalR Backplane, all you need to do is make sure that you have the SignalR NuGet package added; I mean, install it if it's not installed already. So, you have the NuGet package added, and it adds the SignalR assemblies as needed and some helper assemblies as well. After that, if you go to the web.config, I've just added some settings in there: the name of the cache, which is aspnetcache, and then the event key, which is the name of the topic that you'd like for this. So, you provide a topic name, based on which the SignalR messages for this particular chat application are transmitted. Then all you need to do is a one-line code change, where you specify the name of the cache and the event key, point it towards NCache, and run the sample application. It would automatically use NCache for the SignalR Backplane; NCache becomes your backplane. That's how simple it is to plug NCache into a SignalR application.

The benefits that you get: it's native .NET, unlike Redis; it's fast, scalable, and reliable in comparison to databases as well as message buses; and we have a fully functional, fully supported pub/sub model backing this up. So, you can use pub/sub messaging directly as well, but one extension of that, one use case of pub/sub messaging, is our SignalR Backplane, and it's pretty easy to set up as well.

So, this application has started. There should be a key here; I think it's hard to find the key, but just to show you the chat through NCache, this works, and I'm going to transmit this to another browser by signing in another user, let's say Nick. If I bring this back, you could see that it actually broadcast the message to the other connected client as well. So, that's how easy it is. Let me just try one more time and check that it's working as expected, so there you go. This is being driven through NCache, it's very easy to set up, and this is how you set it up. The last feature within NCache, the fifth booster, is output caching.

Ron, I have a question regarding SignalR: are SignalR messages stored as individual objects within NCache, or does NCache use notifications for this?

NCache uses pub/sub notifications for this. So, we have a pub/sub messaging platform in the background. In the cache itself, you would just see one object, or you won't even see an object, because it's a topic. So, there's a topic which gets created; it's a logical channel to which multiple worker processes are connected, and they broadcast all the SignalR messages to that topic. So, it's a notification framework. If you're asking whether you would see a lot of messages added to the cache, no, you wouldn't; you would just see one topic, and then there are messages within the topic. There are a few statistics in the PerfMon counters that you can visualize to see how many messages are there within the topic, but as far as individual objects are concerned, you won't see those objects; you just see one object as a topic. I hope that helps.
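The topic idea from this answer can be sketched with a small in-memory publish/subscribe class. It is only a conceptual illustration, not the NCache pub/sub API or the SignalR backplane: `Topic`, `Subscribe`, and `Publish` are hypothetical names, and the two subscribers stand in for two web servers connected to the same topic.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory topic: one named channel that many
// subscribers attach to, instead of one cached object per message.
public class Topic
{
    private readonly List<Action<string>> _subscribers = new List<Action<string>>();

    public string Name { get; private set; }

    public Topic(string name) { Name = name; }

    // Registers a handler that is called for every published message.
    public void Subscribe(Action<string> handler) { _subscribers.Add(handler); }

    // Delivers the message to every subscriber and returns how many received it.
    public int Publish(string message)
    {
        foreach (var handler in _subscribers) handler(message);
        return _subscribers.Count;
    }
}

public static class BackplaneDemo
{
    public static void Main()
    {
        var topic = new Topic("chat"); // assumed topic name, playing the role of the event key

        // Each lambda stands in for a web server relaying messages to its own clients.
        topic.Subscribe(m => Console.WriteLine("Server A relays: " + m));
        topic.Subscribe(m => Console.WriteLine("Server B relays: " + m));

        int delivered = topic.Publish("Ron: hello from browser 1");
        Console.WriteLine("Delivered to " + delivered + " servers");
    }
}
```

A client connected to either server sees the message, because both servers listen on the same topic.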

ASP.NET Output Caching Sample

The final feature, and I think we're short on time as well, so I'll quickly go through this, is our output caching. You just need to set up the output caching section, and you need a reference to the NCache output cache provider DLL. This is right here. You need these references: NCache.Adapters, Web, Runtime, and Cache. After that, you simply refer to the NOutputCacheProvider and set the name of the cache to aspnetcache; the version should be the latest. The way it works is that it caches the output of static pages. You simply run it, and it would cache the output of a static page, whether it's the entire page or portions within the page that are static. You decorate your static pages or portions with the OutputCache directive and specify a Duration, a Location, and VaryByParam. If these params are not changing, that automatically means that the output is the same.
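The Duration and VaryByParam behavior described above can be sketched as a small cache keyed by page path plus parameter value. This is a simplified illustration, not the ASP.NET output cache or the NCache provider: `OutputCacheSketch`, `GetPage`, and `RenderCount` are hypothetical names, and the current time is passed in explicitly so the expiry logic is easy to follow.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical sketch: rendered output is reused while the entry is fresh
// and the varying parameter value is unchanged.
public class OutputCacheSketch
{
    private class Entry
    {
        public string Html;
        public DateTime ExpiresAt;
    }

    private readonly Dictionary<string, Entry> _entries = new Dictionary<string, Entry>();
    private readonly TimeSpan _duration;

    // Counts how often the page actually had to be executed.
    public int RenderCount { get; private set; }

    public OutputCacheSketch(TimeSpan duration) { _duration = duration; }

    public string GetPage(string path, string paramValue, Func<string> render, DateTime now)
    {
        string key = path + "?" + paramValue; // one cached copy per parameter value
        Entry entry;
        if (_entries.TryGetValue(key, out entry) && entry.ExpiresAt > now)
            return entry.Html; // served from cache, page is not executed

        string html = render(); // page execution, e.g. database calls
        RenderCount++;
        _entries[key] = new Entry { Html = html, ExpiresAt = now + _duration };
        return html;
    }
}

public static class OutputCacheDemo
{
    public static void Main()
    {
        var cache = new OutputCacheSketch(TimeSpan.FromSeconds(60));
        DateTime start = new DateTime(2020, 1, 1, 12, 0, 0);

        cache.GetPage("/products", "category=1", () => "<html>cat 1</html>", start);
        cache.GetPage("/products", "category=1", () => "<html>cat 1</html>", start.AddSeconds(30));
        cache.GetPage("/products", "category=2", () => "<html>cat 2</html>", start.AddSeconds(30));
        cache.GetPage("/products", "category=1", () => "<html>cat 1</html>", start.AddSeconds(61));

        Console.WriteLine("Pages executed: " + cache.RenderCount);
    }
}
```

In this run, the second request is a cache hit, while the changed parameter and the expired entry each cause a fresh render.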

So, cached output is presented to your end users. You don't have to re-execute the pages and you don't have to involve databases, so you take the load off the worker processes and off the database, save expensive CPU and machine resources, and get ready-made page output made available for your end clients. So, it improves your overall performance. ASP.NET provides this as a feature, and NCache takes it to a distributed level, where we have very scalable, very fast, and very reliable page output stored in NCache without any code changes. This is true for ASP.NET Core as well; by the way, you can use NCache within ASP.NET Core web applications too. We did a separate webinar on that. There we have IDistributedCache, we have session storage, and we also have ASP.NET Core response caching, along with EF Core caching.

So, these are some of the features that I wanted to highlight, and this completes our five performance boosters. I hope you liked it. I think we're very short on time, so if there are any other questions, now is the time to ask them; please let me know.

In general, I would also like to mention that NCache is a fully elastic hundred percent uptime dynamic cache cluster, no single point of failure. We have many topologies and features like encryption, security, compression, email alerts, database synchronization, SQL like searches, continuous query, pub/sub model, relational data in NCache, these are all covered as part of different features. So, if there are any specific questions around these please feel free to ask those questions as well otherwise, I'll just conclude it and hand it over to Nick.

Thank you very much, Ron. One last question here: is it possible to set up a custom provider for session caching in NCache?

NCache already is a custom provider. NCache falls under the custom mode: the default modes are InProc, StateServer, and SQLServer, and then the fourth mode from ASP.NET is Custom. So, the NCache provider itself is a custom provider. I'm slightly confused by the question; if there are any specific requirements around this, please let me know. Otherwise, NCache itself is a custom provider for ASP.NET.

Okay, thanks a lot, Ron. If there are no more questions, I'd like to thank everybody for coming and joining us today, and thanks again, Ron, for your valuable insight into this. Guys, if you have any questions, you can always reach us by sending us an email at support@alachisoft.com. You can get in contact with our sales team by dropping us an email at sales@alachisoft.com, and somebody from the sales team will be happy to work with you, make sure that all your questions are answered, and provide all the information that you need. With that, I would also suggest that you download a trial version of NCache from our website; it comes with a 30-day trial. We also have an edition which has a paid support option, as well as a free open source edition, thirteen years with that a lot of content. Thanks, everybody, for joining us, and we'll see you next time. Thank you.