Redis vs NCache

Recorded webinar

By Ron Hussain and Zack Khan

NCache is a native .NET Open Source distributed cache that is very popular among high transaction .NET, .NET Core and Java applications. Redis is developed by Redis Labs and is currently used by Microsoft in Azure. In this webinar, learn how NCache and Redis compare with each other. The goal of this webinar is to make your task of comparing the two products easier and faster, especially in qualitative aspects such as features, performance, scalability, high availability, data reliability, and administration.

Here is what this webinar covers:

- Performance & Scalability

- Cache Elasticity (High Availability)

- Cache Topologies

- SQL & LINQ Searching the Cache

- Third Party Integrations (EF, EF Core, NHibernate etc)

Today, we have the topic of comparing two products which are very similar but different in a lot of ways as well. So, we have NCache, which is our main distributed caching product for .NET and .NET Core applications, and then we'll compare it from a feature standpoint with Redis. So, we have a lot to cover in this. I am going to go over a lot of technical details starting from platform and the technology stack. Then we'll talk about the clustering: how these two products compare in regards to cache clustering, what the different benefits are that you get out of using these products and, in comparison, how NCache is better. Then I'll talk about different features. We'll go through a feature-by-feature comparison in regards to the different use cases that you can use these products in.

For this webinar, I have picked NCache Enterprise 5.0.2. As far as Redis is concerned, we will primarily focus on Azure Redis. That is open source Redis 4.0.1.4. But I will also give you details about the Redis open source project, as well as Redis Labs, which is the commercial variant of Redis. So, we'll compare NCache with all these flavors but our primary focus will be Microsoft Azure Redis, the hosted model of Redis that you can get in Microsoft Azure.

The Scalability Problem

So, before we get started, I'm going to go over some introductory details about these two products. So, why exactly do you need a distributed caching solution?

Only after that would you go ahead and compare different products. So, typically it is the scalability and performance challenge that you may be experiencing within your application. It could be that your application is getting a lot of data load and, although your application tier is very scalable (you can always create a web farm, you can add more resources on the application tier), all those application instances must go back and talk to back-end data sources. And when you need to go back and talk to those data sources, that is where you see performance issues, because databases, typically relational databases, are slow in terms of handling transactional load.

There is a performance issue associated with them and then in terms of scaling out, for example, if you need to have a lot of request handling capacity or requirements around that we need to handle a lot of requests and your applications are generating a lot of user load, database is not designed to handle that extreme transactional load. It's very good for storage that's where you can store a lot of data but going around having transactional load on that data is something database would not be a very good candidate for. It may choke down. It would give you slowness and end user experience can be degraded.

So, you can get an impact on the performance and you don't have ability to increase capacity within the application architecture.

The Solution: In-Memory Distributed Cache (NCache)

Solution is very simple, that you use an in-memory distributed caching system like NCache which is super-fast because it's in memory. So, in comparison to a relational database or a file system or any other data source which is not memory based, if it's coming from a disk in comparison to storing your data on the memory, in-memory, it's going to make it super-fast. So, first benefit that you get out of it is that you get super-fast performance out of NCache.

Second benefit is that it's a cache cluster. It's not just a single source. You can start off with one server but typically we recommend that you have at least two servers and you create a cache cluster. As soon as you create that cache cluster, it distributes load across all servers and you can keep on adding more servers at runtime.

So, you can scale your capacity, you can, you know, increase capacity at runtime by adding more servers and you use it in combination to your backend relational databases as well. It's not a replacement of your conventional relational databases and we'll talk about some use cases down the line.

Distributed Cache Deployment (NCache)

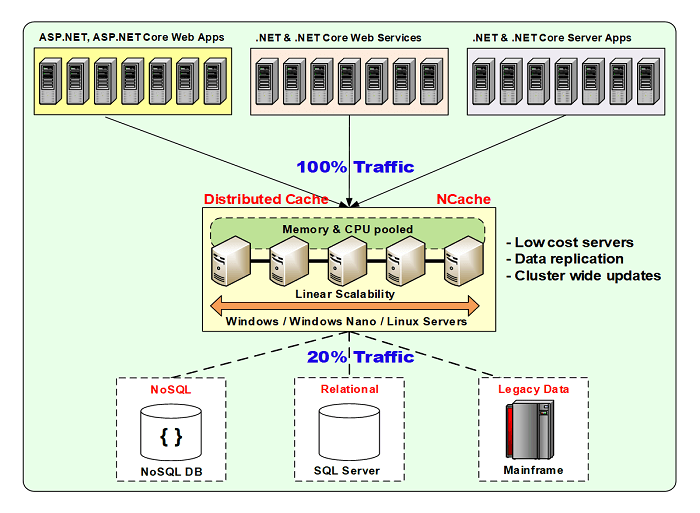

Here's a typical deployment.

I'm using NCache as an example for now but down the line within this presentation we'll compare how Redis gets deployed and how NCache gets deployed and what are the flexibilities available within these products.

So, for NCache, it's very flexible. You can choose to deploy it on Windows as well as on Linux environment. It's available on-prem as well as it's supported in cloud environments. It's available in Azure as well as AWS marketplaces. So, you can just get a pre-configured image of NCache and get started with it. Docker containers for Windows as well as for Linux are available, which you can use on any platform, where you need to use this.

Typically, your applications whether they're hosted on-prem or in cloud it could be an App service, it could be a Cloud service, it could be a Microservice, it could be Azure website, any kind of application can connect to it in the client-server model and it sits in between your application and your back-end database and that's the typical usage model. Idea here is that you would store data inside NCache and as a result you would save expensive trips to the back-end database. You would save trips to the database as much as possible and whenever you need to go to the database, you would always go to the database, fetch data and bring it into the cache so that next time that data exists and you don't have to go to the database. And, as a result your applications performance and overall scalability is improved because now you have in memory access which improves performance. You have multiple servers which are hosting and serving your requests, your data requests. So, it's more scalable in comparison. And then, there are high availability and data reliability features which are also built into NCache protocol.
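The usage model described here, check the cache first and go to the database only on a miss, can be sketched as follows. This is a minimal illustration and not NCache's API: the `CacheAside` class, the `load_from_db` callable and the in-process dictionary standing in for the cache cluster are all assumptions made for the example.

```python
from typing import Callable, Dict, Optional


class CacheAside:
    """Cache-aside wrapper: serve from the cache, fall back to the database on a miss."""

    def __init__(self, load_from_db: Callable[[str], Optional[str]]) -> None:
        self._cache: Dict[str, str] = {}    # stand-in for the distributed cache
        self._load_from_db = load_from_db   # hypothetical database loader
        self.db_trips = 0                   # counts the expensive trips we are trying to save

    def get(self, key: str) -> Optional[str]:
        if key in self._cache:
            return self._cache[key]         # cache hit: no database trip
        self.db_trips += 1                  # cache miss: go to the database
        value = self._load_from_db(key)
        if value is not None:
            self._cache[key] = value        # keep it so the next read is a hit
        return value


# A plain dict plays the role of the back-end database.
database = {"customer:1": "Alice", "customer:2": "Bob"}
store = CacheAside(database.get)

first = store.get("customer:1")    # miss: loaded from the database, then cached
second = store.get("customer:1")   # hit: served from the cache
```

After the two reads, `store.db_trips` is 1: only the first read reached the database.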

NCache can be hosted on the same boxes, where your applications are running. Or it could just be a separate tier. In cloud, preferred approach would be that you use a separate dedicated tier of cache and then your applications are, application instances are running on their respective tier. But, both models are supported as far as NCache is concerned.

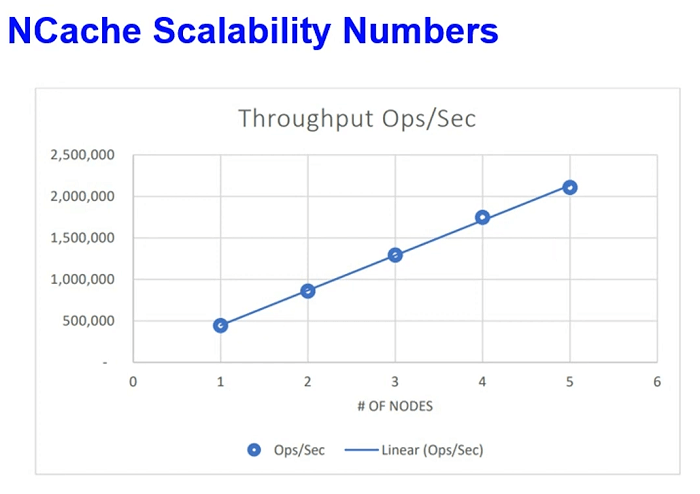

Some scalability numbers. We've recently conducted these tests in our AWS lab, where we simulated read and write request load and we kept on increasing load and after certain point when we saw that servers are being maxed out we increased number of servers in the cache cluster. So, from 2 to 3 servers and then 3 to 4, we were able to achieve 2 million requests per second throughput with just 5 NCache servers and this is not, this was not a touch-and-go data. This was a real-life application data but simulated in our AWS lab within our applications. And, the latency factor was also very optimized. We were able to achieve all of this within microsecond latency. So, individual request performance was not degraded, when we were able to achieve all of this load.

Common Use Cases: Distributed Cache

Some use cases and this is something which is common for Redis as well but I will talk about how NCache would compare.

App Data Caching

Where you cache almost anything that you normally fetch from the back-end database. The data exists in your database and now you want to cache it, so that you save expensive trips to the database, and we've already established that the database is slow and not very optimal in terms of transactional load handling. We have a lot of database synchronization features along this line, but here you simply connect to NCache and use our APIs to make data calls to NCache. So, you can cache almost anything. It could be your domain objects, collections, datasets, images; any sort of application-related data can be cached using our data caching model.

ASP.NET / ASP.NET Core Caching

Then we have our ASP.NET and ASP.NET Core specific caching. That's again a technical use case, where you can use it for ASP.NET or ASP.NET Core session state caching and as an ASP.NET or ASP.NET Core SignalR Backplane; NCache can be plugged in as a Backplane. For ASP.NET Core you can also use it for Response Caching. The IDistributedCache interface and sessions through the IDistributedCache interface are also supported with NCache, and for legacy applications you can also use it for View State and Output Caching.

Wanted to toss a quick question your way, Ron. We got one come in. The question is: do NCache and Azure support a serverless programming model?

Absolutely. In terms of Azure deployment, you can either have your applications deployed on servers or, as far as your application part is concerned, those could be serverless applications as well. You can just include our NuGet packages inside your application and those applications can just make NCache calls whenever they need to. They don't even need any installation of NCache or a server setup as far as application resources are concerned. But as far as the NCache server-side deployment itself is concerned, because NCache is the data source, it must have a VM or a set of VMs that your applications connect to in order to retrieve and add data.

So, from the NCache cache server standpoint, as a source you need NCache servers, but as far as your applications are concerned those could strictly be serverless and there are no issues. Even a microservices architecture works. That's a very common example, where there are a lot of microservices. There could be an Azure function which deals with a lot of data, and that data can come from NCache. So, you treat NCache as a data source, whereas your applications can be serverless, and NCache is fully compatible with that model.

Pub / Sub Messaging and Events

Then another use case is around Pub/Sub messaging, and that revolves around microservices, because that is one of the pressing use cases where you can use messaging for serverless applications. Microservices are loosely coupled serverless applications, and building communication between them is a big challenge. So, you can use our Pub/Sub messaging platform, where you can utilize our event-driven async event propagation mechanism, where multiple applications can publish messages to NCache and subscribers can receive those messages.

Since it's based on an async event-driven mechanism, publisher applications don't have to wait for acknowledgement or for messages to be delivered, and similarly subscribers do not have to wait or poll for messages. They get notified via callbacks. So, it's very flexible and that's another use case where you can use NCache as a Pub/Sub messaging platform for your applications.
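To make the async, callback-driven model concrete, here is a small in-process sketch. It is not NCache's Pub/Sub API; the `Broker` class, its method names and the `orders` topic are hypothetical. The point it illustrates is that `publish` returns immediately, and subscribers are invoked through callbacks instead of polling.

```python
import queue
import threading
from collections import defaultdict


class Broker:
    """Toy topic broker: publishers enqueue and return, a worker thread notifies subscribers."""

    def __init__(self):
        self._subscribers = defaultdict(list)   # topic -> list of callbacks
        self._pending = queue.Queue()            # messages waiting to be delivered
        threading.Thread(target=self._deliver, daemon=True).start()

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Returns right away; the publisher never waits for delivery.
        self._pending.put((topic, message))

    def _deliver(self):
        while True:
            topic, message = self._pending.get()
            try:
                for callback in list(self._subscribers[topic]):
                    callback(message)            # subscriber is notified, no polling
            finally:
                self._pending.task_done()

    def wait_until_delivered(self):
        # Only needed in this demo so the result can be inspected deterministically.
        self._pending.join()


broker = Broker()
received = []
broker.subscribe("orders", received.append)

broker.publish("orders", "order-1001 created")
broker.publish("orders", "order-1002 created")
broker.wait_until_delivered()
```

A single delivery thread keeps messages in publish order here; a real messaging platform would add durability, multiple subscribers per process and delivery guarantees that this sketch leaves out.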

NCache History

Some more details and then we'll talk about the differences between NCache and Redis. NCache was launched in 2005. It has been in the market for over 15 years now. Current version of NCache is 5.0, 15th version. We have lots and lots of customers. NCache is also available in Open Source edition. That you can download from our website as well as from GitHub repository.

Some of NCache Customers

Some of our customers. You can get a detailed list as well.

Platform & Technology

Next we'll talk about how NCache compares with Redis. The first segment covered some introductory details about distributed caching technology in general. Now we'll focus directly on how NCache compares with Redis, and I have some segments that I have formulated.

So, the first section that we have defined is platform and technology, and I've mentioned initially that we're targeting NCache 5.0.2. So, NCache 5.0 SP2 is the main version on the NCache side, and from the Redis standpoint we will be using Azure Redis as the comparison and we'll also talk about Open Source and Redis Labs as part of that. Most of these details are common to the different flavors of Redis.

Native .NET Cache

So, coming from an Azure background, if you plan on choosing a product so, number one thing would be the compatibility with the platform.

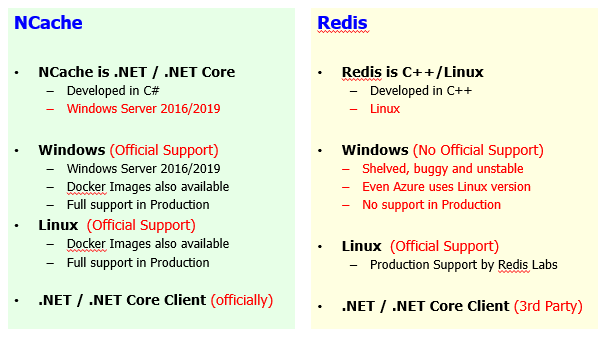

So, NCache itself is written in 100% .NET. It's a native .NET or .NET Core product, as far as your applications are concerned, right. So, basically, it's written in .NET and primarily for .NET applications and it gets deployed on Windows Server 2016, 2019, even 2012. The only prerequisite for NCache is .NET Framework or .NET Core for that matter. Whereas Redis is written in C. NCache is written in .NET. It's developed 100% in, actually, C#, which is the main language that we're using, and it's 100% native .NET and .NET Core. Whereas Redis is a C-based, Linux-based solution.

So, coming from a Windows perspective, Windows background and if you have your applications which are written in .NET, the natural choice would be to use a product which is also written in .NET so that you're on the same technology stack. You don't have to have a lot of variations within the application development stack. So, that is one issue or one difference between these two products.

The second aspect is Windows versus Linux, and what is available in NCache and what is available on the Redis side. From the standpoint of NCache deployment, Windows is the preferred deployment, but we also have a Linux deployment available with the help of our .NET Core Server release. So, we're fully compatible with Windows Server 2012, 2016 and 2019. Our Docker images are also available for the Windows variant of NCache. So, you can just download our Docker image and spin up a Windows image of NCache as needed, and we support it fully in production environments. It's official support from our end. Whereas, if you compare Redis, even in Microsoft Azure Redis is hosted on Linux. The preferred deployment model for Redis is Linux. The Windows variant is a third-party project. Microsoft Open Tech has a ported version of it. There is no official support from Redis itself. The project itself is shelved. It's buggy and unstable, and even Azure Redis, as discussed earlier on, uses the Linux version. The big issue with this is that you don't have official support from the makers of Redis, so if you want to use the open source project and deploy it on your own premises, that's where you would see a lot of issues.

As part of this, I would also like to highlight one other aspect: if you are using NCache on-premise and you now want to migrate off on-premise and use it in Azure, the same software works as is. So, there's no change needed in moving NCache from on-prem to Azure. Similarly, across cloud vendors, if you are using NCache on Azure you can just migrate to AWS if you need to, because the exact same software is available across the board on all platforms. Whereas, as far as Redis is concerned, Azure Redis is a hosted model which gets deployed on Linux as far as the backend deployment is concerned, but you don't have the same variant available on-premise. So, you have to deal with open source Redis or some third-party provider. You may even have to go with a commercial variant, which is a completely different product.

So, the main point that I would like to highlight here is that Redis on-premise, which is open source or some commercial version, versus Redis in Azure or Redis in AWS, which is ElastiCache: these are completely separate products. So, there's a transition, there's a lot of change. You can't port Redis from one environment to another without going through some changes. Some feature sets are missing. Some APIs are different. The deployment model is completely changed between these products. With NCache there are no such changes: if you keep NCache on-premise on Windows or Linux and now you want to migrate and go to Azure, it would be the exact same product, and if you then want to move from Azure to AWS, to change the cloud vendor, it's more flexible in comparison to Redis. So, NCache is a lot more flexible.

Linux support: NCache is fully compatible and officially supported. Even performance is tested, and Linux performance is super-fast, on par with NCache on Windows. We have Docker images available, fully supported in production, and we have fully integrated web-based management and monitoring tools that you can access from anywhere. So, even your Linux deployments can be managed and monitored the same way you would manage and monitor your Windows deployments with NCache. Linux is also supported on Redis. Production support is available from Redis Labs. Azure Redis is also hosted on the Linux version. So, it's supported by the vendor itself.

The second aspect after the platform is again .NET and .NET Core, the technology stack. We have an official client available. We have implemented it and we fully support it, and this is why NCache is compatible across the board. So, if you choose on-premise or Azure or AWS environments, you would have the same flavor of NCache and its client available across the board. And, if there are any changes that need to be made, we will provide those changes officially because we own everything as far as the project is concerned. Whereas, for Redis the clients are third party. So, support for different languages comes from different vendors. So, there could be a feature set difference. There could be a release cycle difference. So, you have to rely on third-party clients as far as client requirements are concerned.

So, I want to highlight some aspects around NCache being native .NET and .NET Core product. NCache is fully supported on Windows, as well as, on Linux. Whereas, Redis is not very stable on Windows. It's the third-party ported version and Linux support is available and that's the, so, you have to rely on Linux support as far as Redis is concerned. So, coming from a Microsoft technology background this is something that you have to rely on.

Cache Performance & Scalability

Second aspect is our cache performance. That is also very important aspect.

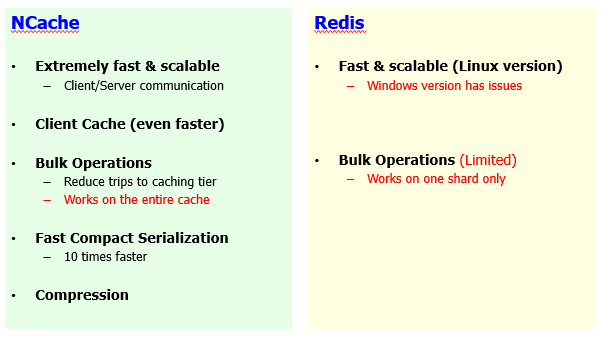

Both products are very fast and that's the idea here that main benefit of NCache and Redis, the main reason that you would choose such a product is the performance improvement aspect. We've already established that databases are slow and they're not very scalable. These products are fast and very scalable in comparison. So, I would not take away anything from Redis. Just the Windows version is not stable and there are performance issues but if you have Linux version, it's also very fast and scalable and it's extremely fast and NCache is also very fast. It's very scalable. We have our own implemented TCP/IP based clustering protocol, which is very optimized and very robust in performance.

However, there are some differences here as well. Within NCache we have a lot of performance improvement features. We have recently done a webinar as well, where we tackled six different ways where you can improve NCache performance. If you set up NCache by default, it will give you very good performance but on top of it, based on your use cases, you can enable different features and you can further improve performance and one of those features is our Client Cache.

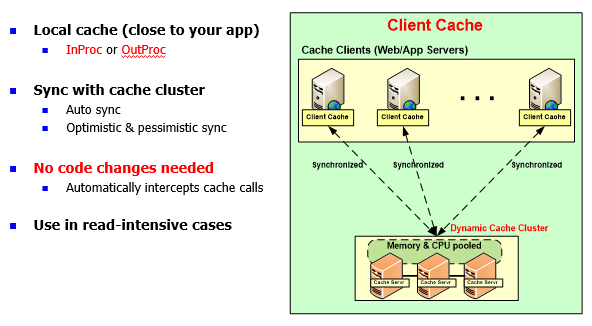

NCache: Client Cache (Near Cache)

Client Cache is a feature which is unique to NCache. Redis does not have this feature.

It's a client-side local cache, which again is possible even for serverless applications, where you can have an InProc copy within your application process, or, for server-based applications, you can use an out-of-process client cache. The idea here is that it saves expensive trips across the network to your cache cluster. The cache was already saving trips to the back-end data sources. Now you can have a cache in between. Assume that you have 100 items in the cache: if you fetch some items on the application side, let's say 10 items, those 10 items would be brought into the client cache automatically, and next time your application would find that data closer to your application and as a result it would save expensive network trips.

And, this is a synchronized client cache. Synchronization is managed by NCache. Any change in the client cache is propagated to the server cache as well, because that's the master copy and the client cache is a subset of the data, and that change is also propagated to other client caches. If you have a reference data scenario, where you have a lot more reads than writes, we highly recommend that you turn on client cache, and it would give you very good performance in comparison to a cache which is running against our database.
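The behavior of a synchronized client cache can be sketched with two small classes. This is only a model of the idea and not how NCache implements it: `ClusteredCache`, `ClientCache`, the `network_trips` counter and the update callback are assumptions for illustration.

```python
class ClusteredCache:
    """Stand-in for the cache cluster, which holds the master copy of the data."""

    def __init__(self):
        self.data = {}
        self.clients = []
        self.network_trips = 0    # every call that reaches the cluster costs a trip

    def get(self, key):
        self.network_trips += 1
        return self.data.get(key)

    def put(self, key, value, origin=None):
        self.network_trips += 1
        self.data[key] = value
        # Propagate the change to every other client cache.
        for client in self.clients:
            if client is not origin:
                client.on_remote_update(key, value)


class ClientCache:
    """Local subset of the clustered cache, kept next to the application."""

    def __init__(self, cluster):
        self.cluster = cluster
        self.local = {}
        cluster.clients.append(self)

    def get(self, key):
        if key in self.local:
            return self.local[key]             # served locally, no network trip
        value = self.cluster.get(key)
        if value is not None:
            self.local[key] = value            # keep a local copy for next time
        return value

    def put(self, key, value):
        self.local[key] = value
        self.cluster.put(key, value, origin=self)   # master copy is updated too

    def on_remote_update(self, key, value):
        if key in self.local:                  # only refresh items held locally
            self.local[key] = value


cluster = ClusteredCache()
cluster.data.update({f"item:{i}": i for i in range(100)})
app_a, app_b = ClientCache(cluster), ClientCache(cluster)

for _ in range(3):
    app_a.get("item:7")                        # only the first read crosses the network
app_b.get("item:7")
trips_for_reads = cluster.network_trips

app_a.put("item:7", 700)                       # change made through one client cache
value_seen_by_b = app_b.get("item:7")          # the other client cache sees the new value
```

Four reads cost two network trips, and the write through `app_a` shows up in `app_b` without `app_b` going back to the cluster.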

We recently did a POC with one of our customers, one of our bigger customers, where they had a workflow which was taking about 46 seconds with default configurations. They were making a bunch of NCache calls and retrieving data. So, it was primarily a read-intensive use case. By the way, there are two flavors of client cache: you can keep it out of process, which means a separate cache process runs on the application box, or you can have InProc, where the client cache runs inside your application process. InProc does not have serialization or process-to-process communication overhead. So, it's extremely fast, even faster than OutProc. So, with that customer the workflow was taking about 46 seconds to start off. Then we turned on the out-of-process client cache and it brought it down to 3 to 4 seconds, and then we further turned on the InProc client cache and we were able to achieve all of this within 400 to 500 milliseconds. From 46 seconds to 400 to 500 milliseconds, that's the kind of improvement we're talking about, and this feature is completely unavailable in any other products or even any other flavors of Redis, including Redis Labs, the open source project and Azure Redis.
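For a sense of scale, the numbers quoted for this POC translate into the following speedup factors. The figures are the ones stated above; the helper function is just illustrative arithmetic.

```python
# Timings quoted in the POC, expressed in milliseconds.
baseline_ms = 46_000                 # default configuration: about 46 seconds
out_of_process_ms = (3_000, 4_000)   # out-of-process client cache: 3 to 4 seconds
in_process_ms = (400, 500)           # InProc client cache: 400 to 500 milliseconds


def speedup_range(baseline, improved_range):
    """Return (lowest, highest) speedup factor for a range of improved timings."""
    fastest, slowest = improved_range
    return baseline / slowest, baseline / fastest


outproc_speedup = speedup_range(baseline_ms, out_of_process_ms)
inproc_speedup = speedup_range(baseline_ms, in_process_ms)
```

That works out to roughly 11.5x to 15x for the out-of-process client cache and 92x to 115x for InProc, relative to the 46-second baseline.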

So, you can tune performance, using our client cache and it's a no code change job. It's just a configuration that you turn on.

Bulk operations are supported on both sides, but with NCache our bulk operations work on the entire cache cluster, which means that if you have ten servers and you have fully distributed data, a bulk call would fetch data from all those servers and a consolidated result is retrieved. So, all those servers work in combination with one another to formulate the result, and you get a result which is complete in nature. Whereas Redis bulk operations are on a shard level. So, you have to deal with data on a given shard. If you have, let's say, multiple nodes in the cache cluster and you have master shards available, you would only be able to perform bulk operations on a given shard.

So, that's the limitation. Otherwise, this is a good performance improvement feature where, instead of going back and forth for individual requests, you send one big request and get all the data at once, and as a result you improve your performance.
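A cluster-wide bulk read can be pictured as: group the requested keys by the server that owns them, send one request per server, and merge the answers. The sketch below models that with plain dictionaries. The `PartitionedCluster` class and the CRC-based key placement are assumptions for illustration, not the distribution scheme of NCache or Redis.

```python
import zlib
from collections import defaultdict


class PartitionedCluster:
    """Toy partitioned cache: each key lives on exactly one server."""

    def __init__(self, server_count):
        self.servers = [dict() for _ in range(server_count)]
        self.requests_sent = 0

    def _owner(self, key):
        # Deterministic placement; a real product uses its own distribution map.
        return zlib.crc32(key.encode("utf-8")) % len(self.servers)

    def put(self, key, value):
        self.servers[self._owner(key)][key] = value

    def get_bulk(self, keys):
        """One request per owning server, merged into a single consolidated result."""
        keys_by_server = defaultdict(list)
        for key in keys:
            keys_by_server[self._owner(key)].append(key)

        merged = {}
        for index, server_keys in keys_by_server.items():
            self.requests_sent += 1
            partition = self.servers[index]
            merged.update({k: partition[k] for k in server_keys if k in partition})
        return merged


cluster = PartitionedCluster(server_count=3)
for i in range(30):
    cluster.put(f"product:{i}", i)

wanted = [f"product:{i}" for i in range(30)]
result = cluster.get_bulk(wanted)
```

Thirty items come back from at most three requests, instead of thirty individual round trips.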

Serialization is another aspect, because most of your time would be spent on serializing and deserializing data, and that's true for NCache as well as for Redis. By default, both products would serialize and deserialize, but with NCache there is a way to reduce your serialization and deserialization overhead. We have fast compact serialization, which optimizes the serialization time that your application would normally take. Your objects become compact. So, without any code changes you can define them as compact types and NCache would ensure that it runs compact serialization on them at runtime, and it would reduce your serialization and deserialization overhead.

Finally, we have compression feature as well. Compression is done on the client end. Typically, if you are dealing with bigger objects, let's say, 2MB, 3MB or let's say 500 kilobytes, that's a bigger object. So, typically we recommend that you deal with smaller object but if you have bigger objects, there is a lot of network utilization and then performance also gets degraded. With NCache you can turn on compression. That is a no code change option, which is not available on the Redis side and it would automatically compress items while adding into the cache. So, smaller object gets added and transfers between, travels between your application and the cache and similarly the same smaller object is retrieved back on the application end as well. Dealing with smaller payload improves your applications performance. So, overall application performance would be increased if you have compression turned on.

So, for any object greater than, let's say, 100 kilobytes, we recommend that you definitely turn on compression, and there's a threshold that you can set so that only bigger objects are compressed and smaller objects are left as is.
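Threshold-based compression on the client side can be sketched like this. The 100 KB threshold is the figure mentioned above; the function names, the one-byte flag and the use of `zlib` are assumptions for the example and say nothing about the format NCache actually uses.

```python
import zlib

COMPRESSION_THRESHOLD_BYTES = 100 * 1024   # the "let's say 100 kilobytes" figure

COMPRESSED = b"\x01"
RAW = b"\x00"


def encode_for_cache(payload: bytes, threshold: int = COMPRESSION_THRESHOLD_BYTES) -> bytes:
    """Compress only payloads above the threshold; prefix a flag so reads can tell."""
    if len(payload) > threshold:
        return COMPRESSED + zlib.compress(payload)
    return RAW + payload


def decode_from_cache(stored: bytes) -> bytes:
    """Reverse encode_for_cache on the way back to the application."""
    flag, body = stored[:1], stored[1:]
    return zlib.decompress(body) if flag == COMPRESSED else body


small = b"x" * 1024                        # 1 KB: left as is
large = b"customer-record;" * 20_000       # 320,000 bytes: compressed before sending

stored_small = encode_for_cache(small)
stored_large = encode_for_cache(large)
```

The small object travels unchanged apart from the flag byte, while the large one travels as a much smaller payload and is restored by `decode_from_cache` on read.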

So, all these performance improvement features, client cache, bulk operations, compact serialization and compression, are either not available or limited in Redis. For example, client cache is not available. Bulk operations are available but they're limited. There are no serialization optimization options, and compression is something which is not available. So, that's where you have a clear difference between NCache and Redis, where NCache is a complete package with a lot of performance-centric features built into it.

High Availability

Next segment is high availability, and that's where you will see a huge feature-set difference between NCache and Redis. High availability is another aspect where these products can be compared. For mission-critical applications, this is a very important aspect of any data source you depend on. Now you're bringing in your data, which normally is in the database, and within the database you would have some kind of mirroring, some kind of backups, right?

So, with data being moved in a product which is distributed cache, although it improves your performance, it's very scalable but high availability is a very important aspect. For mission-critical applications any downtime is not acceptable. It would impact your business and user experience can be impacted. So, it's not affordable. So, it's very important that your application is always able to get response from the cache where data exists. So, here we have a huge set of feature differences.

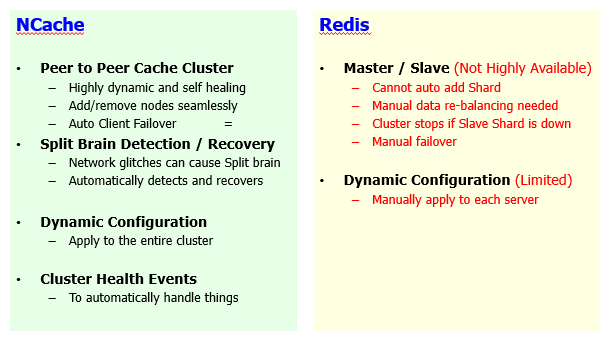

Cache Cluster

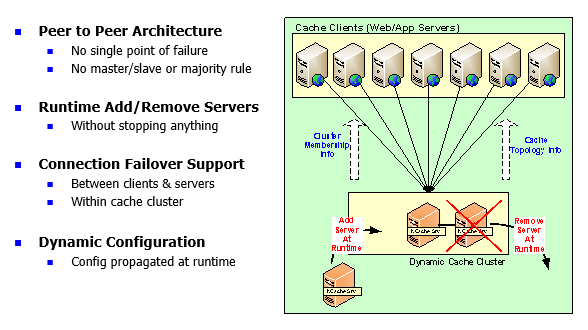

NCache is a 100% peer-to-peer architectured cache cluster.

It’s dynamic and self-healing and I'll talk about how, you know, that works out but in comparison Redis uses master / slave. So, being peer-to-peer architecture NCache allows you to automatically add and remove servers and it's seamless to your applications. You can go ahead and add as many servers as you need to. For example, you have started off with 2 servers. Now you want to add 3rd server, you can do that on the fly. You don't have to stop the cache or the client applications which are connected to that cache. Seamless experience is observed. So, your applications can continue to work without having any downtime or data loss with our high availability and data reliability features. Whereas, in Redis you cannot automatically add new shards. Because there is no automatic data rebalancing. That is the core of the dynamic nature of our cache cluster. Within NCache, it automatically rebalances data if you want to add new servers.

So, there are two scenarios. One where you add a new server to bring capacity, to bring more scalability and the other scenario is that you bring a server down.

So, let's first tackle the node being added scenario. A new node gets joined. With NCache, your data would automatically be distributed.

For example, going from 2 to 3 servers: if you had 6 items here and you add another server, existing data will be balanced onto the newly added server. So, that server would take a chunk of the data from the existing servers, and that will be done automatically. It's dynamic in nature. So, there is an automatic data rebalancing which happens. With Redis, it's a manual rebalancing of data, and this is true for Azure Redis, because there are different tiers available in Azure. There is a basic, there is a mid-level and there is an advanced. The clustering only comes into play with the advanced tier, which is also the expensive one, and on top of it they need a minimum of 3 servers, which is again a limitation.
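The 2-to-3 server example can be worked through in a few lines. This is a simplified model of rebalancing, assuming six equally sized data buckets and a "take from the most loaded server" rule; the `add_server` function and the server names are hypothetical and do not describe NCache's actual distribution algorithm.

```python
from typing import Dict, List


def add_server(owners: Dict[str, List[int]], new_server: str) -> Dict[str, List[int]]:
    """Return a new bucket map in which new_server has taken an even share of buckets."""
    result = {name: list(buckets) for name, buckets in owners.items()}
    result[new_server] = []

    total = sum(len(buckets) for buckets in result.values())
    target = total // len(result)            # even share per server

    while len(result[new_server]) < target:
        # Take one bucket from whichever existing server currently holds the most.
        donor = max(
            (name for name in result if name != new_server),
            key=lambda name: len(result[name]),
        )
        result[new_server].append(result[donor].pop())
    return result


# Two servers holding six items between them, as in the example above.
before = {"server1": [0, 1, 2], "server2": [3, 4, 5]}
after = add_server(before, "server3")
```

Each of the three servers ends up with two of the six items, and only the two items that moved to `server3` had to be transferred.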

With NCache you can have clustering fully up and running with just 2 servers. And on top of that, with Redis, adding a new server requires manual data rebalancing. That's a big issue. So, you would have some kind of limitation on the application when you are planning to add capacity. Whereas, with NCache you can achieve this at runtime. You can add more servers on the fly.

Second aspect is a server going down. Within Redis we have a master and slave concept. A master replicates data to a slave shard, and that replication can be done in either a sync or an async manner. In Redis, if a slave shard goes down, the master itself stops and the cluster becomes unusable. So, that's a big issue and that can happen at any time, particularly in on-premise deployments where you have an open source or Redis Labs deployment of Redis. In that case, if a server goes down and that happened to be the slave of a master shard, the cluster itself would become unusable. So, you have to get involved and do a manual intervention to recover from that scenario. Whereas within NCache it's automatic. So, any server can go down, whether it holds an active or a backup partition, and the surviving nodes keep working.

For example, this server goes down. This is an active partition and it also has a backup partition. If this entire server goes down, the backup partition gets promoted to active and you get all the data from the surviving node, and there is connection failover built into it. When any server goes down, clients detect that at runtime and fail over to the surviving nodes. Here I would like to reinforce the concept that with Redis you need a minimum of 3 servers. This is the concept of majority rule: the cluster coordinator has to win an election. That is not the case with NCache. You can start a fully working cache cluster with just 2 nodes and it will give you full high availability features. If any server goes down, a surviving node is fully capable of working without any issues, and that is not the case with Redis.
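The reason a majority rule implies a minimum of 3 servers follows from simple arithmetic. The sketch below is generic majority-quorum math, not code taken from Redis; the function names are made up for the example.

```python
def majority(cluster_size: int) -> int:
    """Smallest number of votes that is more than half of the cluster."""
    return cluster_size // 2 + 1


def tolerated_failures(cluster_size: int) -> int:
    """How many servers can be lost while a majority can still be formed."""
    return cluster_size - majority(cluster_size)


# cluster size -> (votes needed, failures tolerated)
summary = {size: (majority(size), tolerated_failures(size)) for size in (2, 3, 5)}
```

With 2 servers a majority needs both votes, so losing either one leaves no majority; 3 is the smallest size that survives the loss of one server under this rule.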

Dynamic configurations. You can change cluster configurations at runtime and this involves adding new servers, removing servers or changing some settings on the cache cluster. That is something that you can apply on an entire cache cluster at runtime without stopping it. Whereas for Redis, it's limited. There is a lot of configuration that you have to apply manually. Then there are a lot of cluster health events available on the NCache side, which you can subscribe to. You can use monitoring and management tooling on top of it. Whereas, Redis does not have those features.

So, this is a very important concept. Let me just sum it up for you. Adding and removing a server in Redis is something which would give you a lot of issues. When you add a server, data would not be automatically rebalanced. So, it's not a hundred percent peer-to-peer architecture, and the cache cluster is limited in its capacity. Similarly, if a slave shard goes down the cluster itself becomes unusable, because there's a distribution issue which now you have to manage manually. Failover is also manual, right? So, if a server goes down you have to manually fail over and start using the surviving nodes. If you add new servers, you would have to manually shift data over to the newly added servers.

So, these are all the limitations that you would have and, you know, I would be surprised to see anyone using a production deployment of such nature. Say you now need to add capacity or you need to bring servers down for maintenance; that would be very difficult with a product like Redis. Whereas NCache gives you a seamless experience, where you can add or remove servers on the fly without impacting anything.

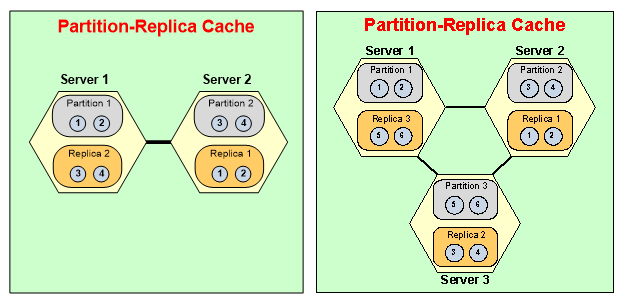

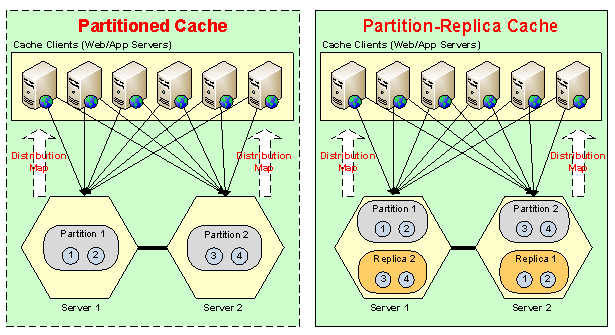

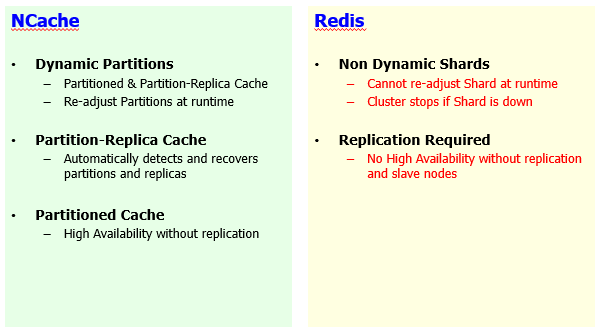

Dynamic Partitions / Shards

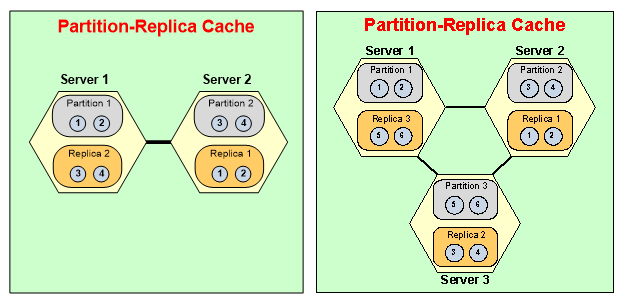

Now another concept within, you know, the cluster is the self-healing mechanism.

NCache has dynamic partitions. You add more servers, data gets redistributed, new partitions are formulated at runtime. Similarly, you bring a server down, the cluster would make the backup available and it would heal itself and formulate a healthy 2 node cache cluster if you brought it down from 3 to 2. And then there's the reliability aspect. NCache has replicated partitions, which are also available on Redis in the form of slaves, but their high availability is dependent on replication. With Redis you would not have high availability if you don't have slave shards configured. So, you have to have slave shards available. Whereas, with NCache, we have topologies.

For example, Partitioned Cache, where we have master shards, master partitions. If this server goes down you still have high availability because clients would detect that and they would fail over and start using the surviving node. They would have data loss, and that's true for Redis as well, because there's no replication, but it's still highly available. Then we have an enhancement to this, where we have replication support as well. If this server goes down, not only is the backup of the server made available, clients also fail over automatically. So, Redis is limited. Its high availability is dependent upon replication. It's not highly available if replication is not turned on, which is also a limiting factor.

And then the self-healing mechanism, where no manual intervention is needed.

If you start off with 3 servers and you bring a server down, you'd lose an active partition, a master, and then you also lose a slave of another server. So, in that case server 3's backup was on server 1, so this becomes activated. It joins into the active partitions at runtime. No intervention is needed. No manual work is needed. And then server 2's partition would formulate a healthy backup partition on server 1. So, the cluster would heal itself automatically and this is the dynamic nature of NCache in comparison to Redis. For Redis, shards cannot be readjusted at runtime. The cluster stops if the slave shard goes down. The data redistribution is not dynamic. High availability is dependent on replication, and that's not the case with NCache. NCache provides you high availability even without replication.
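The 3-to-2 healing sequence can be pictured with a toy simulation. This is a rough Python sketch, not NCache code: the class, its methods, the ring-style backup placement and the byte-sum key placement are all assumptions chosen to keep the example small and reproducible. It also assumes at least two servers are running when one fails.

```python
from typing import Dict, List, Optional


class ToyPartitionReplicaCluster:
    """Toy model: every server owns one active partition and holds the
    backup of its ring predecessor. All names here are hypothetical."""

    def __init__(self, servers: List[str]) -> None:
        self.servers = list(servers)
        self.active: Dict[str, Dict[str, str]] = {s: {} for s in self.servers}
        # backups[holder][owner] is a copy of owner's active partition kept on holder.
        self.backups: Dict[str, Dict[str, Dict[str, str]]] = {}
        self._rebuild_backups()

    def _owner(self, key: str) -> str:
        # Deterministic placement (byte sum) so the example is reproducible.
        return self.servers[sum(key.encode()) % len(self.servers)]

    def _holder_of(self, owner: str) -> str:
        # The backup of a server lives on the next server in the ring.
        i = self.servers.index(owner)
        return self.servers[(i + 1) % len(self.servers)]

    def _rebuild_backups(self) -> None:
        self.backups = {s: {} for s in self.servers}
        if len(self.servers) < 2:
            return  # a single server has nowhere to place a backup
        for owner in self.servers:
            self.backups[self._holder_of(owner)][owner] = dict(self.active[owner])

    def put(self, key: str, value: str) -> None:
        owner = self._owner(key)
        self.active[owner][key] = value
        if len(self.servers) > 1:
            self.backups[self._holder_of(owner)][owner][key] = value

    def get(self, key: str) -> Optional[str]:
        return self.active[self._owner(key)].get(key)

    def fail(self, server: str) -> None:
        """Simulate losing a server, then heal with no operator action."""
        holder = self._holder_of(server)
        recovered = self.backups[holder].pop(server)  # backup is promoted
        del self.active[server]   # the failed server's active partition is gone
        del self.backups[server]  # so is the backup it was holding
        self.servers.remove(server)
        # Merge the promoted data into the survivors and redistribute.
        everything = dict(recovered)
        for partition in self.active.values():
            everything.update(partition)
        self.active = {s: {} for s in self.servers}
        for k, v in everything.items():
            self.active[self._owner(k)][k] = v
        self._rebuild_backups()  # survivors re-create each other's backups


cluster = ToyPartitionReplicaCluster(["s1", "s2", "s3"])
for i in range(6):
    cluster.put(f"k{i}", f"v{i}")
cluster.fail("s3")  # s3's backup lived on s1, matching the walkthrough above
```

After `fail("s3")` every key is still readable, and `s1` and `s2` each hold the other's backup, which is the healthy 2 node shape described above.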

So, all these benefits that you get out of NCache make it a far superior product, because these are completely missing or limited features in Redis, and this is true for Azure Redis. This is also true for open source because these are very comparable products, and this is also true for the Redis Labs offering as well.

NCache Demo

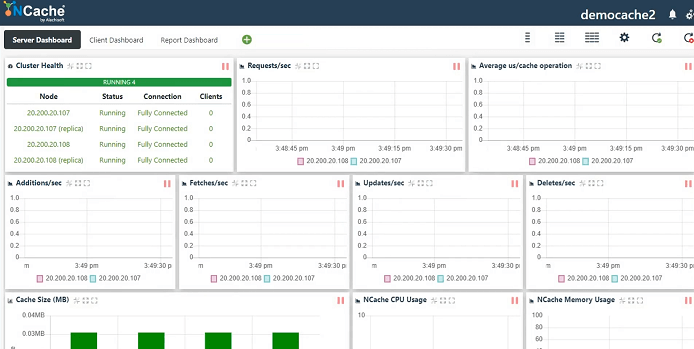

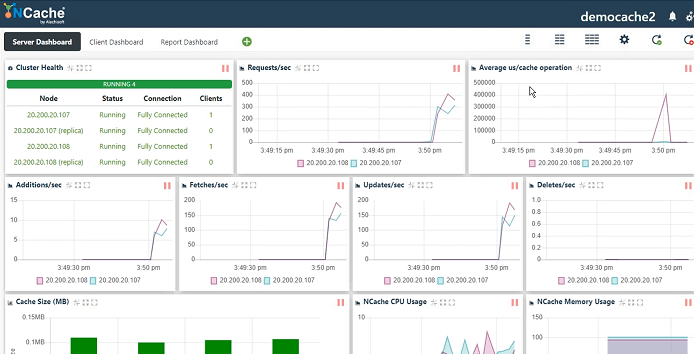

I'm going to now spend some time on, you know, showing the actual product in action so that you have some insight into how NCache gets configured. So, this is our demo environment. I've been working with it. So, I will just create a new cache. This is our web management tool which comes installed with NCache. Mode of serialization could be either binary or JSON. It's entirely up to you. I'll just name the cache and I'll show you how to create a cache cluster, connect a client application and monitor and manage it as well.

So, I’ll keep everything simple because the main focus in this webinar is around NCache vs. Redis, so, I'll keep all the details simple. Partitioned Replica, that's our most recommended topology. Async replication between active and backup. You can also choose Sync, but Async is faster so I'm going to go with that. Size of the cache cluster. Then I'm going to specify these server nodes where NCache is already installed. TCP port. NCache uses a TCP/IP based communication protocol. So, I'll keep everything simple and on this I will just enable eviction so that, if the cache becomes full, it automatically removes some items from the cache and as a result makes room for the newer items. Start this cache and finish. Auto start this on service startup, so, each time my server gets rebooted, it automatically joins the cache cluster and that's it. That's how simple it is to configure a cache cluster and then you use it, which I'm going to show you next.

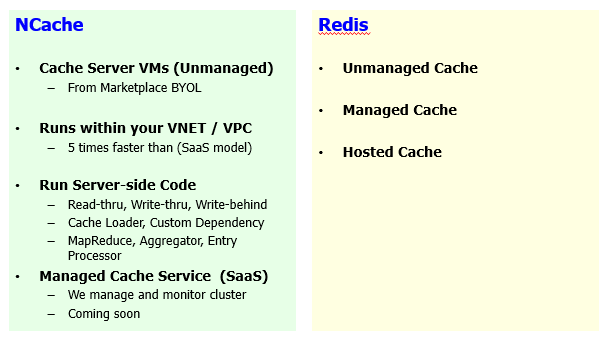

Cloud Support (Azure and AWS)

So, our managed service model is coming up. Our next release is focused on that which is I think two to three weeks down the road. So, we will have a fully managed NCache software as a service model in Azure as well as in AWS.

At the moment, it's going to be the VM model. If you have on-premise, you can use physical or VM boxes. If you choose Azure you need to either get your VM set up through marketplace or you can just set up a VM and then install NCache software by downloading from our website. And then we also have containerized environments. We have Docker images and we are fully supported on Azure Kubernetes Service, EKS - Elastic Kubernetes Service and any other Kubernetes platform, for example OpenShift. NCache is fully integrated and fully supported on those platforms already. As far as the managed aspect is concerned, that is something which is coming up. So, down the line two to three weeks from now it would be fully available.

So, I'm going to show you the statistics window, which shows perfmon counters, and by the way these monitoring options are available for NCache on Windows as well as on Linux environments, right?

So, I'm going to run a stress testing tool. I think there's one already running for another cache. So, I'm going to go ahead and run one more and this would simulate a dummy load on our cache cluster. You just specify the name and it automatically discovers the servers by using the configuration files and it just connects to it.

So, we have fully connected cluster status, Requests per second showing throughput, latency by Average Microsecond per cache operation, Additions, Fetches, Updates, Deletes, Cache Size, CPU, Memory. So, you get a centralized monitoring view. You can use this tool. You can also use Windows perfmon.

For Linux based we have our customized monitoring. So, you can use our monitoring tool directly for Linux servers as well and you can also use any third-party tool for monitoring NCache as well. So, that was a quick peek into our cache creation process. Some monitoring and management aspects.

So, coming back. Since we've discussed some details, the next thing that I would like to discuss is the cloud offering / cloud support. Now with Redis itself, you can choose an unmanaged cache, you can also choose a managed cache, and then there's a hosted service which is available. The managed option is from third-party vendors. The hosted option is Azure Redis, where you have an open-source variant of Redis customized by Microsoft, and that's available as a hosted model. Whereas, on the NCache side, we have the cache server model, the VM model. I've already discussed the container approach. It's fully compatible with Windows as well as with Linux containers. We have video demonstrations available for Azure Service Fabric for Windows containers, Microservices architecture details. We have Azure Kubernetes Service, which is using Linux Containers. EKS – Elastic Kubernetes Service, and then I think we've also done Red Hat OpenShift Containers through Kubernetes.

So, those are all the container deployment options available and it's flexible, you know, it's not platform specific. So, you can deploy it in any kind of containerized platform without any issues. The managed service is coming up, so, we already discussed that. So, that's something where NCache would have a managed service but that's in our next version.

One important aspect is the benefit of using a VM model: although you have to connect to a VM instead of a service, you control everything. You can run server-side code, which I will cover next, but the most important aspect is the performance aspect. We already discussed that there are a lot of performance features within NCache which are missing in Redis. If you choose to have Azure Redis, you would have to connect to Azure infrastructure. So, those are VMs, those are running in a separate virtual network. These are located nearby but again they are far off. It's different from your own virtual network that you have in Microsoft Azure, where you have all of your application deployments.

With NCache you can choose our NCache deployment on the same virtual network, as your application virtual networks. For example, your App service, your Azure website, your Azure Microservices, they are running on an Azure virtual network. You can choose to deploy Azure VMs on the same virtual network and improve your application performance. Based on our own testing within our lab, NCache was four to five times faster than SaaS model of Redis that you normally get in Microsoft Azure. So, that's a very important aspect that I would like to highlight.

On top of it you get a lot of control on your VM. You have full control on starting a cache, increasing the size, and you get the full capacity. There is no limit on request units, there is no limit on size, there is no footprint of usage and you're not being charged for it. You bring your own license and that could be perpetual, that could also be a subscription license, so that can be very flexible in terms of licensing. On top of it, you can run server-side code on top of your NCache servers. You can fully manage this. You can fully optimize this. You can implement a lot of interfaces, such as read-through, write-through, write-behind, cache loader and some compute grid features such as MapReduce, Aggregator, Entry processors, and this is only possible with NCache. Even our SaaS model, which would be a hosted model, would have all these offerings available, which is not going to be the case with Redis. So, that's our platform.

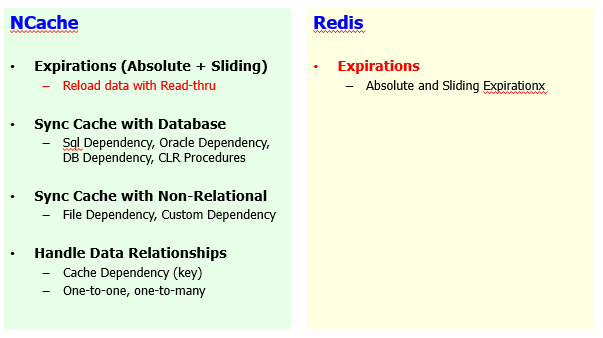

Keeping Cache Fresh

Next segment, next 15 minutes I would spend on the, you know, feature level comparison and for that I have some segments defined. So, I'll get started if you need to keep cache fresh and that's very important, there should be a separate webinar just on this, how to keep cache fresh specifically in regards to back-end data sources, in regards to your application use cases.

So, in comparison to Redis, you know, NCache has a lot of features on this side as well.

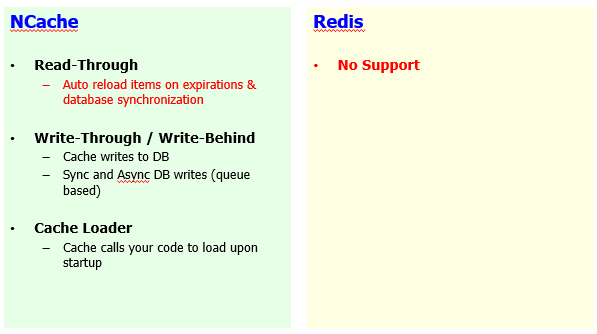

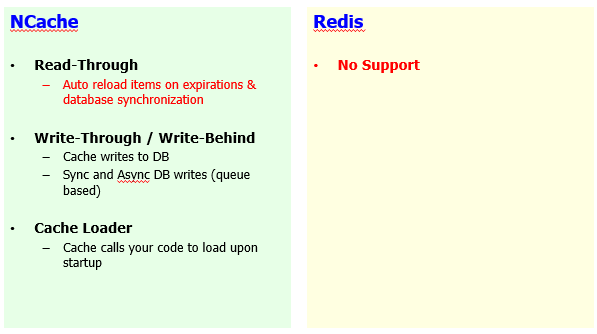

We have time-based expiration, which is absolute and sliding, but we have an auto reload mechanism available for that as well. Redis only has absolute and sliding expiration without any reload mechanism. For reloading, we allow you to implement an interface, called read-through handler, which is server-side code. Again, that's possible because NCache allows you to have full access to your VMs where NCache is hosted. So, you can deploy server-side code on top of NCache and use NCache computational power to back it up.
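The difference between absolute and sliding expiration is easy to see in a small sketch. This is illustrative Python only, with a hypothetical `ExpiringCache` class and an injectable clock so the example is deterministic; it does not reflect the NCache or Redis API and leaves out the auto reload step.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class ExpiringCache:
    """Toy cache with absolute and sliding expiration (hypothetical names)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        # key -> (value, expires_at, ttl, sliding)
        self._items: Dict[str, Tuple[Any, float, float, bool]] = {}

    def put(self, key: str, value: Any, ttl: float, sliding: bool = False) -> None:
        self._items[key] = (value, self._clock() + ttl, ttl, sliding)

    def get(self, key: str) -> Optional[Any]:
        entry = self._items.get(key)
        if entry is None:
            return None
        value, expires_at, ttl, sliding = entry
        now = self._clock()
        if now >= expires_at:
            del self._items[key]  # expired: behave like a miss
            return None
        if sliding:
            # Each access pushes the deadline out by the full interval.
            self._items[key] = (value, now + ttl, ttl, sliding)
        return value


now = [0.0]  # fake clock, advanced by hand
cache = ExpiringCache(clock=lambda: now[0])
cache.put("absolute-item", "a", ttl=10)
cache.put("sliding-item", "s", ttl=10, sliding=True)

now[0] = 8.0
cache.get("absolute-item")   # hit, deadline stays at 10
cache.get("sliding-item")    # hit, deadline moves to 18

now[0] = 12.0
absolute_result = cache.get("absolute-item")  # past its fixed deadline
sliding_result = cache.get("sliding-item")    # still inside the moved deadline
```

The absolute item disappears at its fixed deadline no matter how often it is read, while the sliding item stays alive as long as it keeps being accessed within the interval.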

You can synchronize your cache with the database. NCache is very strong on database synchronization. We have SQL dependency. We have DB dependency, which is OLEDB compliant. We have .NET CLR Stored Procedures. So, all these features allow you to synchronize your cache with the database. And the idea here is if there's a change in the database, a record in the database changes and you had that record cached, these two sources can be out of sync. So, with NCache, if there's a change in the database you can automatically invalidate or reload that data in NCache at runtime. This is a feature unique to NCache. No other products have this feature. So, you can have a fully synchronized cache with your back-end database and this is not only true for relational databases, we have features on the non-relational data sources side as well.

File dependency is another feature where you can make items dependent on a file. If the content of the file changes, you get items removed or reloaded automatically. And custom dependency, you can use it with any source. It could be a NoSQL database, it could be a file system or relational, any connector, any web service. So, you can have items invalidated based on your flexible requirements. We have given an implementation of this dependency with Cosmos DB. So, we've implemented synchronization of NCache with Cosmos DB. If you are using NCache alongside Cosmos DB, you can use custom dependency and I think I have done a webinar on this as well.

Handling relational data. So, relational data has relationships. Items in the cache are key value pairs, so you can make relationships between different items, and that's something which is not available on the Redis side. So, you would have to deal with each item separately. Whereas, with NCache you can combine items in one-to-one, one-to-many or many-to-many groups. When the parent item goes through a change, the child item can automatically be invalidated or reloaded, as needed.
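A parent-child relationship between cached items can be sketched as follows. This is a simplified Python illustration with a hypothetical `DependencyCache` class, not the NCache dependency API; it only shows the invalidate path, not reload.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, Optional, Set


class DependencyCache:
    """Toy cache where items can depend on other items (hypothetical names)."""

    def __init__(self) -> None:
        self._items: Dict[str, Any] = {}
        # parent key -> keys that depend on it
        self._children: Dict[str, Set[str]] = defaultdict(set)

    def put(self, key: str, value: Any, depends_on: Iterable[str] = ()) -> None:
        self._items[key] = value
        for parent in depends_on:
            self._children[parent].add(key)

    def get(self, key: str) -> Optional[Any]:
        return self._items.get(key)

    def remove(self, key: str) -> None:
        """Remove key and, transitively, everything that depends on it."""
        stack = [key]
        while stack:
            current = stack.pop()
            self._items.pop(current, None)
            stack.extend(self._children.pop(current, ()))

    def update(self, key: str, value: Any) -> None:
        """Change a parent: its dependents are invalidated, the parent is kept."""
        for child in self._children.pop(key, set()):
            self.remove(child)
        self._items[key] = value


cache = DependencyCache()
cache.put("customer:1", {"name": "Alice"})
cache.put("order:10", {"total": 50}, depends_on=["customer:1"])
cache.put("order:11", {"total": 75}, depends_on=["customer:1"])

cache.update("customer:1", {"name": "Alice B."})  # one-to-many invalidation
```

After the update, the customer is still cached with its new value and both orders are gone, so the next read of an order has to fetch a fresh copy.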

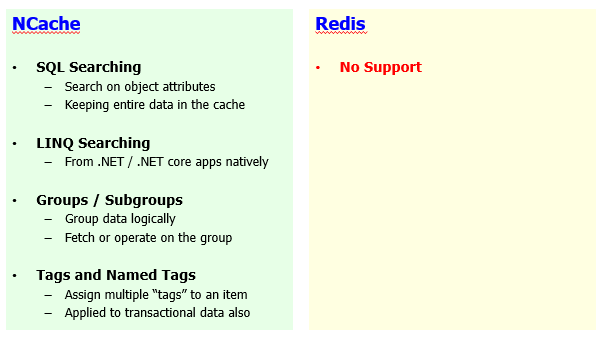

Data Grouping, SQL & LINQ Searching

Another aspect is data grouping and searching, where NCache is very strong and Redis does not have any features. And again, this is true for Azure Redis, which is very limited anyway. The open source Redis is slightly ahead but it's still limited in terms of features. Even Redis Labs, the commercial version of Redis, is not equipped with these features.

SQL searching is available. You can search items within NCache based on their object attributes. The objects are added in the cache. You can define indexes for their attributes. For example, products can be indexed on their ID, their price, their categories and now you can run searches on those products by using these attributes. A typical example would be select product where product dot category is something, or product dot price is greater than 10 and product dot price is less than 100, and NCache would run in-memory searching on all the items, on all the servers, consolidate results and bring you back the result set. So, you don't have to deal with keys anymore. You retrieve data based on criteria.
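As a rough picture of what that query does across servers, here is a small Python sketch. It is not NCache's query engine or syntax: the `Product` type and both functions are hypothetical, and it scans each partition instead of using attribute indexes, purely for brevity.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Product:
    id: int
    category: str
    price: float


def search_partition(partition: Dict[str, Product], category: str,
                     low: float, high: float) -> List[Product]:
    """Run the criteria against one server's in-memory items."""
    return [p for p in partition.values()
            if p.category == category and low < p.price < high]


def search_cluster(partitions: List[Dict[str, Product]], category: str,
                   low: float, high: float) -> List[Product]:
    """Run the same search on every partition and consolidate the results."""
    results: List[Product] = []
    for partition in partitions:
        results.extend(search_partition(partition, category, low, high))
    return sorted(results, key=lambda p: p.id)


# Two "servers", each holding part of the product data under arbitrary keys.
partitions = [
    {"p1": Product(1, "Beverages", 5.0), "p2": Product(2, "Beverages", 25.0)},
    {"p3": Product(3, "Snacks", 50.0), "p4": Product(4, "Beverages", 99.0),
     "p5": Product(5, "Beverages", 100.0)},
]

# Roughly: category is Beverages, price greater than 10 and less than 100.
matches = search_cluster(partitions, "Beverages", 10, 100)
```

The caller never touches the keys `p1` to `p5`; it only states the criteria and gets back the consolidated matches from both partitions.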

LINQ searches are also available. We have .NET and .NET Core apps. If you are using, you know, LINQ searching you can run LINQ searching on NCache as well. So, that's a feature unique to NCache, where Redis does not have any support. None of the flavors of Redis have this support.

You can have groups, sub-groups. You can logically make collections inside NCache. That's not available in Redis and you can retrieve, update and remove data based on those groups.

Tags and named tags can be attributed. For example, you can use keywords which can be attached to your items. A typical example would be that you can tag all customers with a customer tag, all orders with an order tag, and orders of a certain customer can also have a customer ID attached as a tag. When you need orders, you just say get by tag and provide the order tag, so you get all the orders. When you need orders of a certain customer, you say get by any tag or get by tag and give the customer ID, and it would get you the orders but only for that customer ID. So, that is the flexibility of using tags and named tags that you have with NCache. Redis does not support these.
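The customer and order tagging example can be sketched like this. It is an illustrative Python toy with a hypothetical `TaggedCache` class; the method names are assumptions, not the NCache tag API, and named tags are left out.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, Set


class TaggedCache:
    """Toy cache that indexes items by keyword tags (hypothetical names)."""

    def __init__(self) -> None:
        self._items: Dict[str, Any] = {}
        self._by_tag: Dict[str, Set[str]] = defaultdict(set)

    def put(self, key: str, value: Any, tags: Iterable[str] = ()) -> None:
        self._items[key] = value
        for tag in tags:
            self._by_tag[tag].add(key)

    def get_by_tag(self, tag: str) -> Dict[str, Any]:
        """Everything carrying this one tag."""
        return {k: self._items[k] for k in self._by_tag.get(tag, set())}

    def get_by_all_tags(self, tags: Iterable[str]) -> Dict[str, Any]:
        """Only items carrying every one of the given tags."""
        tag_list = list(tags)
        if not tag_list:
            return {}
        keys = set.intersection(*(self._by_tag.get(t, set()) for t in tag_list))
        return {k: self._items[k] for k in keys}


cache = TaggedCache()
cache.put("customer:42", "Alice", tags=["customer"])
cache.put("customer:43", "Bob", tags=["customer"])
cache.put("order:1", "order 1 for Alice", tags=["order", "customer:42"])
cache.put("order:2", "order 2 for Alice", tags=["order", "customer:42"])
cache.put("order:3", "order 3 for Bob", tags=["order", "customer:43"])

all_orders = cache.get_by_tag("order")
alice_orders = cache.get_by_all_tags(["order", "customer:42"])
```

Here the `order` tag returns all three orders, while combining it with the customer ID tag narrows the result to that one customer's orders.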

Server-Side .NET Code

We’ve already discussed that typically we use cache-aside pattern, where you first check data in the cache, if it's found there you return, if you don't find data in the cache you would go to the backend database in your application and then fetch that data and bring it in the cache. So, there's a null, you retrieve null and then you go to the database. You can automate that with the help of Read-Thru handler. It's a server-side code that runs on your NCache servers. Our managed service would also have this feature. So, you implement this interface which allows you to connect to any data source. It could be a web service, it could be a relational data source or non-relational data source and there are a bunch of methods which would be called as soon as there's a null found in the cache.

So, you call the Cache.Get method and enable the Read-Thru flag. If the item is not in the cache, the call is passed on to your Read-Thru handler and as a result you would fetch data from the backend database by going through that handler code, which is your user code running on the NCache server side. So, seamlessly you can go through NCache and get the data that you need.
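The read-through flow can be sketched in a few lines. This is a hedged Python illustration: `ReadThroughCache`, its `read_through` flag and the `load_from_database` handler are hypothetical stand-ins, not the actual NCache interface, and the "database" is just a dictionary.

```python
from typing import Callable, Dict, List, Optional


class ReadThroughCache:
    """Toy cache that calls a user-supplied handler on a miss (hypothetical names)."""

    def __init__(self, handler: Callable[[str], Optional[str]]) -> None:
        self._handler = handler
        self._items: Dict[str, str] = {}

    def get(self, key: str, read_through: bool = False) -> Optional[str]:
        if key in self._items:
            return self._items[key]      # cache hit, handler not involved
        if not read_through:
            return None                  # plain miss: caller gets null
        value = self._handler(key)       # miss: cache asks the data source
        if value is not None:
            self._items[key] = value     # keep it for the next caller
        return value


database = {"product:1": "Chai"}   # stand-in for the backend data source
handler_calls: List[str] = []      # records which keys hit the data source


def load_from_database(key: str) -> Optional[str]:
    handler_calls.append(key)
    return database.get(key)


cache = ReadThroughCache(load_from_database)
plain_miss = cache.get("product:1")                      # no flag, no load
first_read = cache.get("product:1", read_through=True)   # loaded via handler
second_read = cache.get("product:1", read_through=True)  # served from cache
```

Compared with cache-aside, the application no longer contains the "go to the database on null" branch; that logic sits behind the cache in the handler.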

Write-through is the opposite of it, which is also supported, and Redis does not have these features. Here you update something in the cache and now you want to update the database, so you can update the database by calling your write-through handler. You implement and register this write-through handler and NCache would call it to update the backend database. Write-behind is the asynchronous variant of it. On any update on the cache, the client application returns and NCache would update the backend database asynchronously behind the scenes. So, NCache can even improve your performance for write operations on the database, which is not possible with Redis or any other product if you don't have the ability to run server-side code. And these are purely native .NET and .NET Core class libraries that you can implement and register as read-through and write-through interfaces, which is possible with NCache.
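The contrast between write-through and write-behind can be sketched as below. This is illustrative Python with hypothetical names (`WriteCache`, `put_write_through`, `put_write_behind`, `flush`), not the NCache interfaces. To keep the example deterministic, an explicit `flush()` call stands in for the background worker that a real write-behind implementation would run.

```python
from collections import deque
from typing import Callable, Deque, Dict, Tuple


class WriteCache:
    """Toy cache showing synchronous vs queued database writes (hypothetical names)."""

    def __init__(self, writer: Callable[[str, str], None]) -> None:
        self._writer = writer
        self._items: Dict[str, str] = {}
        self._pending: Deque[Tuple[str, str]] = deque()

    def put_write_through(self, key: str, value: str) -> None:
        self._items[key] = value
        self._writer(key, value)            # caller waits for the database write

    def put_write_behind(self, key: str, value: str) -> None:
        self._items[key] = value
        self._pending.append((key, value))  # caller returns right away

    def flush(self) -> int:
        """Stand-in for the background worker: drain queued writes in order."""
        written = 0
        while self._pending:
            key, value = self._pending.popleft()
            self._writer(key, value)
            written += 1
        return written


database: Dict[str, str] = {}   # stand-in for the backend database


def write_to_database(key: str, value: str) -> None:
    database[key] = value


cache = WriteCache(write_to_database)

cache.put_write_through("order:1", "paid")
after_write_through = dict(database)    # already contains order:1

cache.put_write_behind("order:2", "shipped")
before_flush = dict(database)           # order:2 not written yet
flushed = cache.flush()                 # the queued write reaches the database
```

The write-behind call returns before the database is touched, which is where the write performance gain comes from; the trade-off is that the database lags the cache until the queue is drained.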

Cache loader is another feature. Where you can pre-populate the cache by implementing an interface and registering with NCache. So, each time you restart your cache, you automatically load some of your important data inside NCache and this runs on all servers simultaneously. So, it's super-fast. So, you can pre-populate all your data and you never have to go to the database again. You would always find that data because you pre-loaded it.

Custom dependency, Entry processor, these are again features which are unique to NCache, and there are a few reasons you cannot use them with Redis. First of all, Redis does not have these features, and modules are not supported in Azure Redis or even in open source Redis. Server-side code is not possible with Azure Redis, primarily because you don't have any access to the underlying VMs. This was the main reason I was referring to earlier: with NCache on a VM model at the moment, you have full access to where you deploy it, how you control it, how you manage it. So, you have full control. It's not a black box as Redis is.

WAN Replication for Multi-Datacenter

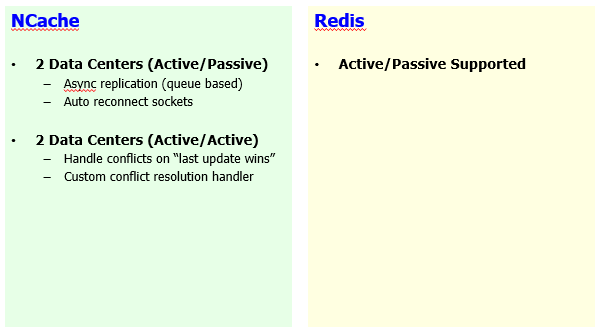

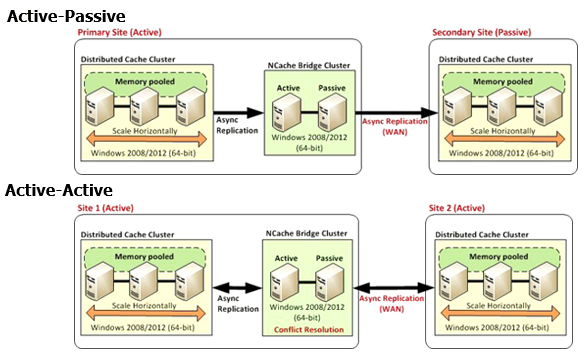

A few more details and then I'll conclude this. WAN replication is another aspect.

Active-passive is supported on Redis, where you can transfer an entire cache from one datacenter to the other. In NCache, we have active-passive. So, one-way transition of data from one datacenter to the other. We also have active-active, which is a very pressing, very important use case where you may have both sites active. You need site one’s updates on site two and vice versa. That ability is not there on the Redis side, so you cannot run active-active sites with Redis. With NCache this is true for your app data caching use case where you have data on both sites being updated and this is also possible through our multi-site sessions as well. So, that is another space where NCache is a clear winner.

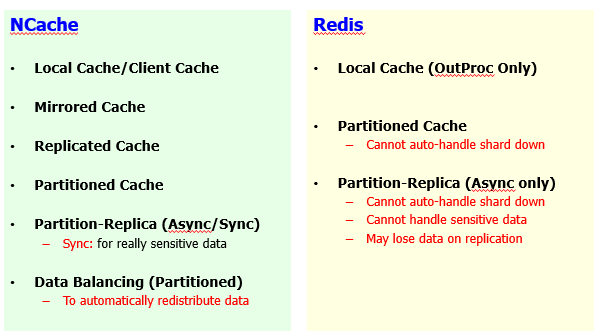

Cache Topologies

Then some other details. Caching topologies. We have a huge list of caching topologies in comparison to Redis.

So, based on different use cases we have Mirrored, Replicated, Partitioned and then Partition Replica. We already discussed how NCache clustering is better in general, and we have a lot more options in comparison to Redis offerings.

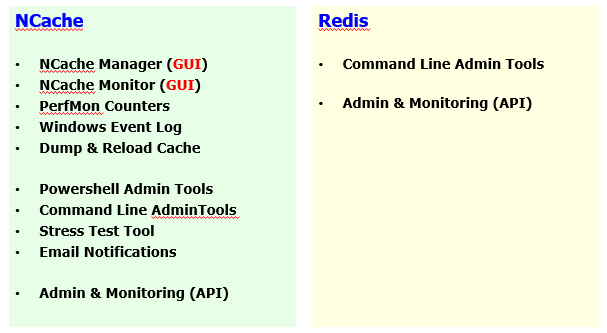

GUI tools - Cache Administration & Monitoring

Then we have GUI tools. Here's a Comparison. Manager, monitor.

We have PowerShell tools, we have dump and reload tools, and complete administration and monitoring aspects are built into it. Whereas Redis is limited on that front as well. As part of that I've shown you some details, and this is true for Windows as well as for Linux deployments of NCache.

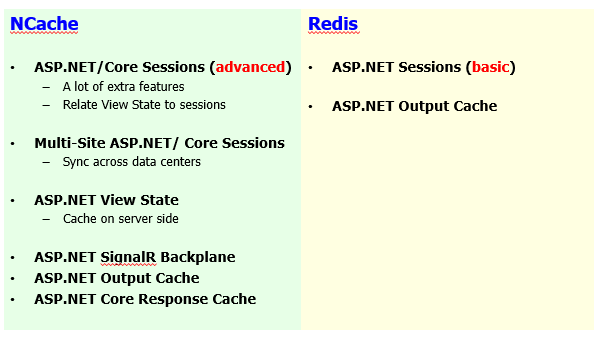

ASP.NET Specific Features

Some ASP.NET specific caching features.

Sessions, we have multi-site sessions, ASP.NET to ASP.NET Core session sharing is coming up. Multi-site ASP.NET and ASP.NET Core sessions are available. View state, output caching. Redis only has sessions, which is very basic in comparison to NCache. Session locking, session sharing is part of NCache. Output caching is supported on both ends and additionally we have SignalR Backplane, ASP.NET Core response caching. So, all of this makes a complete feature set for your web specific caching requirements. So, you can review the feature set in detail.

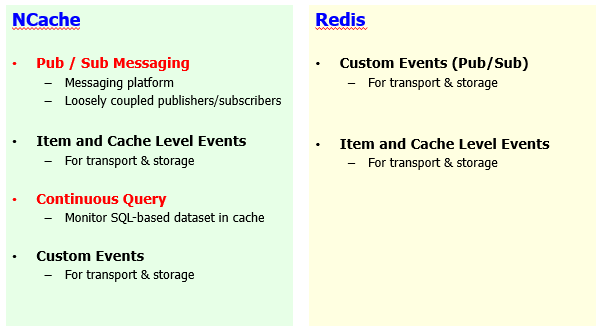

Runtime Data Sharing with Events

And then we have Pub/Sub messaging.

We have item level events and we have a criteria based event notification system as well, which is far superior in comparison to Redis. I have a separate webinar on our Pub/Sub messaging. So, I would highly recommend that you review that if there are any questions. So, I think I'll conclude at this point.

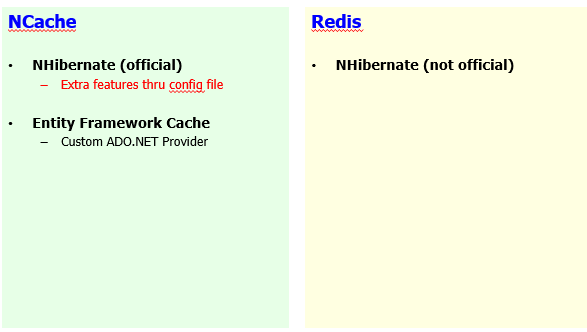

Third Party Integrations

Finally, third-party integrations.

We have NHibernate and Entity Framework as well. AppFabric wrapper is available. Memcached wrapper is available. So, if you're transitioning from those products, you can seamlessly transition to NCache in comparison to Redis.

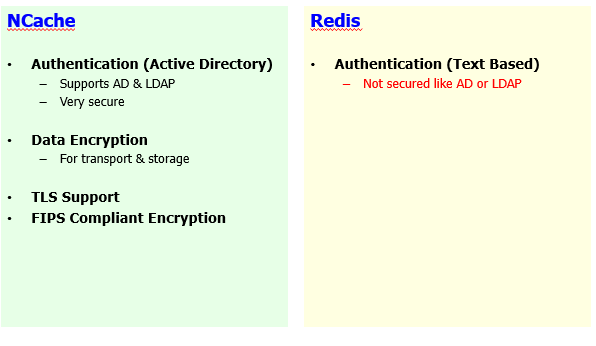

Security & Encryption

Some details on security and encryption, and I think we're already at the time marker.

Conclusion

So, it's a good time to conclude it. So, the first thing is that anytime you want, you can go to www.alachisoft.com and you can download an enterprise version of NCache and we will personally show you how it works in your environment. So, we encourage you to go straight to the website, get that 30-Day free trial of enterprise. Scheduling a demo is going to be our pleasure. In addition to that, we will have a recording of this webinar available to you. So, please keep an eye out in your emails and on social media when we get that out to you. If we didn't get to your questions today, and I know we've had a lot more coming and we're right at the edge, please, just email support@alachisoft.com.

If you have any technical queries, we will have an answer for you, and if you're interested in moving along and applying NCache in your environment you can just contact sales@alachisoft.com as well.