Scaling ASP.NET SignalR Apps with Distributed Cache

Recorded webinar

Kal Ali and Nick Zulfiqar

ASP.NET SignalR is an open source library for web developers that is commonly used these days to add real-time web functionality to applications. You can use ASP.NET SignalR to push content from the server side to all the connected clients as soon as information becomes available.

SignalR helps simplify the development effort needed to add real-time web functionality to your application. Some common applications that can use SignalR are chat systems, Internet of Things (IoT), gaming applications, airline booking systems, stock exchange applications and many more.

The problem with SignalR is that it is not scalable by default. In order to create a web farm, Microsoft provides the option of using a SignalR backplane, which can be generally defined as a central message store to which all the web servers are connected simultaneously. SignalR messages are broadcast to all the connected end clients through the backplane.

Typically, a SignalR backplane is a relational database, which is slow and cannot scale to handle the extreme messaging load that is a core requirement for high-traffic real-time web applications. The SignalR backplane also needs to be very reliable and highly available so that you have hundred percent uptime on the application end.

In this webinar you see how the NCache SignalR backplane is a better option than traditional options for deploying and scaling your real-time ASP.NET SignalR web applications with a backplane. We demonstrate how NCache can be used as your SignalR backplane without requiring any major coding.

Our Senior Solutions Architect covers:

- Introduction to ASP.NET SignalR and its common use cases

- Issues with scaling SignalR apps and with traditional backplane options

- Why NCache Backplane is a better option in comparison

- Using NCache as Backplane for your ASP.NET applications

- NCache NuGet packages for SignalR backplane

- Create, deploy and run SignalR apps using NCache backplane

So, today we will be talking about SignalR as a technology: how and where it is a possible candidate to be used within your applications, what issues are seen, especially once you introduce a Backplane into the scenario, what options you have, and how you can work around those issues. I'm going to be covering all of those things, and as a sample product I'll be using NCache. We'll get to that part later on; initially we'll just cover some basic theory.

Introduction to SignalR

So, let's get down to the actual introduction to SignalR. These are some definitions that I've copied from the Microsoft documentation. So, what is SignalR? SignalR is basically an open source library that developers can use to introduce real-time web functionality within their ASP.NET applications. Let me give you an example to explain what exactly I mean here. Say you have a user who is accessing a web page, and there is a certain bit of data present on the page. If that data needs to be fresh, or if the user wants to get updated data, in normal scenarios the user has to refresh the page: the user sends a request and, in response to that, the updated data is received. Either this, or some long polling needs to be implemented in the back. But if you have SignalR, this is not required. I'm going to get to those details later on, but this is one of the use cases where SignalR is a very good candidate.

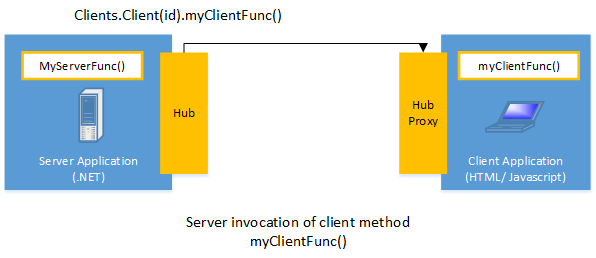

Secondly, SignalR gives you the ability to have server code push content right to the client side. So, the part where the client has to send a request to get the updated data is not necessary anymore. All the clients connected to the web server get updated as soon as there is some new data on the server. The server does not have to wait for a client to request new data; the data is automatically updated on the client. The best thing about SignalR is that it exposes this functionality to you. You just need to introduce the SignalR resources within your application and call some specific functions, and everything else is handled by SignalR. Your application uses SignalR to send a message, for example, from clients to servers or from servers to clients, whichever your scenario is. By everything else, I mean the transport layer: how the message gets transferred and how it is notified is all taken care of by the SignalR logic. You don't need to do it within your application; you just need to introduce these APIs, and everything else is handled automatically. So, if you look at this diagram right here.

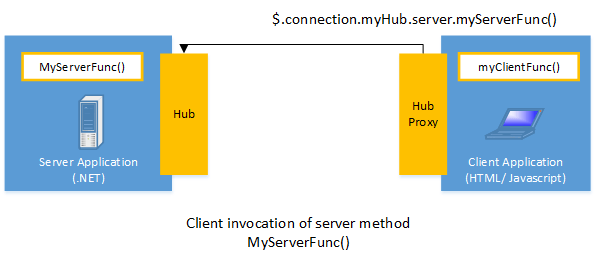

I'm just going to give you an example. In SignalR there is the concept of a Hub. In this diagram right here, this is a web server and this is a connected client. It can be an HTML or JavaScript client. The server is able to call this function myClientFunc() on the client end right here, and vice versa. If you look at this diagram.

The client is able to call this specific function myServerFunc() on the server end. We all know HTTP is a stateless protocol: there's a request and then there's a response, and nothing else remains afterwards. With SignalR, what happens underneath is that SignalR uses WebSockets to maintain connections. That is for the newer technologies; where WebSockets are not supported, it falls back to the older, more basic techniques. Through that persistent connection it is able to invoke functions from the server on the client end and from the client on the server end, depending on how it is done.
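The two-way calling that a Hub provides can be pictured with a small in-process sketch. This is not SignalR code and involves no network transport: `ToyHub` and its methods are hypothetical names made up for illustration, and only `myClientFunc` and `myServerFunc` come from the diagram described above.

```python
class ToyHub:
    """Hypothetical stand-in for a hub: each side can invoke named functions on the other."""

    def __init__(self):
        self.server_funcs = {}   # function name -> callable living on the server
        self.clients = {}        # connection id -> {function name: callable on that client}

    def register_server_func(self, name, func):
        self.server_funcs[name] = func

    def connect(self, connection_id, client_funcs):
        self.clients[connection_id] = client_funcs

    def call_server(self, name, *args):
        # Client -> server: a connected client invokes a function on the server.
        return self.server_funcs[name](*args)

    def call_clients(self, name, *args):
        # Server -> clients: the server invokes a function on every connected client.
        for client_funcs in self.clients.values():
            client_funcs[name](*args)


hub = ToyHub()
seen = {"browser-1": [], "browser-2": []}
for connection_id, inbox in seen.items():
    hub.connect(connection_id, {"myClientFunc": inbox.append})

# The server function pushes the (upper-cased) text to all clients without being asked again.
hub.register_server_func(
    "myServerFunc", lambda text: hub.call_clients("myClientFunc", text.upper())
)
hub.call_server("myServerFunc", "seat 12a booked")
print(seen)
```

In a real application the persistent connection (WebSockets or a fallback) carries these calls between machines; the sketch only shows the direction of the invocations.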

So, let me explain some general use cases for SignalR. The first one is a chat system. This is also a sample project which is shared by Microsoft themselves, and in my demonstration I'll be using a chat system provided by Microsoft with NCache integrated into it. But let's get back to the examples. Here you need real-time messages. For example, if you have a group with a number of members and any of the members sends a message, that message notification needs to be received by all the members of the group, and it needs to be done instantaneously. A delay of any sort cannot be afforded. It is the same case with the gaming industry. For example, you have online multiplayer games where a lot of syncing needs to be done, because there are a lot of players playing in the very same session together and the details of the game need to be synced with all of them.

The third use case is, for example, an airline booking system. If a ticket or a specific seat has been booked, all the other users who are currently viewing that specific data need to be notified that this ticket or seat is already booked and cannot be booked anymore.

Stocks are a similar example, because the values are constantly changing, going up and going down. They need to be updated on the client end pretty quickly, and that is a real requirement.

Another one is second screen content, which can be ads or any other sort of notifications that need to be shown, and then finally there's the Internet of Things. These devices are constantly gathering some bit of data, analyzing it and throwing notifications. All these things need a certain sort of reliability, and there's an urgency for them to reach the receivers. So, all of these use cases are pretty good scenarios where SignalR can be used, and generally speaking it is actually being used for them right now.

So, if I could just go to the next slide. Guys, if you have any questions you can always jump in and Nick would let me know if there's a question and I'll be happy to answer you.

Scaling out SignalR Applications

So, let's talk about scaling out SignalR Apps. So, if you look at this example right here.

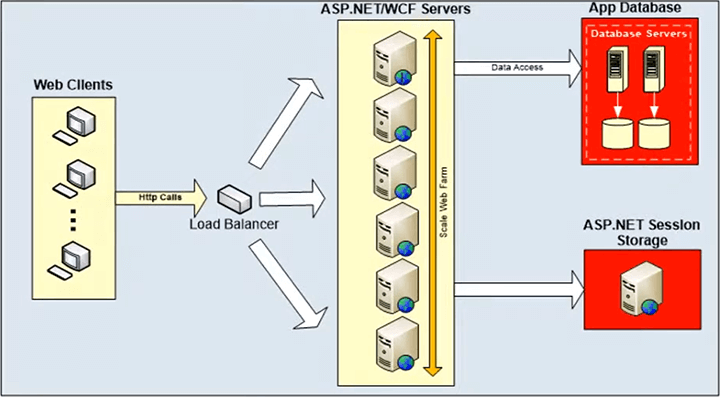

This example basically shows that you have a web farm. It could also be a web garden, but I'm giving the example with a web farm. So, you have a web farm here with a number of web servers, and you have a load balancer in between, which is routing all the incoming requests to the web servers present within your web farm. Then you have all these different clients, each of which would be connected to any one of the web servers within the web farm.

So, if you take this example right here, client A is connected to this web server, client B is connected to this web server and client C is connected to this web server. The issue here is that if this web server needs to send any sort of message or notification, it will only be able to send that notification to its own connected clients. In this case, if client A sends out a message, it would only be received by the clients connected to this web server, and in this case that's only one.

So, what if there's a group chat going on between at least three clients, and they are connected to three different web servers? If one of the clients sends a message, the other clients would not be able to receive it in this scenario, because only the clients connected to that specific web server would be able to receive the notification. So, delivery is limited to the clients connected to that one web server, and in order to get rid of this limitation, SignalR introduced a concept named the SignalR Backplane.
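The limitation can be reproduced with a toy model of the web farm. This is a minimal sketch, not SignalR code: the `WebServer` class and the server names are hypothetical, and the clients A, B and C mirror the diagram.

```python
class WebServer:
    """Hypothetical web server that only knows about its own connected clients."""

    def __init__(self, name):
        self.name = name
        self.clients = {}  # client name -> list of messages that client received

    def connect(self, client):
        self.clients[client] = []

    def send(self, message):
        # No backplane: the message reaches only clients connected to this server.
        for inbox in self.clients.values():
            inbox.append(message)


farm = [WebServer("web1"), WebServer("web2"), WebServer("web3")]
for server, client in zip(farm, ["A", "B", "C"]):
    server.connect(client)  # the load balancer placed each client on a different server

farm[0].send("hello from A")

delivered = {client: inbox for server in farm for client, inbox in server.clients.items()}
print(delivered)  # {'A': ['hello from A'], 'B': [], 'C': []}
```

Clients B and C never see the message, which is exactly the group chat problem described above.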

SignalR Backplane

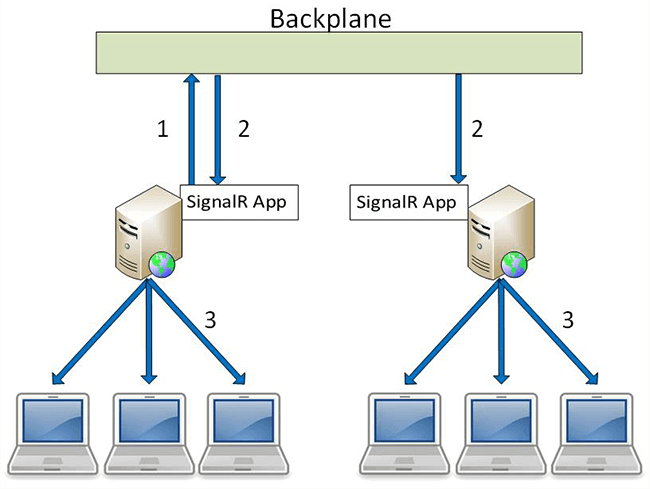

Let me explain what this is exactly. Take the same example but a slightly different scenario. You have a web farm right here and, let's assume, a load balancer in between, and all the client requests are coming in through that load balancer and going to their respective web servers.

So, in this case there's a shared bus, a shared repository, which is connected to all the web servers present within the farm. Let's assume you have ten web servers: all of them would be connected to this Backplane, and this is the common place that is used to broadcast messages or notifications to all the connected web servers.

So, in this case, if this web server is generating a notification or a message, it would not send it directly to its connected clients. It would first send it to the Backplane, and once the Backplane receives the notification or message, it sends it to all the connected web servers. After that, the web servers send it to their connected clients. In this way, it ensures that the message or notification is not limited to the connected clients of that specific web server; it is received by all the clients connected to that web farm. So, let's take this step by step.

So, for example, there is a notification that this web server has to send. The first thing it does is notify the Backplane, and the Backplane then broadcasts it to all the connected web servers. So, the message is first received by the Backplane, then the Backplane sends it to server one and to server two right here, and after that these web servers send that message to all of their connected clients.
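The step-by-step flow above (web server to Backplane, Backplane to every web server, each web server to its own clients) can be sketched in a few lines. This is an in-process toy under stated assumptions, not the SignalR or NCache implementation; `Backplane`, `WebServer` and their methods are hypothetical names.

```python
class Backplane:
    """Hypothetical shared bus that every web server in the farm attaches to."""

    def __init__(self):
        self.servers = []

    def attach(self, server):
        self.servers.append(server)
        server.backplane = self

    def publish(self, message):
        # Step 2: broadcast to every attached web server, including the sender.
        for server in self.servers:
            server.deliver_locally(message)


class WebServer:
    def __init__(self, name):
        self.name = name
        self.clients = {}      # client name -> list of received messages
        self.backplane = None

    def connect(self, client):
        self.clients[client] = []

    def send(self, message):
        # Step 1: hand the message to the backplane instead of the local clients.
        self.backplane.publish(message)

    def deliver_locally(self, message):
        # Step 3: each server forwards the message to its own connected clients.
        for inbox in self.clients.values():
            inbox.append(message)


backplane = Backplane()
farm = [WebServer("web1"), WebServer("web2"), WebServer("web3")]
for server, client in zip(farm, ["A", "B", "C"]):
    backplane.attach(server)
    server.connect(client)

farm[0].send("hello from A")
delivered = {client: inbox for server in farm for client, inbox in server.clients.items()}
print(delivered)
```

Every client in the farm now receives the message, regardless of which web server it is connected to.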

So, in the earlier scenario, if there's a group chat going on as I mentioned, the clients would not get a consistent experience or view. In this case, if there's a group chat going on, all the clients have a consistent view: they are notified instantly of any update or new message that comes in. That is the main benefit of introducing a Backplane into the scenario. Now we're going to see what limitations have been seen with the Backplane scenario and what options we may have.

Bottlenecks with SignalR Backplane

Okay. So, I was mentioning bottlenecks with the SignalR Backplane. These are the main bottlenecks that are seen within the SignalR Backplane, and they are also the criteria against which any possible candidate for a SignalR Backplane can be measured.

Latency and Throughput

So, the first one is latency and throughput. A SignalR Backplane needs to have very low latency and high throughput. Let me explain what low latency means. Latency is basically the time the Backplane takes, once it receives a message, to process that message and then broadcast it to all the connected web servers in this scenario.

So, it needs to be minimal. What this means is that as soon as the Backplane gets a message, it needs to quickly send it to all the connected web servers in the web farm. Throughput is the total number of messages that it can process in a certain time frame, which can be one second, ten seconds or even a hundred seconds, depending on whatever measuring scale is used.
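As a worked illustration of the two definitions, the sketch below computes both figures from a list of timestamps. The numbers are made up for illustration and are not measurements of any product; `backplane_stats` is a hypothetical helper name.

```python
def backplane_stats(events_ms, window_seconds):
    """events_ms holds (received_at, broadcast_at) pairs in milliseconds.

    Latency is the time between receiving a message and finishing its broadcast;
    throughput is the number of messages handled per second of the window.
    """
    latencies = [broadcast_at - received_at for received_at, broadcast_at in events_ms]
    average_latency_ms = sum(latencies) / len(latencies)
    throughput_per_second = len(events_ms) / window_seconds
    return average_latency_ms, throughput_per_second


# Four illustrative messages observed over a one-second window.
events_ms = [(0, 2), (100, 103), (200, 201), (300, 302)]
average_latency_ms, throughput_per_second = backplane_stats(events_ms, window_seconds=1.0)
print(average_latency_ms, throughput_per_second)  # 2.0 4.0
```

The two figures are measured separately: latency is taken per message, while throughput is a count over a time window.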

Usually, in on-prem deployments, applications have a database as their Backplane, but there are a few limitations with databases. Databases are generally slow, they're unable to scale out, and they usually choke under high transaction load. They are unable to scale out because it's a single server, perhaps with a backup, and there's a certain limit to the number of incoming requests it can process. After that it starts to get overwhelmed by these notifications and transactions, and that starts to affect your application. So, you can scale out the number of web servers within your environment to handle increasing load on your web farm, but when those applications have to get in touch with a database in the back end, for example, that's where the bottleneck is, and that's where all of your applications take a hit on performance.

Guaranteed Delivery of Messages

The next thing is guaranteed delivery of your messages. A SignalR Backplane must be reliable; it should ensure guaranteed delivery of messages. There should not be a scenario where a message was not delivered, was missed, or for whatever reason was never received. So, it needs to guarantee delivery of messages.

Another point is that, as I mentioned earlier, a database can choke under extreme load, and it is a single point of failure as well. If that specific server goes down, your whole environment basically goes down. That should not be the case; there should be some sort of efficient backup so that, in an emergency or whatever the scenario is, the system can recover very quickly.

High Availability of Backplane

The third one is that it needs to have high availability. The SignalR Backplane needs to be highly available: whatever the scenario, if there is any sort of issue, the whole system should not go down. There should be some sort of backup that can help continue the operations, so they are not affected if there is a situation. That backup should be able to keep the environment going till everything can be restored back to normal. This applies to planned scenarios, where there is some sort of maintenance, and to unplanned scenarios, where anything could go wrong.

So, if we look at this diagram right here.

We can look at the scalability bottleneck with a database, and that's exactly what I was explaining to you guys just now. Right here, you have all your clients, whose requests are routed through the load balancer, and this is your web farm. As I mentioned earlier, you can scale this web farm: you can increase the number of web servers, and that in turn increases the total number of requests that your environment can take at a single point in time. But the actual issue lies where these web servers have to get in touch with the database at the back end. That could be for whatever scenario; it need not be just for SignalR, it could be any other scenario as well.

In-Memory Distributed Cache as SignalR Backplane

So, I'm going to present the solution. The solution to this is an in-memory distributed cache as a SignalR Backplane and, as mentioned earlier, I'm going to use NCache as a sample product for that. NCache is an open source product. We have different versions and you can evaluate those if needed.

Cluster of Cache Servers

So, let me explain what an in-memory distributed cache is. Basically, an in-memory distributed cache is a cluster of inexpensive cache servers. You have different cache servers hosting the very same cache; it is logically a single unit, but underneath there are multiple servers hosting this cache. Once multiple servers are joined together, they're not only pooling their computational power together but also their memory resources. So, as you add servers, you scale out on the number of transactions that can be done in a given time and also on the total amount of data that can be stored.

Right now, I'm explaining in general terms what an in-memory distributed cache is. I'm not talking in reference to SignalR yet, but I will map all these features onto the bottlenecks that were discussed earlier. A distributed cache could be used for an object caching scenario or a session caching scenario; what I'm describing applies to all of those, and I will map these features onto SignalR as well.

Cache Synchronized Across Servers

So, here multiple servers are pooling their memory resources and their computational power together. The next thing is that the cache is synchronized across the servers. With reference to NCache, say you have four cache servers hosting the very same cache and some new data gets added to the cache. There's a distribution map which gets updated as soon as data gets added, updated or even removed.

So, at any point in time, even if only one of the cache servers gets a new item added to it, all the other servers within the cluster are automatically and instantly notified that there's a new item in the cache. This ensures that the whole environment stays in sync. There are different topologies through which data is kept within the cache, and that's a separate discussion, but generally speaking, as soon as an item gets added, all the servers in the cluster get notified about it. That way the item is immediately visible to all the servers present within the same cluster.

Linear Scalability

The third one is that it is linearly scalable. As I mentioned earlier, these are multiple servers which are pooled together, and there's no limit: you can add as many servers as required and the cache adjusts automatically. Adding servers increases the total number of requests it can handle, and it also increases the total amount of data that can be added to the same cache. So, as you scale your application tier, you can also scale your caching tier, and that was one of the bottlenecks with databases. You can never scale your database environment, but with this you can definitely scale your whole cache, and in that way you can actually increase your application's performance as well.

Data Replication for Reliability

The fourth is data replication for reliability. As I mentioned earlier, these are multiple servers which are pooled together, and depending on the topology there are replicas being maintained on other servers. So, even if one of the servers goes down, the data of that lost server is replicated on one of the other servers. That server automatically starts the recovery process: it brings up that data and puts it back into the active partitions of the cache, if we're talking about Partition of Replicas, for example, as an NCache topology. So, data is not lost even if one of the servers is gone. If there are any clients connected to the server that was lost, they automatically detect that and fail over their connections to the remaining servers present within the cluster. This is another key thing: it is not a single point of failure. As long as you have one cache server remaining in the cluster, your cache is functional and responds to the requests that are coming in. Even if one of the servers goes down, the whole cache does not go down, your environment does not go down and your applications continue to work just fine. So, now I'm going to map all these things that I've just discussed onto the bottlenecks that were previously discussed for a SignalR Backplane.
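The recovery idea can be illustrated with a toy partitioned cache that keeps a backup of each partition on a neighbouring server. This is a simplified sketch under stated assumptions and not NCache's actual algorithm: the class, its methods, the ring-style backup placement and the CRC-based key distribution are all hypothetical choices made for illustration.

```python
import zlib


class ToyPartitionedCache:
    """Hypothetical cache: each server owns a partition and backs up its predecessor's."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.active = {s: {} for s in self.servers}   # data each server owns
        self.backup = {s: {} for s in self.servers}   # copy of the previous server's data

    def _owner(self, key):
        # Deterministic stand-in for a distribution map.
        return self.servers[zlib.crc32(key.encode()) % len(self.servers)]

    def _backup_holder(self, owner):
        index = self.servers.index(owner)
        return self.servers[(index + 1) % len(self.servers)]

    def put(self, key, value):
        owner = self._owner(key)
        self.active[owner][key] = value
        self.backup[self._backup_holder(owner)][key] = value

    def get(self, key):
        return self.active[self._owner(key)].get(key)

    def fail(self, server):
        # The failed server's partition survives as a backup on its neighbour.
        surviving = dict(self.backup[self._backup_holder(server)])
        for other in self.servers:
            if other != server:
                surviving.update(self.active[other])
        # Recovery: redistribute everything across the remaining servers.
        self.servers.remove(server)
        self.active = {s: {} for s in self.servers}
        self.backup = {s: {} for s in self.servers}
        for key, value in surviving.items():
            self.put(key, value)


cache = ToyPartitionedCache(["cache1", "cache2", "cache3"])
for i in range(20):
    cache.put(f"msg{i}", i)

cache.fail("cache2")
after_first_failure = all(cache.get(f"msg{i}") == i for i in range(20))
cache.fail("cache3")
after_second_failure = all(cache.get(f"msg{i}") == i for i in range(20))
print(after_first_failure, after_second_failure)  # True True
```

The sketch handles one failure at a time and rebuilds the backups after each one, which is why the data is still readable with a single server remaining.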

So, if you go right here, the first one was latency and throughput. Latency, as I mentioned earlier, is the amount of processing time. Say you have a lot of servers generating a lot of messages, and those requests are coming in to the Backplane, which in this case is NCache. If you feel like your environment is getting choked, you can add more servers, and that increases the total capacity for handling that number of requests.

Kal, I have a question here. How complex is it to set up NCache as a SignalR Backplane? It's quite easy, and we have a very detailed, well-explained document which helps you work through it. I'm going to be going through exactly that in the hands-on demo part, and I will refer back to this question there.

So, coming back to the bottlenecks that are generally observed with the SignalR Backplane, and mapping NCache, or a general distributed cache feature, onto them. As I mentioned earlier, your latency gets really low as you have multiple servers that can handle the incoming requests. That automatically decreases the latency, and as you have low latency, the throughput automatically goes up, and in general your overall performance goes up.

The second is guaranteed delivery of messages. Within the NCache client-to-server logic, there are a lot of checks being maintained. If, for example, a client tries to connect to the clustered cache and, for whatever reason, is unable to deliver its message, there are internal retries that are implemented. The NCache client automatically tries to reconnect to the cache server, and this is all configurable: you can configure the number of retries and how long it should keep retrying. So, you can ensure that your client is able to connect to your cache cluster. And even when a connection is already established and is found to be broken, in that scenario too it ensures that the message actually gets delivered.
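The retry behaviour described here follows a common pattern, sketched below in generic form. This is not the NCache client or its configuration: `send_with_retries`, `max_attempts`, `retry_interval` and `FlakyServer` are hypothetical names used only to show the idea of a configurable number of retries.

```python
import time


def send_with_retries(send, message, max_attempts=3, retry_interval=0.0):
    """Try to deliver a message, retrying on connection errors up to max_attempts times."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return send(message), attempt
        except ConnectionError:
            if attempt == max_attempts:
                raise                      # give up and surface the failure
            time.sleep(retry_interval)     # wait before the next attempt


class FlakyServer:
    """Hypothetical server that refuses the first few deliveries."""

    def __init__(self, failures):
        self.failures = failures
        self.received = []

    def send(self, message):
        if self.failures > 0:
            self.failures -= 1
            raise ConnectionError("cache server unreachable")
        self.received.append(message)
        return "ack"


server = FlakyServer(failures=2)
result, attempts = send_with_retries(server.send, "hello", max_attempts=5)
print(result, attempts, server.received)  # ack 3 ['hello']
```

The caller either gets an acknowledgement or an explicit error after the last attempt, so a message is never silently dropped.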

The third one is high availability of the Backplane, and this is one of the key things I mentioned initially: you have multiple servers hosting the cache. If one of the servers goes down, it does not mean the whole cache goes down. Your other servers are able to handle the load that is coming in, and the client connections fail over to the remaining servers present within the cluster. So, in this way it ensures high availability, it ensures that the messages actually get delivered, and lastly it ensures that performance is greatly increased: as you scale out your application tier, you can also scale out the caching tier.

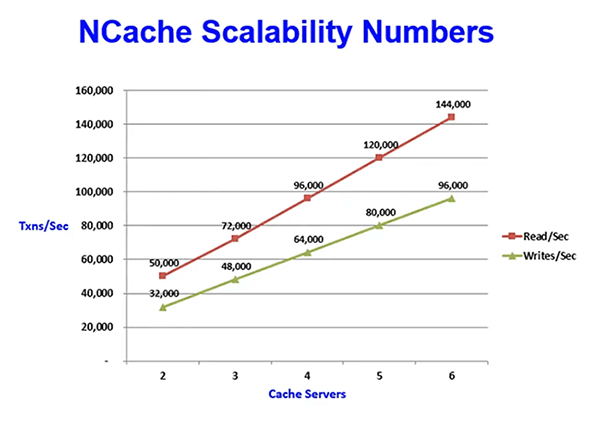

Kal, another question: how much performance improvement can we get if we use NCache as a SignalR Backplane in comparison to databases, and are there any benchmarks? Okay, specific to SignalR, I may have to look those benchmarks up, but generally, in terms of the number of transactions that are being done, I can definitely show those later on. There was a screenshot, so I can show that.

Right there. So, these are the scalability numbers. They're specific to the topologies as well, but these are the general ones. As you add servers, you increase the total number of transactions that can be done, and the transactions are broken down into reads and writes per second. These can definitely be compared with databases: because a database is not scalable, there would come a point where the cache comes out ahead of the database.

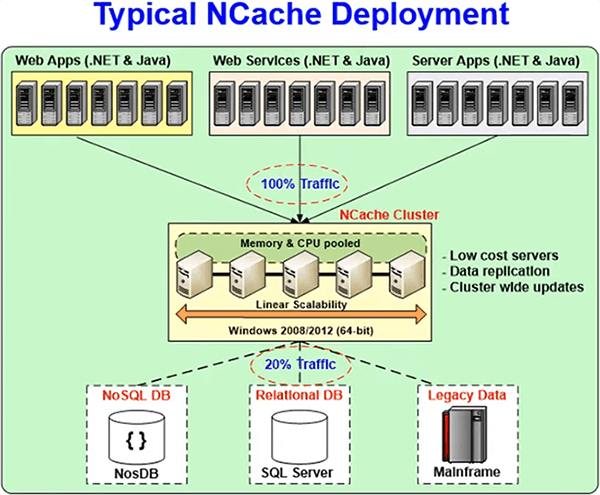

Okay. So, coming up here. This is a typical deployment of NCache.

So, we recommend having a separate caching tier, as you can see here, but you can also have the cache on the very same servers as your application. That's also supported, but we recommend a separate caching tier, and I'm going to be talking in reference to that. So, this is your application tier, where you have all your application servers, and this is your caching tier. Again, it's linearly scalable; you can add as many servers here as required. As I mentioned earlier, these servers do not require any specific hardware. They don't require anything high-tech; they're inexpensive servers. The only prerequisite of NCache is .NET 4.0; otherwise NCache is supported on all Windows environments. The recommended operating systems are Windows Server 2008, 2012 and 2016; whichever one has .NET 4.0 on it can definitely work with NCache.

There are two different installation types. There is the NCache cache server installation, which can actually host a cache, and then there's the remote client installation. A remote client can have a local, basically standalone, cache, and it has the libraries and resources for clients to be able to connect to the remote clustered caches. As I mentioned earlier, the clustered caches are the ones hosted on cache server installations. So, this is a typical deployment: all your applications are connected to the caching tier, and the caching tier is also connected with the database in the back. We have features through which that is supported as well. So, NCache can be used not only as a Backplane for SignalR but also for data caching and session caching, and we have other webinars and documentation which help you configure those if you're interested.

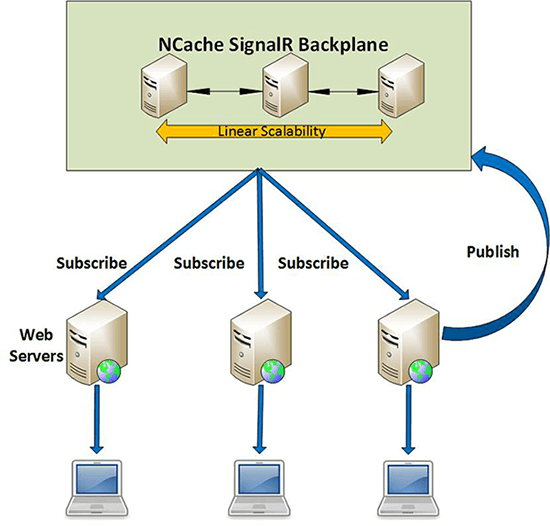

Okay. So, now this diagram basically shows NCache deployed as a SignalR Backplane.

So, it is quite similar to the one we were initially looking at. This is your web farm right here, and let's assume a load balancer up here through which all the client requests are being routed. Instead of a plain Backplane, NCache is used as the Backplane right here, and you have a number of servers here, which are linearly scalable as I mentioned earlier. In this case we have three web servers and three cache servers. This web server right here generated a notification or a message and sent it to the Backplane, which in this case was NCache. What would NCache do? It would in turn publish, basically broadcast, this notification to all the subscribers of this message. So, once this message was received by NCache, it was broadcast to all the connected web servers, and after that it was sent to all the connected clients. In this way it ensured that all the connected clients had a consistent view and experience while working with the application, with NCache plugged in as the Backplane. And these are the numbers that I've already talked about. As you add servers, you can see that there's a linear improvement in the number of reads and writes being done. So, it's linearly scalable; there's no stopping it, and you can add as many servers as required within your environment.

Hands on Demo

So, I'm going to go to the actual hands-on demo part, and this is where I will be demonstrating how to create a cache. I'll be quickly skimming through it because that's not part of the agenda, but we do have other webinars in which we cover each and every detail and talk about the best practices and other general recommendations for creating a cache and configuring the different settings related to the cache creation process.

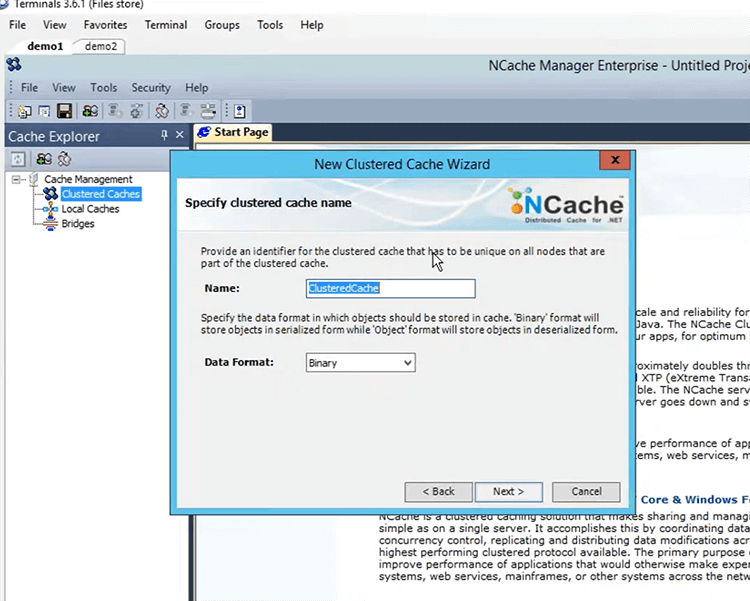

Install NCache and Create a Clustered Cache

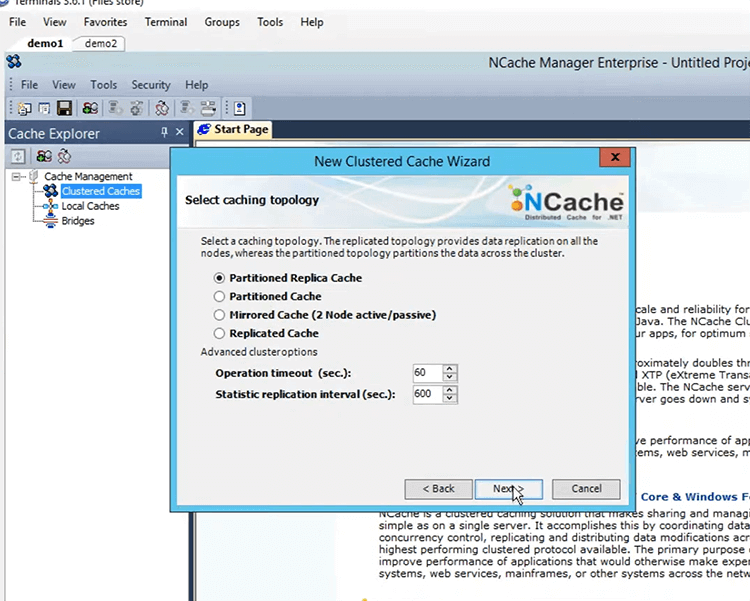

So, the first thing that you need to do is download a copy of NCache from our website and then install it within your environment. I have two machines which have NCache already installed on them, and I'll be using those. So, I'm just going to log into those. I have these logged in, demo1 and demo2 right here. With NCache there comes a tool, NCache Manager, through which you can create, configure and manage caches. When you open up NCache Manager, this is the view that you get. If you right-click on Clustered Caches and click on Create New Clustered Cache, this is the view that you get.

So, all caches need to be named. I'm going to go ahead and give it the name SignalRCache. The name is not case-sensitive; you can use it in any way. Click Next. These are the four topologies, and we have these covered in other webinars.

This is the replication strategy; I'm going to keep everything default, and I'm just going to configure the two cache servers that I've just shown you, demo1 and demo2 right here. Just going to click on Next. This is the port on which the cluster communicates. NCache uses TCP/IP ports to maintain its connections. So, I'm just clicking Next. This is the cache size. If you're using NCache as a Backplane for SignalR, the size doesn't really matter, because it's just a queue that's going to be maintained within the cache, and as soon as a message gets sent out to the connected clients it is going to be removed. So, besides a single item, there's nothing more that is going to be stored within the cache. I'm going to talk about that single item later on, when I look at the actual sample that comes with it.

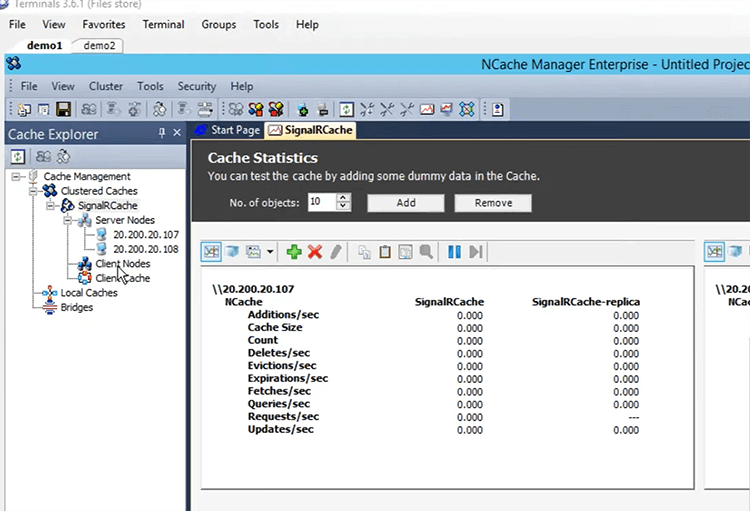

So, I'm just going to keep everything default right here. I'm going to turn off evictions because I don't want anything to be removed, even in the case that the cache gets full. I'm going to check this box, which is going to start the cache when I click Finish, and then click on Finish. So, that's how easy it is to create a cache within your environment using NCache. I'm just going to wait for the cache to start, and then I'm going to add a remote client to it; that's going to be my personal machine right here. Okay, so the cache is started, and we can see right here that there's zero activity going on at this point, but that's because we have no application connected to this cache right now.

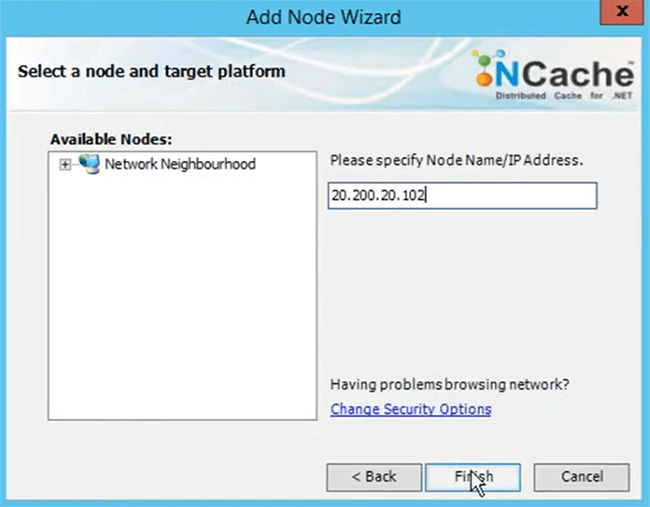

So, I'm just going to go ahead and add my machine as a client node. To add a client, I just right-click here and click on Add Node. I'm going to give it my personal machine's IP; it can be a machine name or an IP, both are supported. Then I just click on Finish right here.

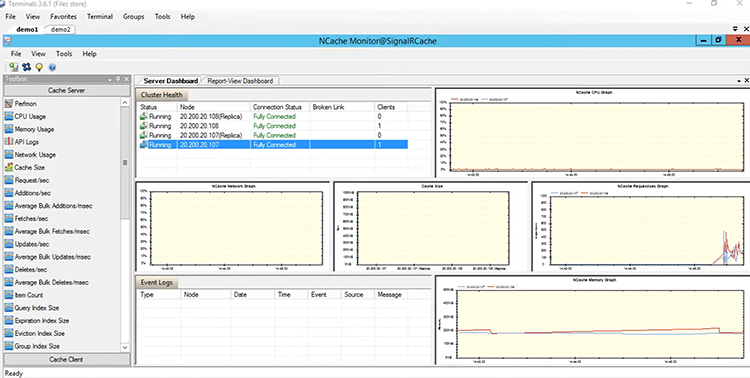

We can see that my machine is added as a remote client right here. So, I'm just going to quickly test this cache to see if everything is working just fine. Actually, before that, let me show you the monitoring tool of NCache. If you're using NCache as a Backplane, you can use the monitoring tool, which is an excellent tool that gives you quite a bit of detail about exactly what is going on in the cache. It helps you debug issues, and you get a lot of detail around how the cache is performing, where the actual hits are coming from, and what can be improved overall. So, if I just right-click on the cache name right here, I can click on Monitor Cluster. This opens NCache Monitor, which can be used to monitor the cache.

It shows you a bunch of different things right here. If you just open up the server dashboard, it gives me a pretty good view of exactly what is going on in the cache through different graphs: this is the CPU graph, this is the total network that is being used, this is the cache size, and this is the total requests that are coming in. So, it shows you quite a few different things that can be looked at when you have a working environment. What I'm going to do now, from my personal box right here, is run the StressTest tool application to simulate some dummy load on the cache. This tool comes installed with NCache. So, I'm just going to look for it. I'm just going to type STR, and the StressTest tool is going to come up.

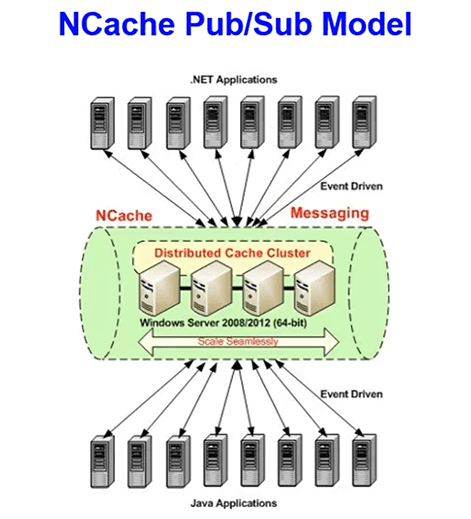

So, Kal, while you're pulling that up, can you answer what sort of framework NCache uses for SignalR? Okay. So, basically NCache is using the Pub/Sub model. All these clients, which are basically web servers, are connected as publishers as well as subscribers. So, any of the clients can publish a message, and since all the connected clients are also subscribers, they'll get notified about it as well. So, it's basically the Pub/Sub model. We have a completely separate API, as well as a whole framework, just for Pub/Sub. Underneath the SignalR Backplane, Pub/Sub is being used, but you can use it separately as well.
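The publisher-and-subscriber arrangement described here can be pictured with a small in-memory sketch. This is not the NCache API: `Channel`, `WebServerNode`, and `Demo` are hypothetical names used only to illustrate the idea that every web server both publishes to and subscribes on the same channel, so a message sent from any one of them reaches all of them.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory stand-in for the backplane channel (not the NCache API).
public sealed class Channel
{
    private readonly List<Action<string>> _subscribers = new List<Action<string>>();

    // Register a callback that is invoked for every published message.
    public void Subscribe(Action<string> onMessage)
    {
        _subscribers.Add(onMessage);
    }

    // Deliver the message to every subscriber, including the publisher itself.
    public void Publish(string message)
    {
        foreach (var subscriber in _subscribers)
        {
            subscriber(message);
        }
    }
}

// Hypothetical web server node: it subscribes on creation and can also publish.
public sealed class WebServerNode
{
    private readonly Channel _channel;

    public string Name { get; }
    public List<string> Received { get; } = new List<string>();

    public WebServerNode(string name, Channel channel)
    {
        Name = name;
        _channel = channel;
        _channel.Subscribe(message => Received.Add(message));
    }

    public void Send(string message)
    {
        _channel.Publish(Name + ": " + message);
    }
}

public static class Demo
{
    public static void Main()
    {
        var chat = new Channel();
        var server1 = new WebServerNode("server1", chat);
        var server2 = new WebServerNode("server2", chat);

        server1.Send("hello");
        server2.Send("hello from Nick");

        // Both nodes end up with the same two messages, in the same order.
        Console.WriteLine(string.Join(" | ", server1.Received));
        Console.WriteLine(string.Join(" | ", server2.Received));
    }
}
```

In a real deployment the channel lives in the distributed cache rather than in one process, which is what lets web servers on different machines see each other's messages.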

Add Sample Data and Monitor Cache

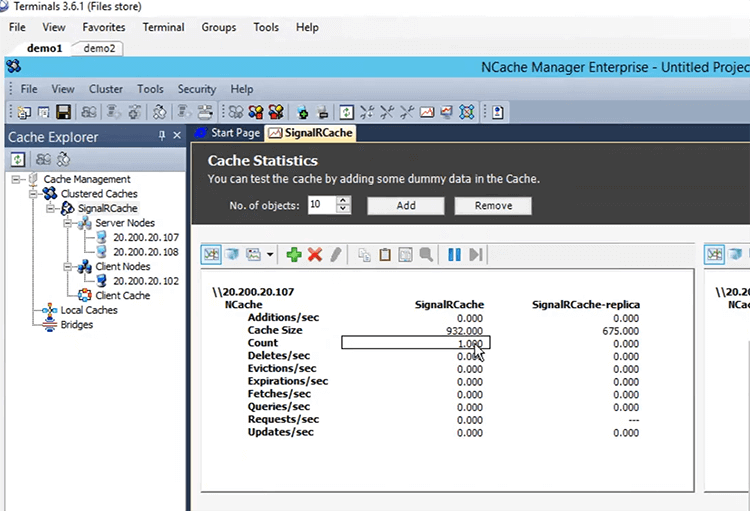

Okay. So, I'm just going to open up the StressTest tool. This is the location where it lives, C:\program files\NCache\bin\tools, so we just look for the StressTest tool right here. I'm just going to open up an instance of the command prompt and drag and drop it here. Okay, actually, I hit Enter too quickly, so let me just clear the screen. Okay, so this is the StressTest tool. I'm going to type a space and then give the cache name, which was signalrcache, and hit Enter. Now, if I go back to this view right here and open up the statistics, we should see requests coming in. Okay, we see some requests coming in now, and right here you can see that there are a bunch of different requests coming in to both of the cache servers. So, it seems like everything is working just fine and it's taking requests as well. If you open up the monitoring tool right here, you can see that there's one client connected here and one client connected here, and if we look right here, we see that there's a big jump in the total number of requests that are coming in. You can also see that the memory graph is taking a bit of a hit as well.

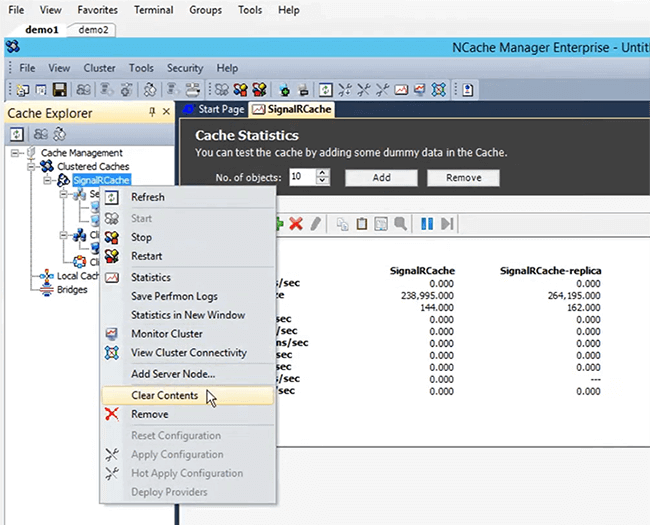

So, we've verified that it's actually working. I'm just going to stop this tool now and go back to NCache Manager. I'm going to right-click here and clear the contents of this cache.

Run SignalR Chat Sample

So, now that we have a cache set up and we've tested it, it seems to be working just fine. What I'm going to do is open up a sample project that comes installed with NCache, and I'm going to follow the documentation that is published on our website, step by step, on how you can configure your own ASP.NET application to use NCache as a Backplane. So, if I just go right back into the install directory of NCache, that is c:\program files\ncache, I can go into the samples folder and then into dotnet, and right here we can see that there's a SignalRChat sample. This comes installed with NCache, and this is the very same one that I'm going to be using in my demonstration. I've got it already opened up right here. So, I'm going to open up the documentation, which gives you a step-by-step view of how you can configure it within your own specific environment.

So, if we just go right here, the first thing that you need to do is install the NuGet package, and let me show you exactly which NuGet package we're talking about. If we just right-click here and click on Manage NuGet Packages for Solution, and then go into Browse, we're browsing on nuget.org. I'm just going to search for NCache, and if you go a little bit down, I think I may have missed it, but it's the community SignalR one. Okay, there's no time for me to find it, so: the name of the NuGet package is Alachisoft.NCache.SignalR. This needs to be installed within your application. I don't need to install it at this point because I have an installation of NCache and have already referenced the required libraries, but if you're not using the NCache installation, you can definitely just add this as a NuGet package. You just need to install it within your application's project.

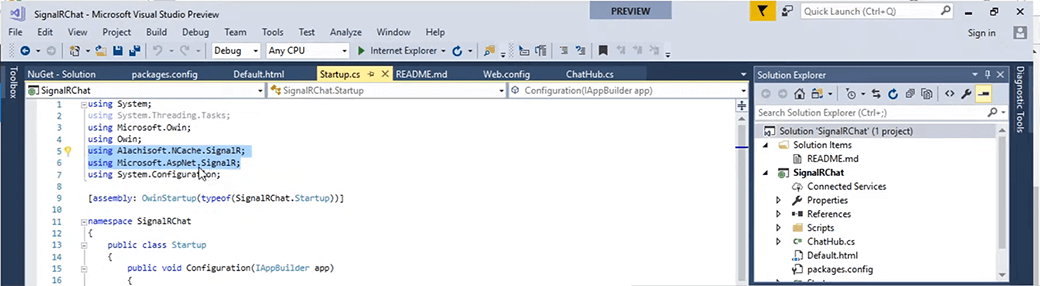

The next step here is to include these two namespaces, Alachisoft.NCache.SignalR and Microsoft.AspNet.SignalR. These need to be introduced in Startup.cs. I'm just going to be following the sample project, but you need to do it in reference to your own specific setup. So, if you go into Startup.cs right here, right at the top, we can see that these two are already referenced: Alachisoft.NCache.SignalR and Microsoft.AspNet.SignalR. So, it's already added here.

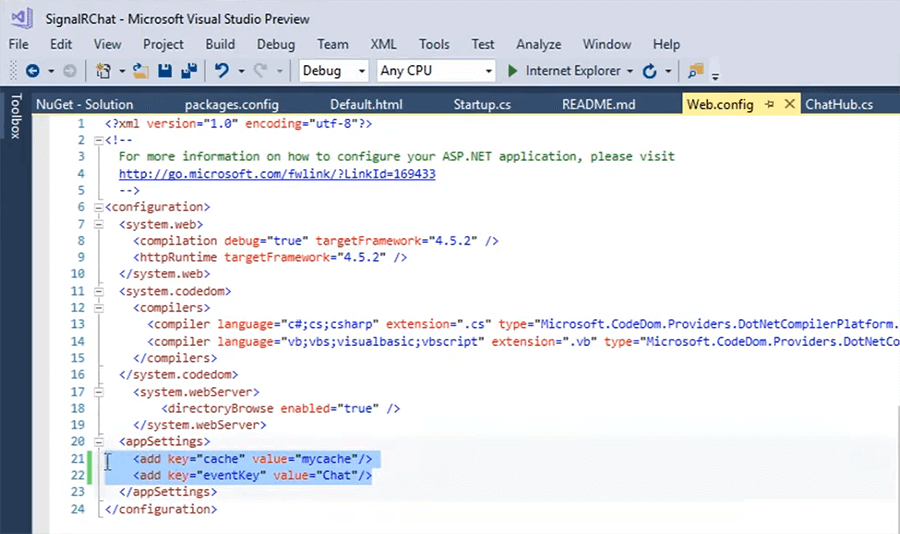

Let's go look at the next step. Now we need to modify the web.config. We need to add these two specific keys right here, so let me explain what these are. The first one, which goes by the name cache, is the actual cache name. You need to give it the name of the cache that you're going to be using, which needs to be up and running.

The second is the event key, and this is what differentiates between multiple applications when you have the same cache being used as a Backplane for several of them. It basically opens up a channel within the cache through which all the applications that have the very same value are able to get notified. So, if there are two applications which need to communicate with each other, they need to have this value be the same, but if they are different applications, you can change it according to your specific environment. Right now, it is set to Chat right here, and if we go back into the sample project and go into the web.config, we can see that it is set up right here.
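As a rough illustration of the two keys just described, the appSettings section of web.config might look like the fragment below. The key name cache and the values signalrcache and Chat come from the demo; the exact spelling of the second key (eventKey here) is an assumption and should be checked against the NCache documentation for your version.

```xml
<configuration>
  <appSettings>
    <!-- Name of the running NCache cache that acts as the backplane -->
    <add key="cache" value="signalrcache" />
    <!-- Channel identifier: applications that must exchange messages share this value.
         The key spelling "eventKey" is an assumption, not confirmed by the transcript. -->
    <add key="eventKey" value="Chat" />
  </appSettings>
</configuration>
```

Two applications pointing at the same cache with different event key values would not see each other's messages, which is how one cache can serve several unrelated SignalR applications.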

The cache name is different, so what I'm going to do is change it to the cache that we have created, and that is signalrcache. As I mentioned earlier, the cache name is not case-sensitive, so you can go with just this. I'm just going to go ahead and save this. Let's go back to the documentation right here. These are the overloads that can be used, and I'm going to show what I'm using in my environment. Initially you're just declaring two string variables, which take the values that we have stored within the web.config, that is, the cache name as well as the event key, and then this is where you specify that NCache should be used as the Backplane. Basically, it's just two or three lines of code that need to be introduced within your application, and you should be good to go to use NCache as a Backplane.
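A minimal sketch of what such a Startup.cs could look like is shown below. It assumes the OWIN-based ASP.NET SignalR 2 setup, a web.config key named eventKey, and a `UseNCache(cache, eventKey)` extension method on the dependency resolver, which is how the NCache documentation presents it as far as can be recalled; treat the method name and overload as assumptions to verify against your installed package. The `SignalRChat` namespace and the null checks are illustrative.

```csharp
using System.Configuration;
using Alachisoft.NCache.SignalR;   // NCache backplane extension (NuGet: Alachisoft.NCache.SignalR)
using Microsoft.AspNet.SignalR;
using Microsoft.Owin;
using Owin;

[assembly: OwinStartup(typeof(SignalRChat.Startup))]

namespace SignalRChat
{
    public class Startup
    {
        public void Configuration(IAppBuilder app)
        {
            // Read the two values stored in web.config.
            // The key name "eventKey" is an assumption; "cache" is named in the demo.
            string cache = ConfigurationManager.AppSettings["cache"];
            string eventKey = ConfigurationManager.AppSettings["eventKey"];

            // Illustrative checks so a missing setting fails early and clearly.
            if (string.IsNullOrWhiteSpace(cache))
                throw new ConfigurationErrorsException("The 'cache' app setting is missing.");
            if (string.IsNullOrWhiteSpace(eventKey))
                throw new ConfigurationErrorsException("The 'eventKey' app setting is missing.");

            // Register NCache as the SignalR backplane.
            // UseNCache is assumed here; confirm the overload in the NCache docs.
            GlobalHost.DependencyResolver.UseNCache(cache, eventKey);

            // Map the SignalR hubs as usual.
            app.MapSignalR();
        }
    }
}
```

The backplane registration is placed before `MapSignalR()` so that the hubs are mapped with the NCache message bus already in place.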

There was a question initially about how difficult it is to configure NCache as a Backplane for your specific application, and it's quite easy. There are just three lines of code that need to be introduced, and you should have NCache as a Backplane for your specific environment. If I go back to my project right here and go to Startup.cs, we have the very same code. There are just some checks present here to ensure that the values are actually in place, and then right here we have this call, which introduces NCache as the Backplane. So, what I'm going to do now is run this application and open up a couple of instances of it, so that I can show you how this chatroom is working. I'm just going to click on Run right here, and it should open up. Okay, so the build is done and it should open up now. Okay, just waiting for it to load; it doesn't usually take this long, but it is right now.

Okay, in the meantime let me just quickly go over the slides that we are using. So, if I just click on Default.html right here, there should be a pop-up which asks me for the name that I'm going to be using. Since this is a chatroom, you need to register yourself with a name, so that when you send a message, the other recipients know exactly who the message is coming from. So, right here, it is asking me for my name. I'm just going to go ahead and give the name Kal. I'm going to write hello and try to send it, and in the meantime I'm going to open up another instance of it right here. It still hasn't sent, so let's verify the cache name was correct and go back to web.config. I think I've got the name wrong; it's signalrcache. I may need to run it again. I'm just going to go ahead and stop, and close these instances. Obviously, since the cache name was not correct, these would not work, so I need to run them again. Okay, so let's verify one more time: signalrcache, it is correct now. It is stopping the instances at this point. Okay, I'm just going to run it one more time; this should be quicker because the build is already done.

In the meantime, if you have any questions, please feel free to ask. Okay, so the build is done and now we're just opening it up.

So, Kal, I have a question here: does it work for SignalR Core as well? Yes, it does, because NCache supports .NET Core as well. So, it does work with that, yes.

Okay, another question is: what messaging capabilities does NCache offer other than SignalR? As I initially mentioned, underneath the whole implementation as a SignalR Backplane, NCache is using the Pub/Sub model, but you can use Pub/Sub separately as well. It doesn't always need to be used as a Backplane for SignalR. Other than that, we have events, and these can be data-driven events or specific user-defined events, with which you can notify other applications about certain things, for example when an item gets added, updated, or deleted. We have these features, and then you also have the continuous query feature.

With that, you specify a certain result set within the cache. It could be multiple items within the very same cache, and if any of them gets updated, the client that requested it is going to be notified about it. So, these are the other ones that are present as well. So, I've just clicked on the default page. It should ask me for my name, so I'm just going to wait for that. Okay, so I'm going to give it the name Kal, and hopefully it works. I'm just going to type hello and send it. Now I'm going to copy this link and paste it right here. Okay, it's asking for the name, so I'm going to give it the name Nick. Okay, so from here I sent a message saying hello, and it was received here as well. Now this is hello from Nick, so I'm just going to type hello from Nick and send it. So, right here, Nick sends this message, hello from Nick, and if you look back into Kal's view, we can see it come up here as well.

So, we can send as many messages here as we want, and they will be received by all the connected clients in this specific scenario. We can see that Kal sent a bunch of messages and they were received on Nick's view as well, so the views get updated automatically. If you just send one last one from Nick as well, bye from Nick, you can see it came up in Nick's view, and we can see it in this view as well. Now, if we quickly look at the cache that we created, which is being used by the application, we can see that there is one item added here. As I mentioned earlier, only one object gets added; the rest is just a queue that is temporarily maintained while messages are being transferred.

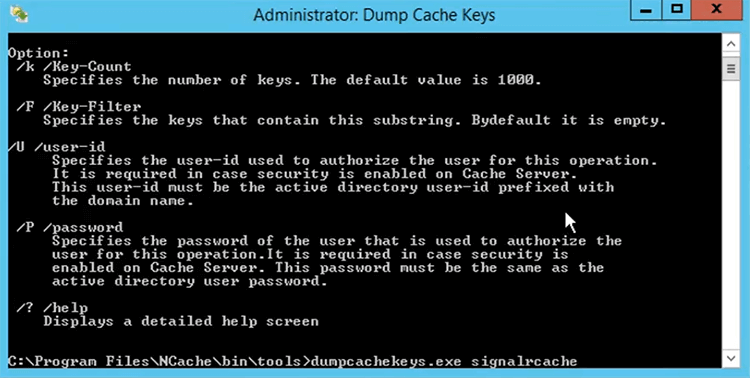

So, if we use a tool within NCache which goes by the name dump cache keys, it's going to dump the keys that are present within the cache. I just want to quickly show you that, so we give it the name signalrcache and hit Enter.

It's going to pull up all the items that are present in the cache. At this point there's only one, and we can see this item placed here with the name chat. This string value is the exact same one that we configured within our application as the value of the event key. So, this is the common key that needs to be used within all of your applications that need to use this specific channel to send and receive messages. It needs to be the same all around, and this is exactly what ensures that all the clients, or web servers, connected to this specific cache get notified about anything that's happening. So, that's how easy it is to have NCache as a Backplane within your application.

If I quickly skim through what we have already discussed, let me just finish the last presentation slide here, which is that NCache underneath is using the Pub/Sub model.

So, you have client applications connected, which can be .NET applications or Java applications, and they're connected as both publishers and subscribers, so it goes both ways. When any connected client sends a message, it is received by all the connected clients, and that way it's ensured that all the messages are actually received by all the connected clients.

So, if we quickly skim through what we have discussed in today's webinar: we discussed SignalR as a technology, what it is, and how it can help in different scenarios where there is a dire need for it, because the applications cannot wait for the client to send a request and only then respond with the respective data. They want the clients to be notified automatically and updated with the latest data pretty quickly. So, we discussed what SignalR is, how it basically works, what the different possible use cases are, and where SignalR is currently being used as well.

So, they're not just future use cases; they're current use cases as well. We also discussed how scaling out SignalR apps can cause some issues with consistency, and how SignalR itself has introduced the Backplane concept, through which it can ensure that all the connected clients get a consistent view and get notified immediately of anything that's going on within the cache. I think there's a question.

Okay. So, let me discuss the bottlenecks associated with the SignalR Backplane. We discussed these three main points, and these, as I mentioned earlier, were also the opportunities which any solution could take. The solution we talked about was an in-memory distributed cache, in this case NCache, and we mapped the different features that NCache possesses onto what a SignalR Backplane really needs. NCache mapped onto them perfectly, and it was an excellent candidate to be a Backplane for a SignalR application. We discussed NCache and how it can be used. We discussed the scalability numbers, and we also went through the hands-on demo of the sample project that comes installed with NCache and how it can be used. And then there's this documentation right here, which helps you through each and every step of this configuration.

So, I think that's about it from my end. Nick, over to you.

Thanks, Kal, that was very informative. If anybody has questions, kindly let us know, or you can type those questions in the questions box. Just to recap, the NCache solution is available to download from our website, www.alachisoft.com. We have the Community Edition, the open-source version, as well as the Enterprise Edition that you can download and take a look at. Enterprise comes with a 30-day trial period that you can use to test it out before proceeding, and open source is of course free to use. So, since there are no questions, I'd like to thank everybody for joining us, and thanks, Kal, for your time. We look forward to seeing you at the next webinar.