NCache Distributed Caching Features

Cache Dependency for Relationship Management

NCache has a Cache Dependency feature that lets you manage relational data with one-to-one, one-to-many, and many-to-many relationships among data elements. Cache Dependency allows you to preserve data integrity in the distributed cache.

Cache Dependency lets you specify that one cached item depends on another cached item. If the second item is ever updated or removed by any application, NCache automatically removes the dependent item from the cache. Cache Dependency also lets you specify multi-level dependencies.
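The cascading behavior described above can be sketched with a small in-memory model. This is only an illustration of the idea, not the NCache API: the `DependencyCache` class, its `Put`/`Remove`/`Contains` methods, and the sample keys are all hypothetical names made up for this example.

```csharp
using System;
using System.Collections.Generic;

// Toy in-memory model of key-based dependencies. Hypothetical; not the NCache API.
public class DependencyCache
{
    private readonly Dictionary<string, object> items = new Dictionary<string, object>();
    // parent key -> keys that depend on it
    private readonly Dictionary<string, HashSet<string>> dependents = new Dictionary<string, HashSet<string>>();
    // dependent key -> the key it depends on
    private readonly Dictionary<string, string> parentOf = new Dictionary<string, string>();

    // Adds or updates an item. Updating an existing key first removes it and its dependents.
    public void Put(string key, object value, string dependsOn = null)
    {
        if (items.ContainsKey(key)) Remove(key);
        items[key] = value;
        if (dependsOn == null) return;
        if (!dependents.TryGetValue(dependsOn, out var children))
            dependents[dependsOn] = children = new HashSet<string>();
        children.Add(key);
        parentOf[key] = dependsOn;
    }

    // Removes an item and, recursively, everything that depends on it.
    public void Remove(string key)
    {
        items.Remove(key);
        if (parentOf.TryGetValue(key, out var parent))
        {
            parentOf.Remove(key);
            if (dependents.TryGetValue(parent, out var siblings)) siblings.Remove(key);
        }
        if (!dependents.TryGetValue(key, out var children)) return;
        dependents.Remove(key); // detach first so the loop never mutates the set it iterates
        foreach (var dependent in children) Remove(dependent);
    }

    public bool Contains(string key) => items.ContainsKey(key);
}

public static class DependencyDemo
{
    public static void Main()
    {
        var cache = new DependencyCache();
        cache.Put("customer:1", "Alice");
        cache.Put("customer:2", "Bob");
        cache.Put("order:10", "Order #10", dependsOn: "customer:1");
        cache.Put("orderline:100", "Line #100", dependsOn: "order:10"); // multi-level

        cache.Remove("customer:1");
        Console.WriteLine(cache.Contains("order:10"));      // False
        Console.WriteLine(cache.Contains("orderline:100")); // False
        Console.WriteLine(cache.Contains("customer:2"));    // True
    }
}
```

In this sketch an update is treated the same way as a removal for dependents, which mirrors the behavior described in the text.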

Read more about cache dependencies.

Database Synchronization

NCache provides a feature that synchronizes your cache with your database automatically. This ensures that data in the cache is always fresh and you don't have data integrity issues. You can configure database synchronization either based on event notifications issued by the database server or by polling.

Event-based synchronization is immediate and real-time, but it can get quite chatty if you update the data in the database very frequently.

Polling is a lot more efficient because NCache can synchronize hundreds or even thousands of rows in a single fetch. However, polling is not immediate and usually lags by a few seconds, although the polling interval is configurable.
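To make the polling trade-off concrete, here is a minimal sketch under stated assumptions. `FakeTable` stands in for a database table that stamps each change with a sequence number, and `PollingSynchronizer` is a hypothetical helper; neither reflects how NCache is actually implemented or configured. The point is only that one polling pass picks up every row changed since the previous pass, however many updates happened in between.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-in for a database table that records a change sequence number per row.
public class FakeTable
{
    private long sequence;
    private readonly Dictionary<int, (string Value, long ChangedAt)> rows =
        new Dictionary<int, (string Value, long ChangedAt)>();

    public long CurrentSequence => sequence;

    public void Upsert(int id, string value) => rows[id] = (value, ++sequence);

    // Returns the ids of all rows changed after the given sequence number.
    public List<int> ChangedSince(long since) =>
        rows.Where(r => r.Value.ChangedAt > since).Select(r => r.Key).ToList();
}

public class PollingSynchronizer
{
    private readonly FakeTable table;
    private readonly Dictionary<int, string> cache;
    private long lastSeen;

    public PollingSynchronizer(FakeTable table, Dictionary<int, string> cache)
    {
        this.table = table;
        this.cache = cache;
        lastSeen = table.CurrentSequence; // start from the current state
    }

    // One polling pass: a single fetch covers every row changed since the last pass.
    public int Poll()
    {
        var changed = table.ChangedSince(lastSeen);
        foreach (var id in changed) cache.Remove(id); // invalidate stale entries
        lastSeen = table.CurrentSequence;
        return changed.Count;
    }
}

public static class PollingDemo
{
    public static void Main()
    {
        var table = new FakeTable();
        table.Upsert(1, "a"); table.Upsert(2, "b"); table.Upsert(3, "c");
        var cache = new Dictionary<int, string> { { 1, "a" }, { 2, "b" }, { 3, "c" } };
        var sync = new PollingSynchronizer(table, cache);

        // Three database updates touch two rows between polls.
        table.Upsert(1, "a2"); table.Upsert(2, "b2"); table.Upsert(1, "a3");

        Console.WriteLine(sync.Poll());   // 2
        Console.WriteLine(cache.Count);   // 1
    }
}
```

An event-based design would instead deliver one notification per update (three in this demo), which is where the chattiness comes from.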

Read more about database synchronization.

Parallel SQL-Like Query, LINQ, & Tags

NCache provides multiple ways for you to search for objects in the distributed cache instead of only relying on keys. This includes a parallel SQL-like query language, Tags, and Groups/sub-groups.

The parallel SQL-like query language lets you search the cache by object attributes rather than keys. The query is distributed to all the cache servers and run in parallel, and the results are then consolidated and returned. This allows you to issue a query like "find all customer objects where the customer's city is San Francisco". From .NET applications, you can also search the distributed cache with LINQ, including lambda expressions.

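```csharp
using System;
using System.Collections;
using Alachisoft.NCache.Web.Caching;   // legacy NCache client API used by this sample
using NCacheQuerySample.Business;      // sample namespace that defines Product

public class Program
{
    public static void Main(string[] args)
    {
        NCache.InitializeCache("myReplicatedCache");

        string query = "SELECT NCacheQuerySample.Business.Product WHERE this.ProductID > 100";

        // Fetch the keys of all cached items that match the search criteria
        ICollection keys = NCache.Cache.Search(query);

        foreach (string key in keys)
        {
            // An item may expire or be removed between Search and Get
            Product prod = NCache.Cache.Get(key) as Product;
            if (prod == null) continue;

            HandleProduct(prod);
            Console.WriteLine("ProductID: {0}", prod.ProductID);
        }

        NCache.Cache.Dispose();
    }

    // Placeholder for application-specific processing
    private static void HandleProduct(Product prod) { }
}
```

Tags & Groups/Sub-Groups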

Tags and Groups/sub-groups provide different ways of grouping cached items. Tags provide a many-to-many grouping in which one tag can contain multiple cached items and one cached item can belong to multiple tags. Groups/sub-groups are a hierarchical way of grouping cached items. You can also search by Tags from within the SQL-like query language.
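The many-to-many nature of tags can be illustrated with a small index that maps each tag to a set of keys. This is a sketch only; `TagIndex` and its methods are hypothetical names for this example and are not part of the NCache API.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Toy tag index: one tag holds many keys, and one key can carry many tags.
public class TagIndex
{
    private readonly Dictionary<string, HashSet<string>> keysByTag =
        new Dictionary<string, HashSet<string>>();

    public void Add(string key, params string[] tags)
    {
        foreach (var tag in tags)
        {
            if (!keysByTag.TryGetValue(tag, out var keys))
                keysByTag[tag] = keys = new HashSet<string>();
            keys.Add(key);
        }
    }

    // Keys carrying the given tag (returned as a copy so callers cannot alter the index).
    public HashSet<string> ByTag(string tag) =>
        keysByTag.TryGetValue(tag, out var keys) ? new HashSet<string>(keys) : new HashSet<string>();

    // Keys carrying every one of the given tags.
    public HashSet<string> ByAllTags(params string[] tags)
    {
        if (tags.Length == 0) return new HashSet<string>();
        var result = ByTag(tags[0]);
        foreach (var tag in tags.Skip(1)) result.IntersectWith(ByTag(tag));
        return result;
    }

    // Keys carrying at least one of the given tags.
    public HashSet<string> ByAnyTag(params string[] tags)
    {
        var result = new HashSet<string>();
        foreach (var tag in tags) result.UnionWith(ByTag(tag));
        return result;
    }
}

public static class TagDemo
{
    public static void Main()
    {
        var index = new TagIndex();
        index.Add("customer:1", "west-coast", "premium");
        index.Add("customer:2", "west-coast");
        index.Add("customer:3", "premium");

        Console.WriteLine(index.ByTag("west-coast").Count);                // 2
        Console.WriteLine(index.ByAllTags("west-coast", "premium").Count); // 1
        Console.WriteLine(index.ByAnyTag("west-coast", "premium").Count);  // 3
    }
}
```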

Read more about different ways to query the distributed cache.

Read-Through, Write-Through, & Auto Refresh

Simplify and scale your application by pushing some of the data access code into the distributed cache cluster. NCache provides a Read-through and Write-through mechanism that enables the cache to read and write data directly to your data source and database.

You implement IReadThruProvider and IWriteThruProvider interfaces and then register your code with the cache cluster. Your code is copied to all the cache servers and called when NCache needs to access your data source and database.

You can combine Read-through with expirations and database synchronization to enable NCache to do auto-refresh and automatically reload a fresh copy of your data when needed.
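The read-through and write-through pattern itself can be sketched as follows. Note the assumptions: `IBackingStore`, `InMemoryStore`, and `ThroughCache` are hypothetical types invented for this illustration. They are not the `IReadThruProvider` and `IWriteThruProvider` interfaces mentioned above, whose actual members are not shown in this document.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical abstraction over the data source; not an NCache interface.
public interface IBackingStore
{
    string Load(string key);              // returns null when the key does not exist
    void Save(string key, string value);
}

// In-memory stand-in for a database, with a counter to show how often it is hit.
public class InMemoryStore : IBackingStore
{
    public readonly Dictionary<string, string> Rows = new Dictionary<string, string>();
    public int Loads;

    public string Load(string key)
    {
        Loads++;
        return Rows.TryGetValue(key, out var value) ? value : null;
    }

    public void Save(string key, string value) => Rows[key] = value;
}

public class ThroughCache
{
    private readonly IBackingStore store;
    private readonly Dictionary<string, string> items = new Dictionary<string, string>();

    public ThroughCache(IBackingStore store) { this.store = store; }

    // Read-through: on a miss, the cache itself loads the value from the store.
    public string Get(string key)
    {
        if (items.TryGetValue(key, out var cached)) return cached;
        var loaded = store.Load(key);
        if (loaded != null) items[key] = loaded;
        return loaded;
    }

    // Write-through: the cache writes to the store, then keeps its own copy.
    public void Put(string key, string value)
    {
        store.Save(key, value);
        items[key] = value;
    }
}

public static class ThroughDemo
{
    public static void Main()
    {
        var store = new InMemoryStore();
        store.Rows["product:1"] = "Widget";
        var cache = new ThroughCache(store);

        Console.WriteLine(cache.Get("product:1")); // Widget (loaded from the store)
        Console.WriteLine(cache.Get("product:1")); // Widget (served from the cache)
        Console.WriteLine(store.Loads);            // 1

        cache.Put("product:2", "Gadget");
        Console.WriteLine(store.Rows["product:2"]); // Gadget
    }
}
```

The application only talks to the cache; the data access code lives behind the store abstraction, which is the simplification the text describes.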

See read-through and write-through for details.

Runtime Data Sharing through Messaging

NCache provides a powerful runtime data sharing and messaging platform that is extremely fast, scalable, and real-time.

NCache allows real-time data sharing with the help of event notifications. There are three types of events that applications can use to collaborate with each other.

The first type is item-based events, where applications register interest in specific cached items and are notified whenever those items are updated or removed.

The second type is application-generated events, which allow your application to use NCache as an event propagation platform and fire custom events into the NCache cluster. NCache then routes these events to other client applications that have registered interest in them.

Finally, NCache can notify you whenever any item in the cache is added, updated, or removed.
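The first event type, item-based notifications, can be sketched with a small in-process model. `NotifyingCache`, `ItemEvent`, and `Watch` are hypothetical names for this example; the real NCache event API is not shown here, and a real cluster would deliver these callbacks across processes rather than within one.

```csharp
using System;
using System.Collections.Generic;

public enum ItemEvent { Updated, Removed }

// Toy cache that notifies registered callbacks when a watched key changes.
public class NotifyingCache
{
    private readonly Dictionary<string, object> items = new Dictionary<string, object>();
    private readonly Dictionary<string, List<Action<string, ItemEvent>>> watchers =
        new Dictionary<string, List<Action<string, ItemEvent>>>();

    // Registers interest in a single cached item.
    public void Watch(string key, Action<string, ItemEvent> callback)
    {
        if (!watchers.TryGetValue(key, out var list))
            watchers[key] = list = new List<Action<string, ItemEvent>>();
        list.Add(callback);
    }

    public void Put(string key, object value)
    {
        bool existed = items.ContainsKey(key);
        items[key] = value;
        if (existed) Notify(key, ItemEvent.Updated); // a first insert is not an update
    }

    public void Remove(string key)
    {
        if (items.Remove(key)) Notify(key, ItemEvent.Removed);
    }

    private void Notify(string key, ItemEvent evt)
    {
        if (!watchers.TryGetValue(key, out var list)) return;
        // Iterate over a copy so callbacks may register further watchers safely.
        foreach (var callback in list.ToArray()) callback(key, evt);
    }
}

public static class EventDemo
{
    public static void Main()
    {
        var cache = new NotifyingCache();
        cache.Put("price:ABC", 100);
        cache.Watch("price:ABC", (key, evt) => Console.WriteLine($"{key} {evt}"));

        cache.Put("price:ABC", 101);  // prints "price:ABC Updated"
        cache.Put("price:XYZ", 50);   // no output: nobody watches this key
        cache.Remove("price:ABC");    // prints "price:ABC Removed"
    }
}
```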

Read more about runtime data sharing.

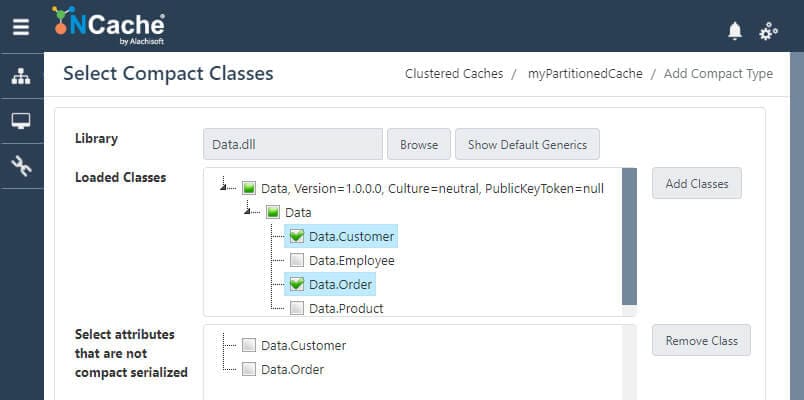

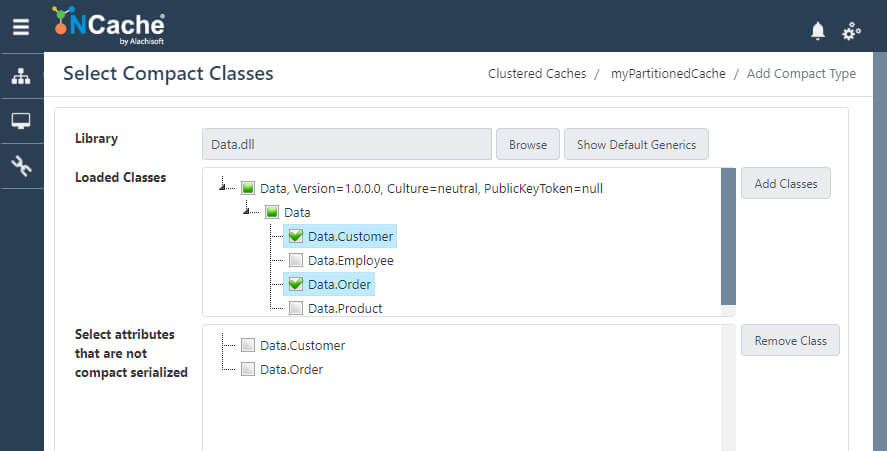

Compact Serialization

NCache provides an extremely fast object serialization mechanism called Compact Serialization that requires no code change on your part to use.

This serialization is faster because it does not use any reflection at runtime. The serialized objects are also more compact than with regular serialization because, instead of storing long string-based type names, NCache registers all the types and stores only type IDs.

Best of all, it requires no code change on your part because NCache generates the serialization code once at runtime and then reuses it. The net result is a noticeable boost in your application performance.
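The size benefit of type IDs can be illustrated with a small sketch. It covers only the type-header idea, not the generated serialization code, and it does not reflect NCache's actual wire format: `TypeRegistry`, `TypeHeader`, and the sample `Product` class are hypothetical names for this example.

```csharp
using System;
using System.Collections.Generic;
using System.IO;

// Sample type used only for this illustration.
public class Product { public int ProductID; public string Name; }

// Assigns a small numeric ID to each registered type.
public class TypeRegistry
{
    private readonly Dictionary<Type, short> idByType = new Dictionary<Type, short>();
    private readonly Dictionary<short, Type> typeById = new Dictionary<short, Type>();

    public short Register(Type type)
    {
        if (idByType.TryGetValue(type, out var existing)) return existing;
        short id = (short)(idByType.Count + 1);
        idByType[type] = id;
        typeById[id] = type;
        return id;
    }

    public short IdOf(Type type) => idByType[type];
    public Type TypeOf(short id) => typeById[id];
}

public static class TypeHeader
{
    // Header that identifies the type by its full name (length-prefixed string).
    public static byte[] WithName(Type type)
    {
        using (var stream = new MemoryStream())
        using (var writer = new BinaryWriter(stream))
        {
            writer.Write(type.AssemblyQualifiedName ?? type.Name);
            writer.Flush();
            return stream.ToArray();
        }
    }

    // Header that identifies the type by its registered 2-byte ID.
    public static byte[] WithId(TypeRegistry registry, Type type)
    {
        using (var stream = new MemoryStream())
        using (var writer = new BinaryWriter(stream))
        {
            writer.Write(registry.IdOf(type));
            writer.Flush();
            return stream.ToArray();
        }
    }
}

public static class HeaderDemo
{
    public static void Main()
    {
        var registry = new TypeRegistry();
        registry.Register(typeof(Product));

        Console.WriteLine("Name header bytes: " + TypeHeader.WithName(typeof(Product)).Length);
        Console.WriteLine("ID header bytes:   " + TypeHeader.WithId(registry, typeof(Product)).Length);
    }
}
```

The name-based header grows with the length of the type and assembly name, while the ID-based header stays at two bytes per object in this sketch, and the registry maps the ID back to the type on the receiving side.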